Unifying cloud and carrier networks

This blog post takes a deep dive into the UNIFY solution for readers who are well versed in SDN, NFV and data center technologies. Learn about the BiS-BiS abstraction, the narrow waist design principle, Infrastructure-as-a-Service (IaaS), and how to fill the gap between SDN, the ETSI Management and Orchestration framework and diverse infrastructure domains.

One of the best-known designs following the narrow waist principle is IP. IP defines the bare minimum of functionality (logical addressing, fragmentation and reassembly, and connectionless packet forwarding) in its interoperation layer (Layer 3), and leaves room for design diversity in the layers above and below. Such a narrow waist is extremely difficult to change in its minimal functions but, if properly selected, it opens the way for spin-off innovation. For example, the IP hourglass, completed with TCP and UDP, allowed innovation in desktops and servers, enabling the almost unlimited number of applications that exist today. As a result, even researchers proposing novel replacements for IP widely assume a narrow waist in their architectures.

Narrow waists have also emerged in other areas. Hardware virtualization (the virtual machine abstraction) for cloud environments created its own narrow waist in low-level compute and storage virtualization. Virtual Machine (VM) abstractions are compatible with legacy operating systems and their valuable ecosystem of applications. In the Software Defined Networking (SDN) domain, the search for a new narrow waist is ongoing, and OpenFlow is one emerging approach. All in all, the community is seeking a narrow waist that combines networking and cloud with the benefits of open innovation and support for legacy systems. In UNIFY we are seeking a narrow waist control plane definition that creates a harmonized compute and networking abstraction and virtualization for generic control needs. This enables processing anywhere, that is, flexible service chaining, in telco service offerings.

UNIFY is an EU-funded FP7 project that aims to unify cloud technologies and carrier networks in a common orchestration framework. This involves a novel service function chaining control plane on top of “arbitrary” domains, including different Network Function (NF) execution environments, SDN networks and legacy data centers. In this way, UNIFY creates a control plane abstraction and control architecture that fills the gap between the high-level orchestration components of the European Telecommunications Standards Institute (ETSI) Management and Orchestration (MANO) framework and the available infrastructure domains (see Fig. 1).

Fig. 1. Unifying cloud and network resources

The key concept of UNIFY is the recurring slicing and aggregation of resources (see Fig. 2). In essence, UNIFY creates a joint Infrastructure as a Service (IaaS) abstraction over network and software resources. The means of control are taken from the SDN abstraction (forwarding behavior control) and from the generalization of compute execution environments. The basic construct of UNIFY is the Big Switch with Big Software (BiS-BiS) resource abstraction (see Fig. 3). The concept combines networking and compute resource abstractions under a joint programmatic Application Programming Interface (API), which represents the narrow waist control point. The BiS-BiS model can abstract not only single Compute Nodes (CN) and Network Elements (NE) but any resource topology, as shown in Fig. 4.

Fig. 2. UNIFY: concept of recurring slicing and aggregation

Fig. 3. UNIFY: Big Switch with Big Software (BiS-BiS) abstraction for joint network and compute control – simple view

Fig. 4. UNIFY: BiS-BiS abstraction for a domain of NEs and CNs
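To make the BiS-BiS idea more concrete, here is a minimal Python sketch of how a domain of CNs and NEs might be aggregated into a single joint node. It is not UNIFY's actual data model: the class names, fields and the `aggregate` helper are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class ComputeNode:
    name: str
    cpu_cores: int
    memory_gb: int


@dataclass
class NetworkElement:
    name: str
    external_ports: List[str]  # ports facing outside the domain


@dataclass
class BisBis:
    """Hypothetical joint view: one big switch plus one big compute pool."""
    name: str
    ports: List[str]
    cpu_cores: int
    memory_gb: int
    nfs: Dict[str, dict] = field(default_factory=dict)  # deployed NF instances
    flowrules: List[Tuple[str, str]] = field(default_factory=list)  # (from, to)


def aggregate(name, compute_nodes, network_elements):
    """Collapse a domain of CNs and NEs into a single BiS-BiS node."""
    return BisBis(
        name=name,
        # Only external ports are exposed; internal links stay hidden.
        ports=[p for ne in network_elements for p in ne.external_ports],
        cpu_cores=sum(cn.cpu_cores for cn in compute_nodes),
        memory_gb=sum(cn.memory_gb for cn in compute_nodes),
    )


domain = aggregate(
    "domain-1",
    [ComputeNode("cn1", 8, 32), ComputeNode("cn2", 4, 16)],
    [NetworkElement("ne1", ["sap1"]), NetworkElement("ne2", ["sap2"])],
)
print(domain.ports, domain.cpu_cores, domain.memory_gb)
# ['sap1', 'sap2'] 12 48
```

The client of this view sees one switch with two ports and one compute pool, regardless of how many physical nodes sit underneath.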

As an example, let’s consider the orchestration task of deploying a service function chain comprising two Virtualized Network Functions (VNFs) between two edge ports. In a traditional orchestration workflow, the VNFs are first assigned to compute resources and only then is the network overlay established, because the orchestrator needs to know where to connect the overlay (see the left of Fig. 5). With joint control, the VNF placement and the forwarding overlay are defined in a single instruction. To further highlight the difference, imagine a multi-level resource virtualization set-up with multiple providers, as depicted in Fig. 2. With the traditional (split control) approach, compute orchestration must go all the way through the hierarchy before the network overlay definition can start. That means it’s not possible to consider networking service definitions during the VNF placement process.

Fig. 5. Example service function chain: traditional workflow and UNIFY's joint control API
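The difference between split and joint control can be sketched in a few lines of Python. The request format and the `deploy_chain` function below are assumptions made for illustration, not the UNIFY API; the point is only that NF placement and forwarding rules arrive in one request and are validated and committed together.

```python
def deploy_chain(bisbis, request):
    """Apply NF placement and forwarding rules as one joint instruction."""
    # Check the compute part of the request.
    needed = sum(nf["cpu_cores"] for nf in request["nfs"].values())
    if needed > bisbis["free_cpu_cores"]:
        raise ValueError("not enough compute in this BiS-BiS")

    # Check the forwarding part: every rule must connect known ports or NFs.
    endpoints = set(bisbis["ports"]) | set(bisbis["nfs"]) | set(request["nfs"])
    for src, dst in request["flowrules"]:
        if src not in endpoints or dst not in endpoints:
            raise ValueError(f"unknown endpoint in flow rule {src} -> {dst}")

    # Both parts are valid, so commit them together.
    bisbis["free_cpu_cores"] -= needed
    bisbis["nfs"].update(request["nfs"])
    bisbis["flowrules"].extend(request["flowrules"])
    return bisbis


# A hypothetical BiS-BiS with two edge ports and 8 free CPU cores.
bisbis = {"ports": ["sap1", "sap2"], "free_cpu_cores": 8, "nfs": {}, "flowrules": []}

# One request carries both the two VNFs and the chain sap1 -> vnf1 -> vnf2 -> sap2.
request = {
    "nfs": {"vnf1": {"cpu_cores": 2}, "vnf2": {"cpu_cores": 2}},
    "flowrules": [("sap1", "vnf1"), ("vnf1", "vnf2"), ("vnf2", "sap2")],
}
deploy_chain(bisbis, request)
print(bisbis["free_cpu_cores"])  # 4
```

In a split-control workflow, the `nfs` part would have to be resolved to concrete hosts before the `flowrules` part could even be expressed.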

However, a single BiS-BiS abstraction is not powerful enough to meet all possible retail control expectations; therefore slices are created using BiS-BiS topologies. For instance, an access control provider may be satisfied with a single BiS-BiS view to enable or disable forwarding between VNFs or service access ports, but a content provider may want finer-grained control over where its caches are deployed in proximity to the users. In the latter case, a topology of BiS-BiS nodes must be created that represents the geographical areas of customer clusters. Note that the physical details of the resource domain can be kept hidden: the virtualization (slice) discloses only the details needed for the expected level of control. Fig. 6 shows a multi-domain set-up where wholesale and retail providers trade resource slices in a hierarchy. Each of the green bars represents a slice (resource virtualization) over which the northbound client has exclusive control. Each autonomous player (a UNIFY component and Service Management) can insert value-added bump-in-the-wire services into the service chains requested by its northbound clients.

Fig. 6. UNIFY multi-domain view of virtualization (slice) and control
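As a rough illustration of how the same physical domain could be offered at different granularities, the Python sketch below builds either a single BiS-BiS view or a per-region BiS-BiS topology. The node names, the `region` field and the `make_view` helper are hypothetical; a real virtualizer would also expose ports and links between the BiS-BiS nodes.

```python
from collections import defaultdict

# Hypothetical physical compute nodes, tagged with the area they serve.
physical_nodes = [
    {"name": "cn-a1", "region": "east", "cpu_cores": 8},
    {"name": "cn-a2", "region": "east", "cpu_cores": 8},
    {"name": "cn-b1", "region": "north", "cpu_cores": 16},
]


def make_view(nodes, granularity):
    """Return the BiS-BiS nodes a client sees; physical names stay hidden."""
    if granularity == "single":
        # One BiS-BiS for the whole domain, e.g. for an access control provider.
        return {"bisbis-0": sum(n["cpu_cores"] for n in nodes)}
    if granularity == "per-region":
        # One BiS-BiS per area, e.g. for a content provider placing caches.
        view = defaultdict(int)
        for n in nodes:
            view[f"bisbis-{n['region']}"] += n["cpu_cores"]
        return dict(view)
    raise ValueError(f"unknown granularity: {granularity}")


print(make_view(physical_nodes, "single"))
# {'bisbis-0': 32}
print(make_view(physical_nodes, "per-region"))
# {'bisbis-east': 16, 'bisbis-north': 16}
```

In both views the client never learns how many physical nodes exist or what they are called; it only sees the capacity it is allowed to control.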

At the end of the day, resource slices are used to run VNFs as part of service function chaining. Such a service function chain definition clearly goes beyond our resource abstraction. However, once the service logic is mapped to VNF types and network constraints, assigning resources to such requests can be done by service-agnostic orchestration. By analogy with IP: once the application puts its payload into IP packets, IP routers could not care less what the payload is when routing them to the destination. That is, a VM request for 2 CPU cores, 2 GB and 10 Mbps of in/out bandwidth means the same orchestration task for a content cache service as for Deep Packet Inspection (DPI) logic.
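A small Python sketch can show what service-agnostic means here. The `place` function below is a plain first-fit placement, used as a stand-in for whatever algorithm a real orchestrator applies; the host list is invented, and the sketch assumes the 2 GB figure refers to memory. The function never reads the `service` label, so the cache and the DPI request are handled identically.

```python
def place(request, hosts):
    """First-fit placement that looks only at resource figures."""
    for host in hosts:
        if (host["cpu_cores"] >= request["cpu_cores"]
                and host["memory_gb"] >= request["memory_gb"]
                and host["bandwidth_mbps"] >= request["bandwidth_mbps"]):
            return host["name"]
    return None  # no host can satisfy the request


# Hypothetical hosts inside a resource slice.
hosts = [
    {"name": "host-a", "cpu_cores": 1, "memory_gb": 4, "bandwidth_mbps": 100},
    {"name": "host-b", "cpu_cores": 4, "memory_gb": 8, "bandwidth_mbps": 100},
]

# Same resource figures, different service logic.
cache = {"service": "content-cache", "cpu_cores": 2, "memory_gb": 2, "bandwidth_mbps": 10}
dpi = {"service": "dpi", "cpu_cores": 2, "memory_gb": 2, "bandwidth_mbps": 10}

print(place(cache, hosts), place(dpi, hosts))
# host-b host-b
```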

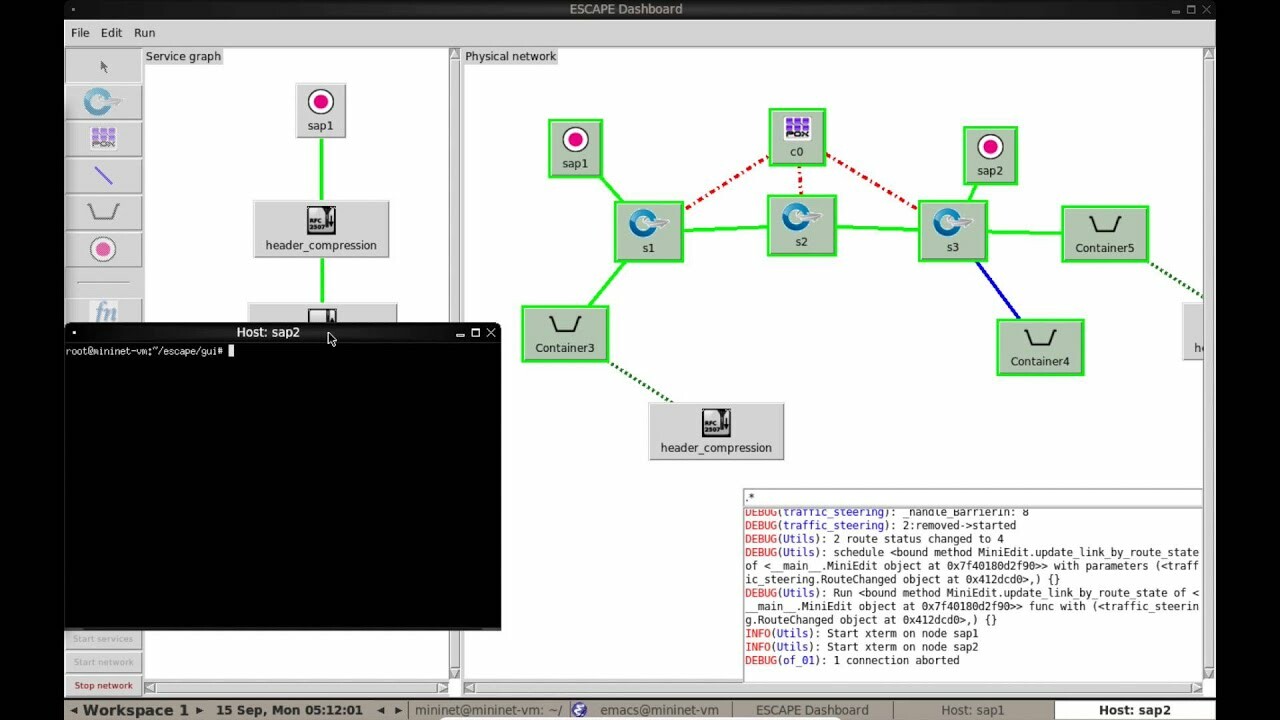

As discussed above, the key to success lies in the proper abstraction and control API. The UNIFY project proposed a data model for virtualization (slicing) and control in a paper earlier this year. We also ran an integrated proof-of-concept prototype demonstration at SIGCOMM 2015, with an SDN transport domain, a Click compute execution environment and an OpenStack cloud.

As for our future plans, the work will continue in the recently launched 5GEx project, part of the EU Horizon2020 program, under the 5G Infrastructure Public Private Partnership (5G-PPP). UNIFY’s technical solution currently operates over a static hierarchy of domains or technologies, shown in Fig. 7, where “U” denotes the UNIFY control API. In 5GEx, we will look into the business-to-business reference point between UNIFY domains (see B2B in Fig. 7) to enable dynamic composition of UNIFY’s control plane hierarchy. This composition process comprises the negotiation and configuration of the corresponding virtualization (slice) components. Once the virtualizers are in place, the responsibility domain is extended over the resources presented by the provider (see the increased control view of Service Provider A in Fig. 8).

Fig. 7. UNIFY: Unconnected control domains with Business-to-Business interface (5GEx)

Fig. 8. 5GEx: Dynamic composition of UNIFY control plane structure

Finally, two things are key to implementing this vision: support for the common resource abstraction interface, and logically centralized functionality that can orchestrate across multiple domains, or even multiple operators, and make intelligent decisions on resource assignment and the placement of network functions. One example of a host for this functionality could of course be Ericsson’s Cloud Manager.
