Cryptography and privacy: protecting private data

You may have experienced this scenario yourself at a dinner party, when someone asks, “What do you do for a living?” For some, this natural conversation starter is not that easy to answer. Personally, I often find myself in a position similar to that of the physicist Richard Feynman, who was once asked in an interview, “What is magnetism?” Reflecting on the question’s complexity, he took it upon himself to ask why ice is so slippery, to illustrate how seemingly simple questions are often difficult to explain.

In recent years, I’ve been working in an area that is also hard to describe to a general audience: cryptography. I generally describe cryptography as a set of technologies that help us protect data. Often the discussion with my table partner moves on to privacy, the need to protect people’s data and, nowadays, the GDPR. Sometimes, when I am tired, I give in and leave the impression that I work with privacy-related matters. More often than not, though, I try to explain that cryptography and privacy are different areas, and that I see cryptography as providing the tools needed to make the processing and communication of private data secure.

Therein lies the connection with privacy and the protection of people’s data. If my audience shows interest, I even dare to say that in many cases cryptography doesn’t ‘solve’ protection issues but, hopefully, turns them into simpler problems: for example, when data is stored or sent, only the key has to be kept secret instead of the entire data set.
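
To make the point concrete, here is a toy sketch in which a 32-byte key protects a record of arbitrary length. The keystream construction (SHA-256 in counter mode) and the names `keystream` and `xor_cipher` are assumptions chosen for illustration; a real system would use a vetted authenticated cipher such as AES-GCM from an established library.

```python
import hashlib
import secrets


def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Expand a short key into `length` pseudorandom bytes (SHA-256 in counter mode).

    Toy construction for illustration only; use AES-GCM or ChaCha20-Poly1305 in practice.
    """
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


def xor_cipher(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Encrypt or decrypt (the same operation) by XOR-ing data with the keystream."""
    return bytes(d ^ k for d, k in zip(data, keystream(key, nonce, len(data))))


key = secrets.token_bytes(32)    # the only thing that must stay secret
nonce = secrets.token_bytes(16)  # may be public, but must never repeat for the same key
record = b"name=Alice;imsi=240011234567890;" * 1000  # 32,000 bytes of data

ciphertext = xor_cipher(key, nonce, record)          # safe to store or send
assert xor_cipher(key, nonce, ciphertext) == record  # the key holder recovers the data
```

The secret shrinks from 32,000 bytes to 32. This sketch offers no integrity protection, which is one more reason to prefer an authenticated mode in practice.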

Cryptography does not solve privacy problems, but it is a useful tool

But imagine that my table partner turns out to be very interested in this area and asks about the types of technical solutions that address privacy and data ownership. Unfortunately, it is getting late, so we cannot go through all the tools in the crypto and security toolbox, but I share some of the ways we implement protection. One way is to program our ICT systems to prevent people from accessing data by enforcing rules, such as login procedures and checks of a user’s role before access or processing is authorized. For many years, this has been the prevalent way of protecting data during use or processing.
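
A rule-based check of this kind can be sketched as simple role-based access control. The role names, the permission table and the `is_authorized` helper below are hypothetical; real systems load such policies from a central policy store and tie them to an authenticated identity.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission policy; real systems load this from a policy store.
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "data_steward": {"read", "process"},
    "admin": {"read", "process", "delete"},
}


@dataclass
class User:
    name: str
    role: str
    logged_in: bool  # result of the login procedure


def is_authorized(user: User, action: str) -> bool:
    """Grant an action only to logged-in users whose role permits it (deny by default)."""
    if not user.logged_in:
        return False
    return action in ROLE_PERMISSIONS.get(user.role, set())


alice = User("alice", "analyst", logged_in=True)
print(is_authorized(alice, "read"))     # True
print(is_authorized(alice, "process"))  # False: analysts may not process
```

Such checks protect data only as long as the system enforcing them, and everyone who administers it, can be trusted, which is where encryption comes in.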

When data is transmitted, either in space (communication) or in time (storage), encryption has been an extremely helpful tool. Encryption has also become a tool for improving the security of solutions that protect data while it is being processed, especially if we want to use the cloud. We will come to that a little further on.

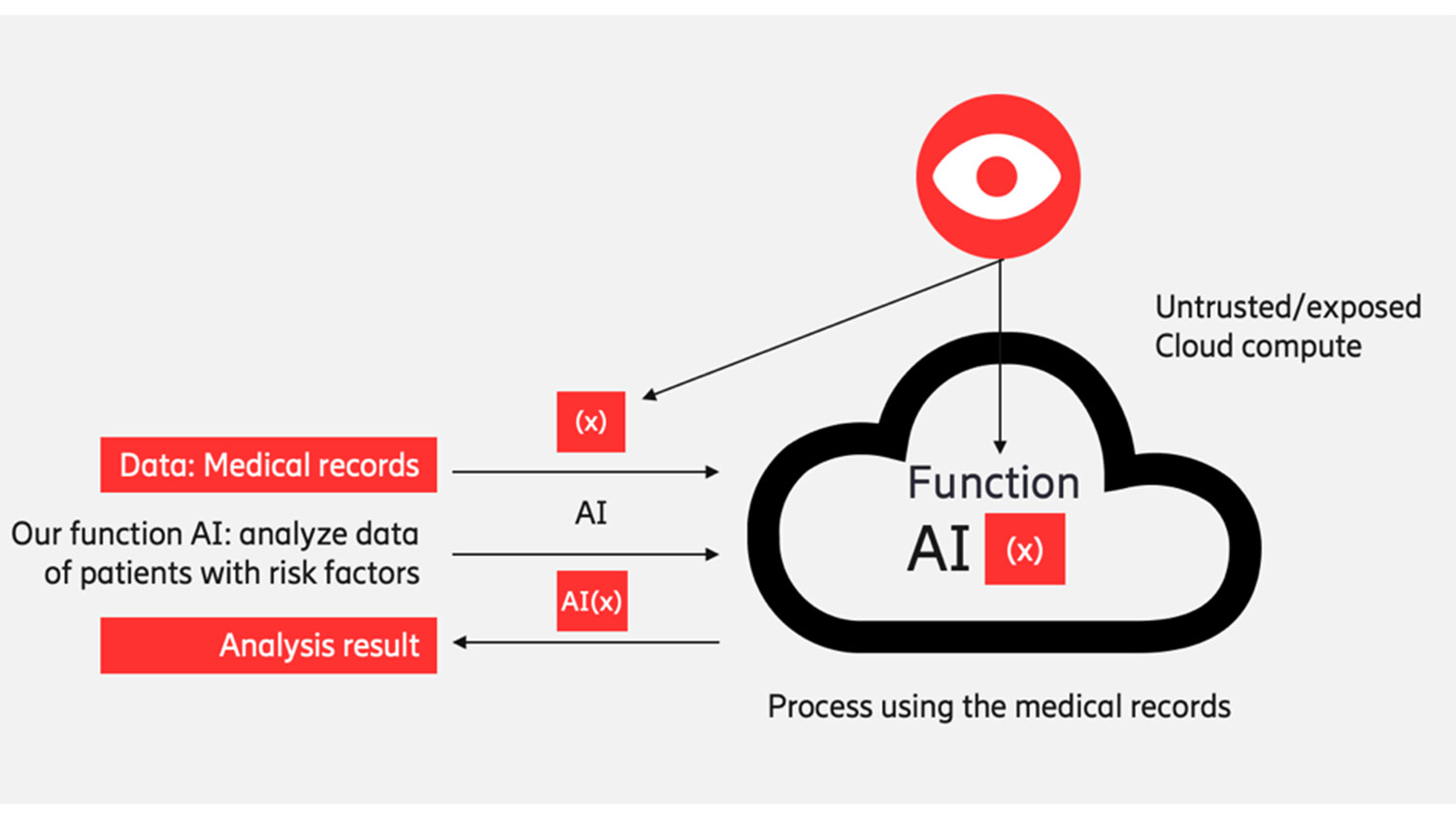

Figure 1: Outsourcing computations – the problem

De-identification and crypto

People often have questions about processing data in public cloud systems, especially when the data contains personal information such as names, credit card numbers or international mobile subscriber identity (IMSI) numbers. A natural approach is to apply some form of de-identification to the data. How do we achieve this?

I decide to give my dinner partner some practical examples. I explain that if the data items are not relevant to the processing, we can simply remove them or randomize their values. More often, though, we want to run statistics and other analyses that also use the privacy-related items. Then we can use anonymization or, alternatively, pseudonymization. One must be aware that this may not solve the problem completely: depending on the data, anonymization by simple substitution may leave traces of information that could potentially be harvested to identify a person. If the transformed data must be recoverable and must keep the same format as the original (for example, a credit card number or an IMSI number), Format Preserving Encryption (FPE) can be used.
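
A minimal sketch of keyed pseudonymization, assuming HMAC-SHA256 as the pseudonym function and a hypothetical key held only by the data owner. A plain, unkeyed hash could be reversed by brute force over the limited space of IMSI values; the keyed version cannot be recomputed without the key, yet equal inputs still map to equal pseudonyms, so statistics remain possible. The IMSI values are made up.

```python
import hashlib
import hmac


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed pseudonym (HMAC-SHA256, first 16 hex digits)."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


# Hypothetical key; in practice generated randomly and stored apart from the data.
key = b"example-key-held-only-by-the-data-owner"

# Made-up call records: (IMSI, minutes of use)
calls = [("240011234567890", 12), ("240019876543210", 5), ("240011234567890", 7)]

# Aggregate per subscriber without storing the IMSI itself.
minutes_per_subscriber = {}
for imsi, minutes in calls:
    alias = pseudonymize(imsi, key)
    minutes_per_subscriber[alias] = minutes_per_subscriber.get(alias, 0) + minutes

print(sorted(minutes_per_subscriber.values()))  # [5, 19]
```

Under the GDPR, pseudonymized data like this still counts as personal data, because whoever holds the key can link it back to individuals.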

Although this may sound simple, it is technically demanding. Moreover, the only FPE algorithm that the National Institute of Standards and Technology (NIST) still approves is FF1, from its publication SP 800-38G, and its use is guarded by expensive licenses. Hence, in practice, one first checks whether anonymization alone will do the job. FPE is an example of pseudonymization, in which data that could be linked to individuals is transformed using a code, an algorithm or a pseudonym.
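
FF1 itself is too long to reproduce here, but its core idea, a Feistel network run over digit strings, fits in a short sketch. The code below is not FF1 and is not secure: it swaps FF1’s AES-based round function for HMAC-SHA256, and the key, the tweak handling and the function names are assumptions for illustration. It shows how a 16-digit card number encrypts to another 16-digit string and back.

```python
import hashlib
import hmac

ROUNDS = 10  # FF1 also uses 10 Feistel rounds


def _round(key: bytes, tweak: bytes, i: int, half: str) -> int:
    """Pseudorandom round function (HMAC-SHA256 here; FF1 uses AES instead)."""
    msg = f"{i}:{half}:".encode("ascii") + tweak
    return int.from_bytes(hmac.new(key, msg, hashlib.sha256).digest(), "big")


def _split(digits: str) -> tuple:
    """Split into halves a (u digits) and b (v digits), as FF1 does."""
    if len(digits) < 2 or any(ch not in "0123456789" for ch in digits):
        raise ValueError("expected a string of at least two decimal digits")
    u = len(digits) // 2
    return digits[:u], digits[u:], u, len(digits) - u


def fpe_encrypt(key: bytes, digits: str, tweak: bytes = b"") -> str:
    """Map a digit string to another digit string of the same length."""
    a, b, u, v = _split(digits)
    for i in range(ROUNDS):
        m = u if i % 2 == 0 else v  # current length of a
        c = (int(a) + _round(key, tweak, i, b)) % 10**m
        a, b = b, str(c).zfill(m)
    return a + b


def fpe_decrypt(key: bytes, digits: str, tweak: bytes = b"") -> str:
    """Invert fpe_encrypt by running the rounds backwards."""
    a, b, u, v = _split(digits)
    for i in reversed(range(ROUNDS)):
        m = u if i % 2 == 0 else v
        c = (int(b) - _round(key, tweak, i, a)) % 10**m
        a, b = str(c).zfill(m), a
    return a + b


key = b"hypothetical-fpe-key"
card = "4111111111111111"
token = fpe_encrypt(key, card)
print(len(token), token.isdigit())      # 16 True
print(fpe_decrypt(key, token) == card)  # True
```

Note that the output will generally fail the card number’s Luhn check, one of the details that real FPE deployments have to handle.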

Claiming data ownership

Encryption also comes with another interesting and useful property related to data ownership: if you own the key, you can decrypt. Cryptography can also help show that you are the legitimate owner of the data. Here we enter the area of secure time stamping, digital ledgers and blockchain systems, a topic that would fill yet another conversation. Digital watermarking can also be considered, but the ‘graveyard of broken watermark schemes’ has quite a large body count.
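
Secure time stamping and ledgers build on a simple idea: each record includes a hash of the previous one, so later edits become detectable. The sketch below, with hypothetical function names, illustrates only that chaining. Its timestamps are self-asserted, whereas a real time-stamping service (for example, one following RFC 3161) signs them, and a real ledger would also use digital signatures to tie each claim to its owner.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a record deterministically (sorted keys, JSON encoding)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def add_claim(ledger: list, owner: str, data: bytes) -> None:
    """Append an ownership claim that commits to the data and to the previous entry."""
    body = {
        "owner": owner,
        "data_sha256": hashlib.sha256(data).hexdigest(),
        "timestamp": time.time(),
        "prev_hash": ledger[-1]["hash"] if ledger else GENESIS,
    }
    ledger.append({**body, "hash": _digest(body)})


def verify(ledger: list) -> bool:
    """Recompute every hash and link; any edited entry breaks the chain."""
    prev = GENESIS
    for entry in ledger:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


ledger = []
add_claim(ledger, "alice", b"dataset v1")
add_claim(ledger, "bob", b"dataset v2")
print(verify(ledger))           # True
ledger[0]["owner"] = "mallory"  # attempt to rewrite history
print(verify(ledger))           # False
```

An attacker able to recompute every later hash could still rewrite such a chain, which is why real systems publish or sign the latest hash.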

However, watermarking schemes are in use and are often effective when coupled with laws that impose hefty fines if the cryptographic protection is broken. You may say this sounds like Digital Rights Management (DRM) systems and media protection. Indeed, it does. Although we are talking about different areas, there are certainly parallels between the systems developed for media protection and those designed to protect ownership of personal data.

This is when my observant dinner partner remarks that “to associate data with someone (so that he or she can claim ownership) requires the existence of an identity.” Cryptography helps here by providing solutions for creating secure digital identities, for example SIM cards and electronic credit cards, or schemes like OpenID, with or without biometrics.

Confidential computing: secure hardware for the good

Going back to our practical example, let’s assume that sensitive data is scattered throughout the documents being processed, so we cannot easily use anonymization or format preserving encryption. One option we have today is confidential computing. With confidential-computing-enabled hardware in the cloud, we can create protected execution environments in which data and code are used for certain processing tasks, while other processes outside the environment cannot access the data or even the code. Seen from outside the protected execution environment, the code and data are encrypted and integrity protected.

Today, a few confidential computing technologies are especially useful: Intel SGX, AMD SEV and IBM Pervasive Encryption. Arm, the company behind the processor technology used in almost all mobile devices, is bringing a solution for its processors. The potential of confidential computing is enormous, and today these solutions are being fielded by players such as Microsoft, Amazon and Google in their cloud systems.

Homomorphic encryption: the Holy Grail of confidential computing

While hardware-based confidential computing technologies are amazing and very helpful for overcoming concerns about processing sensitive data (and also sensitive code) in the cloud, they create a dependency on special hardware and require trust in the hardware vendor.

So the next question comes almost automatically: “Can we do the desired processing on encrypted data, compute the encrypted result and then decrypt the result in our own secure environment?” That would be the holy grail of secure computation in the cloud. Thirty years ago, we could do this only for certain computations, such as multiplying encrypted data or adding encrypted data, but we could not freely combine these operations or perform other ones.
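
The “addition only” case can be illustrated with the Paillier cryptosystem (1999), which is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. The sketch below uses deliberately tiny textbook primes, which offer no security; real deployments use moduli of at least 2,048 bits. Unpadded (“textbook”) RSA behaves analogously for multiplication. The function names are our own, and the code assumes Python 3.9 or later.

```python
import math
import secrets

# Toy Paillier key from tiny primes: insecure, for illustration only.
P, Q = 293, 433
N = P * Q                     # public modulus; plaintexts live in range(N)
N2 = N * N
LAM = math.lcm(P - 1, Q - 1)  # private key
MU = pow(LAM, -1, N)          # private key; this shortcut holds for generator g = N + 1


def encrypt(m: int) -> int:
    """c = (N + 1)^m * r^N mod N^2 for a random r coprime to N."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return pow(N + 1, m, N2) * pow(r, N, N2) % N2


def decrypt(c: int) -> int:
    """m = L(c^LAM mod N^2) * MU mod N, where L(x) = (x - 1) // N."""
    return (pow(c, LAM, N2) - 1) // N * MU % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the hidden plaintexts (mod N)."""
    return c1 * c2 % N2


c_sum = add_encrypted(encrypt(20), encrypt(22))  # computed without the private key
print(decrypt(c_sum))  # 42
```

Whoever computes `add_encrypted` never sees 20, 22 or 42; only the holder of `LAM` and `MU` can read the result.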

Figure 3: Using confidential computing for secure processing of sensitive data in the cloud.

For many years, the cryptographic community tried to solve this problem by looking into (fully) homomorphic encryption, and we all waited with anticipation. But it was not until 2009 that Craig Gentry from IBM gave the first proof that fully homomorphic encryption was feasible. I consider this the most important discovery in cryptography in the last two decades. Unfortunately, Gentry’s first constructions were extremely complex and could not be applied in practical, commercial settings. This remained the case for many years after his initial proof, but in the last five years progress has taken us much further. We can now see commercial solutions that combine homomorphic encryption with AI processing. There is still a large performance penalty in the form of much slower processing, but if protection is important, a slowdown by a factor of, say, 100 to 1,000 could be acceptable. Furthermore, we have now reached the point where it becomes interesting to look at what hardware acceleration can do for homomorphic encryption; see, for example, the work of Intel and Microsoft in DARPA’s DPRIVE program.
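
To see what combining operations freely means, here is a toy version of the symmetric “somewhat homomorphic” scheme over the integers by van Dijk, Gentry, Halevi and Vaikuntanathan (2010), which builds on Gentry’s ideas. A bit is hidden as c = p*q + 2*r + bit; adding ciphertexts XORs the bits and multiplying them ANDs the bits, so in principle any Boolean circuit can be evaluated on encrypted data. The parameters are illustrative and not secure, and the function names are our own.

```python
import secrets

# Illustrative sizes only; secure parameters are far larger.
P_BITS, Q_BITS, NOISE_BITS = 256, 512, 16


def keygen() -> int:
    """Secret key: a random odd integer p of exactly P_BITS bits."""
    return secrets.randbits(P_BITS) | 1 | (1 << (P_BITS - 1))


def encrypt(p: int, bit: int) -> int:
    """c = p*q + 2*r + bit; the small value 2*r + bit is the 'noise'."""
    q = secrets.randbits(Q_BITS)
    r = secrets.randbits(NOISE_BITS)
    return p * q + 2 * r + bit


def decrypt(p: int, c: int) -> int:
    """Correct only while the noise stays below p."""
    return (c % p) % 2


def h_xor(c1: int, c2: int) -> int:
    return c1 + c2  # noise values add


def h_and(c1: int, c2: int) -> int:
    return c1 * c2  # noise values multiply, so circuit depth is limited


p = keygen()
for a in (0, 1):
    for b in (0, 1):
        for c in (0, 1):
            # Evaluate (a AND b) XOR c on ciphertexts only.
            ct = h_xor(h_and(encrypt(p, a), encrypt(p, b)), encrypt(p, c))
            assert decrypt(p, ct) == (a & b) ^ c
print("all 8 cases decrypt correctly")
```

Each multiplication roughly doubles the size of the noise, so after a handful of AND gates it exceeds p and decryption fails. Gentry’s key insight, bootstrapping, refreshes the noise by homomorphically evaluating the decryption itself; this is what turns such a scheme into a fully homomorphic one, at the performance cost described above.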

Private data and software in the cloud – yes?

Isn’t this all truly exciting? I certainly believe so. By combining several techniques, we are getting closer to having a good set of Privacy Enhancing Technologies (PETs). These will allow us to perform computations in the public cloud on all sorts of sensitive data, as well as protect AI and ML tools. In the future, de-identification might be considered too unreliable for unstructured data, while confidential computing and homomorphic encryption may prove easier to apply as generic tools, even if they are slower. A lot of interesting things are happening in the area of cryptography and privacy right now. Before I leave for home, my final words to my table partner are, “I’m becoming more confident that in many cases we will have good technologies to protect personal information when we process it in the cloud. And yes, cryptography is useful here.”

Find out more

Privacy is much more than crypto. For an overview of many aspects of privacy-preserving technologies, see the chapters in this book.

For a more regulatory view from the EU, see the work of the Article 29 Data Protection Working Party.

If you are interested in reading more about PETs, you can also check the ENISA document Readiness Analysis for the Adoption and Evolution of Privacy Enhancing Technologies.

At the Ericsson Blog, we provide insight to make complex ideas on technology, innovation and business simple.