Why side-channel analysis attacks are increasing and how to stop them

- Attacks based on so-called side-channel analysis are a growing problem.

- An attacker can extract secrets by measuring side-channel leakage, such as power consumption.

- We believe this problem is best solved by letting each actor, from the creator of the source code to the hardware designer, contribute their respective expertise.

Side-channel analysis (SCA) – what it is and why it matters

Side-channel analysis (SCA) is an umbrella term for several different methods of exploiting unintentional information leakage from a process (such as a computer program or application) in a device. In this blog post, we are going to take a closer look at physical side-channels, the current state of the art, what the future holds for countermeasures against SCA, and how SCA can also be used for good.

Every device, be it a constrained Internet-of-Things (IoT) device, a smartphone, a laptop, or a supercomputer with thousands of CPU cores, leaks some sort of side-channel information for every instruction executed, piece of data processed, or input to the device. The side-channel itself may take many different forms. It may be based on

- Physical properties, such as power consumption or electromagnetic emissions

- Logical properties, such as memory access patterns or processing time.

An attacker may be able to reveal both the operations performed and the data processed by the device by learning how variations in side-channel emissions correlate with processes within the device. Secret data such as a cryptographic key can be extracted by observing and categorizing data-dependent side-channel emissions, that is, emissions where the content of the secret data directly alters the side-channel leakage.

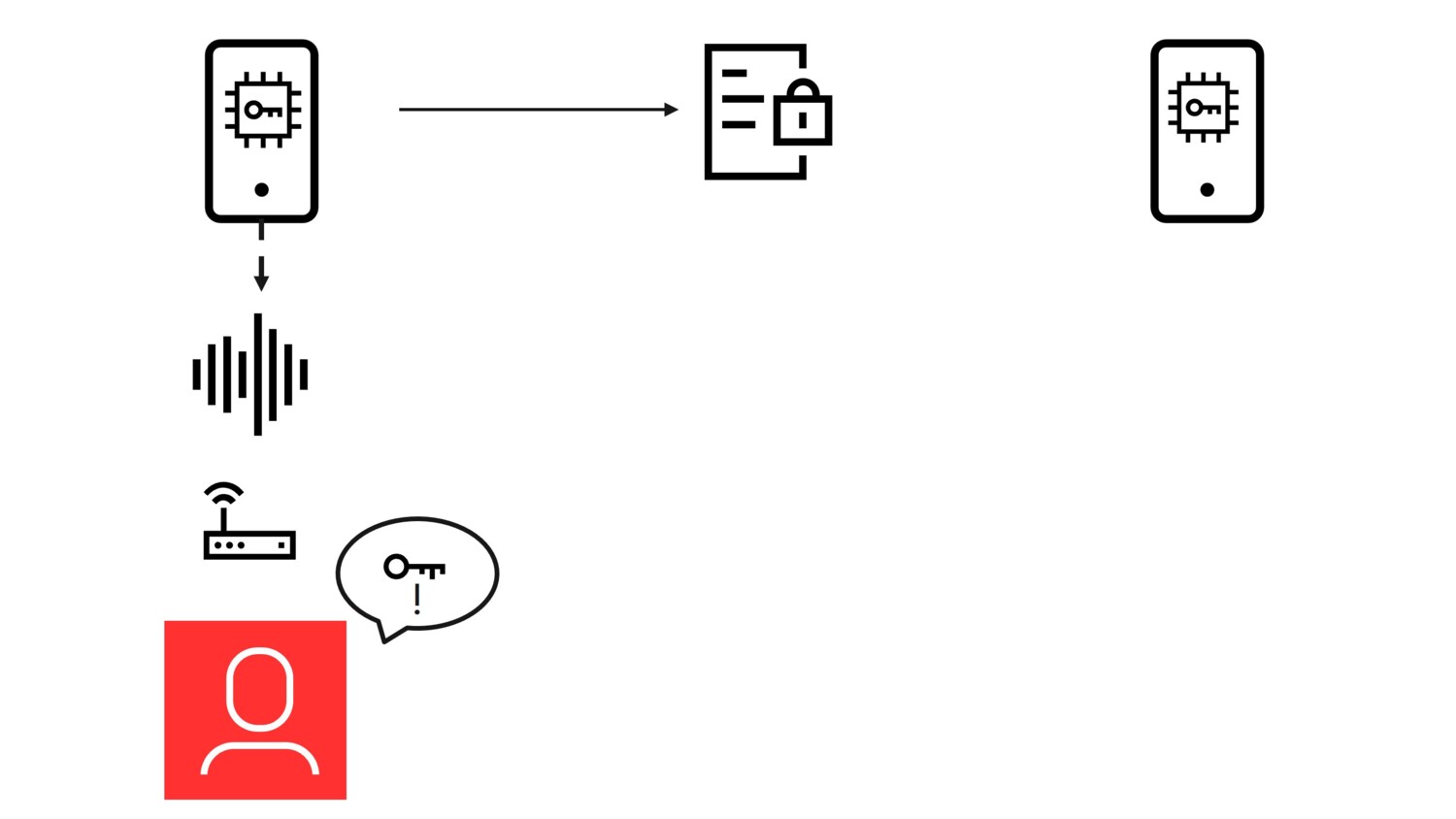

Figure 1 - The concept of side-channel analysis.

Figure 1 shows the steps of the concept:

- A device encrypts information with a cryptographic key and sends it to another device.

- An attacker observing this information cannot extract the key.

- However, an eavesdropper observing the side-channel leakage from the encryption process may extract the cryptographic key.

When performing an SCA attack, the attacker first collects traces, that is, series of side-channel measurements recorded over time while the device runs a known process. Typically, the targeted sensitive data is kept constant, while known input or output data varies between traces. Each trace forms a curve representing some side-channel quantity, such as power consumption, over time. It is common to use a divide-and-conquer approach, where the attacker guesses a small part of the secret at a time and determines which guess is most likely to be correct.
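To make the divide-and-conquer idea concrete, here is a minimal, simulated sketch of correlation power analysis (CPA), one common way of ranking key guesses. It assumes a hypothetical device whose power consumption leaks the Hamming weight of an S-box output; the 4-bit S-box of the PRESENT cipher stands in for a real cipher's S-box, and the key, noise level and trace count are illustrative. The 8-bit key is recovered as two independent 4-bit halves, so the attacker tests 2 x 16 guesses instead of 256.

```python
import random

# 4-bit S-box of the PRESENT block cipher, used here as a small nonlinear
# stand-in for a real cipher's S-box (e.g. the 8-bit AES S-box).
SBOX = [0xC, 0x5, 0x6, 0xB, 0x9, 0x0, 0xA, 0xD,
        0x3, 0xE, 0xF, 0x8, 0x4, 0x7, 0x1, 0x2]


def hw(x: int) -> int:
    """Hamming weight (number of set bits), a common power-leakage model."""
    return bin(x).count("1")


def pearson(xs, ys) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    vx = sum((a - mx) ** 2 for a in xs)
    vy = sum((b - my) ** 2 for b in ys)
    return cov / (vx * vy) ** 0.5


def collect_traces(key: int, n: int, noise: float, rng: random.Random):
    """Simulate n two-sample traces for a constant 8-bit key and random known inputs.
    Sample 0 leaks HW of the S-box output for the low nibble, sample 1 for the high nibble."""
    inputs, traces = [], []
    for _ in range(n):
        p = rng.randrange(256)
        v = p ^ key
        lo, hi = SBOX[v & 0xF], SBOX[v >> 4]
        traces.append([hw(lo) + rng.gauss(0, noise), hw(hi) + rng.gauss(0, noise)])
        inputs.append(p)
    return inputs, traces


def recover_key(inputs, traces) -> int:
    """Divide and conquer: attack each 4-bit key nibble independently."""
    recovered = 0
    for nibble in range(2):
        shift = 4 * nibble
        samples = [t[nibble] for t in traces]
        # Correlate the leakage predicted by each guess with the measured sample.
        scores = [
            pearson([hw(SBOX[((p >> shift) & 0xF) ^ g]) for p in inputs], samples)
            for g in range(16)
        ]
        recovered |= max(range(16), key=scores.__getitem__) << shift
    return recovered


rng = random.Random(1)
inputs, traces = collect_traces(key=0x3A, n=1000, noise=0.5, rng=rng)
recovered_key = recover_key(inputs, traces)
```

With enough traces, the correct guess for each nibble typically shows the highest correlation; with fewer traces or more noise, a wrong guess can overtake it.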

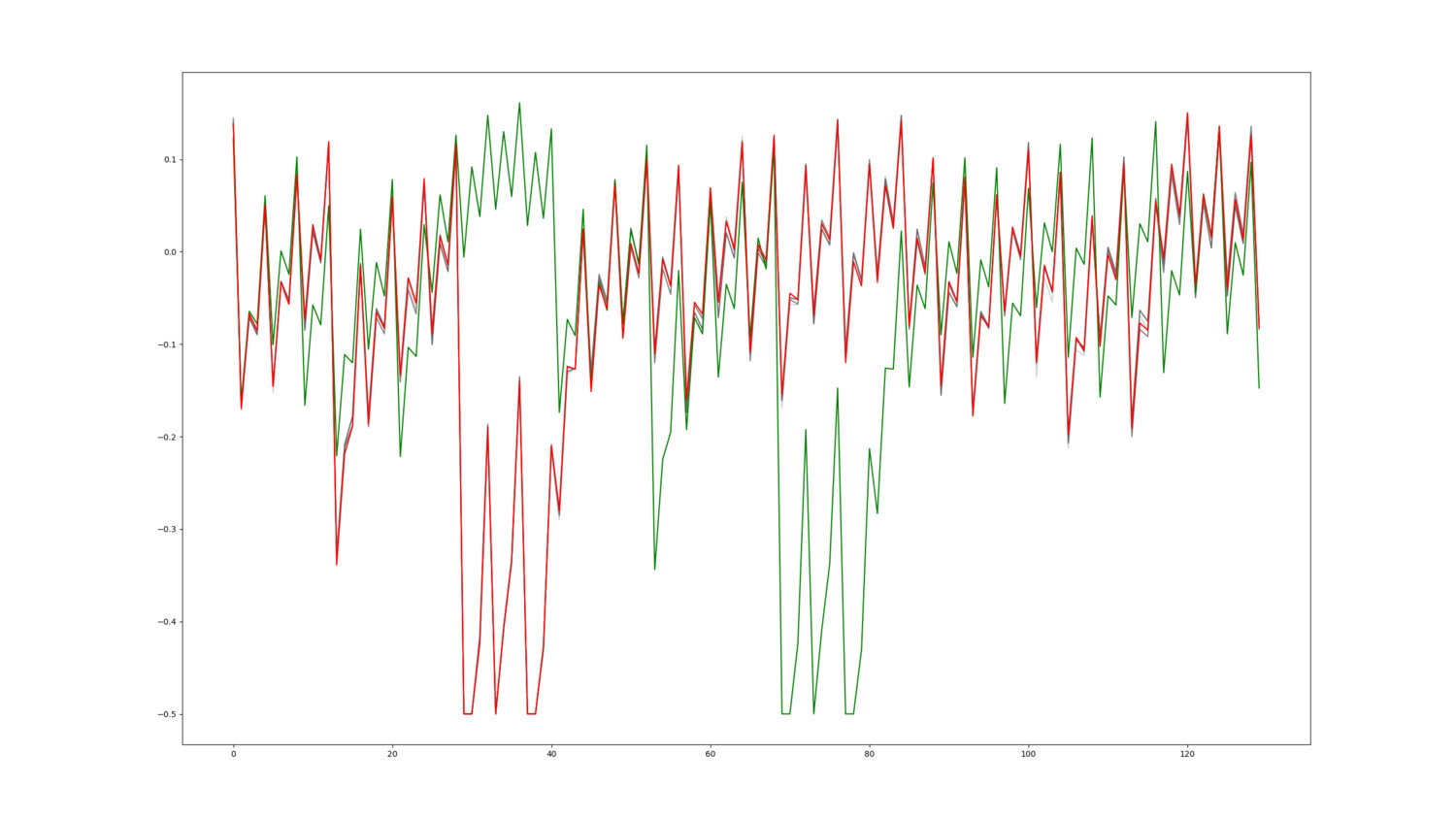

Figure 2 – Example of collected traces

Figure 2 shows several traces of the power consumption of a device. Each curve shows how the measured voltage rises and falls over time relative to the static power required to run the device.

The traces are collected from a device running the same process each time but receiving different input. The red and grey traces are almost indistinguishable, indicating that the device performed the same operations for these inputs. The green trace, on the other hand, indicates that the device performed different operations. That is, the input the device received when the green trace was measured made the device behave differently.

By learning how the shapes in the traces relate to the data being processed, the attacker can make well-educated guesses about portions of the sensitive data at specific points in time, for example whether a certain bit of the sensitive data is a one or a zero.
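Figure 2 does not specify the algorithm behind its traces, so the following sketch uses the textbook example of simple power analysis instead: a square-and-multiply modular exponentiation that performs an extra multiplication only for the 1-bits of a secret exponent. The power levels and segment length are hypothetical values for a simulated device. The point is that different operations produce differently shaped trace segments, from which the attacker reads the exponent bit by bit.

```python
import random

SQUARE_LEVEL = 1.0    # hypothetical mean power of a modular squaring
MULTIPLY_LEVEL = 2.0  # hypothetical mean power of a modular multiplication
SEGMENT = 5           # hypothetical number of samples per operation


def modexp_with_trace(base, exponent, modulus, noise, rng):
    """Left-to-right square-and-multiply that also emits a simulated power trace.
    The multiplication runs only for 1-bits, so the operation sequence leaks the exponent."""
    result, trace = 1, []
    for bit in bin(exponent)[2:]:
        result = result * result % modulus
        trace += [SQUARE_LEVEL + rng.gauss(0, noise) for _ in range(SEGMENT)]
        if bit == "1":
            result = result * base % modulus
            trace += [MULTIPLY_LEVEL + rng.gauss(0, noise) for _ in range(SEGMENT)]
    return result, trace


def read_exponent(trace):
    """Simple power analysis: label each segment S or M by its mean level,
    then read one bit per S: an S followed by an M is a 1, otherwise a 0."""
    cut = (SQUARE_LEVEL + MULTIPLY_LEVEL) / 2
    ops = []
    for i in range(0, len(trace), SEGMENT):
        segment = trace[i:i + SEGMENT]
        ops.append("M" if sum(segment) / len(segment) > cut else "S")
    bits = [
        "1" if i + 1 < len(ops) and ops[i + 1] == "M" else "0"
        for i, op in enumerate(ops) if op == "S"
    ]
    return int("".join(bits), 2)


rng = random.Random(7)
secret_exponent = 0b1011001110001101
result, trace = modexp_with_trace(5, secret_exponent, 1_000_003, noise=0.2, rng=rng)
recovered_exponent = read_exponent(trace)
```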

Machine Learning – a game changer for SCA

SCA is not a new discipline; it has been practiced since the late 1990s, but it has grown more powerful in recent years. One prominent reason for this development is machine learning (ML). The ease of training powerful models that learn the correlation between side-channel leakage and data has increased both the number and the success rate of attacks. While it might not seem obvious at first, SCA has a lot in common with image classification, a field in which machine learning algorithms are very successful. Certain aspects, such as being able to use multiple traces for the same sensitive data, make SCA even more suitable for ML classification. Hence, ML-enhanced SCA does not have to build algorithms from scratch but can reuse algorithms that excel at identifying shapes and patterns in images.
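The sketch below is a deliberately small stand-in for an ML-enhanced, profiled attack. Instead of a deep neural network, it learns a linear leakage model (leakage versus Hamming weight) from a clone device whose data is known, then scores every key guess on the target by the Gaussian log-likelihood summed over many traces. The device, noise level and trace counts are assumptions for illustration; summing evidence across traces mirrors the point above about using multiple traces for the same sensitive data.

```python
import random


def hw(x: int) -> int:
    """Hamming weight (number of set bits)."""
    return bin(x).count("1")


def device_leak(p: int, key: int, noise: float, rng: random.Random) -> float:
    """Hypothetical device: one leakage sample equal to HW(p XOR key) plus Gaussian noise."""
    return hw(p ^ key) + rng.gauss(0, noise)


def profile(samples):
    """Profiling on a clone device where the data is known: fit leak ~ a*HW + b by
    least squares and estimate the residual noise variance."""
    xs = [h for h, _ in samples]
    ys = [y for _, y in samples]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    b = my - a * mx
    var = sum((y - (a * x + b)) ** 2 for x, y in zip(xs, ys)) / (n - 2)
    return a, b, var


def attack(model, inputs, leaks) -> int:
    """Rank all 256 key guesses by the Gaussian log-likelihood summed over every
    attack trace; combining many traces is what makes a noisy model usable."""
    a, b, var = model

    def log_likelihood(k: int) -> float:
        # Constant terms are dropped because they are identical for every guess.
        return -sum((y - (a * hw(p ^ k) + b)) ** 2 for p, y in zip(inputs, leaks)) / (2 * var)

    return max(range(256), key=log_likelihood)


rng = random.Random(3)
# Profiling phase: random data and keys, all known to the attacker.
training = []
for _ in range(2000):
    p, k = rng.randrange(256), rng.randrange(256)
    training.append((hw(p ^ k), device_leak(p, k, 1.0, rng)))
model = profile(training)

# Attack phase: the target's key is constant, the inputs vary and are known.
secret_key = 0xC5
inputs = [rng.randrange(256) for _ in range(100)]
leaks = [device_leak(p, secret_key, 1.0, rng) for p in inputs]
best_guess = attack(model, inputs, leaks)
```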

Many attacks exploiting physical side-channels aim to break cryptographic algorithms. This is no surprise, since most, if not all, devices depend on cryptographic algorithms to securely transfer and store confidential data. If the key used to encrypt this data can be extracted, any data encrypted with this key can be exposed.

Before we continue, it should be mentioned that ML-enhanced SCA is not only used for malicious purposes – it can also be used to perform external health checks on devices. By using a monitor that learns the expected instruction patterns and their corresponding side-channel emissions, it is possible to establish a baseline that can be used to detect anomalies. If a device is infected by malware, the resulting change in side-channel emissions can be detected by the external monitor. Since the monitor is not part of the device, the malware cannot affect the monitor directly, and since the attack is often intended to make the device behave differently, it is very difficult for an attacker to remain undetected. SCA monitoring can also be used to detect non-malicious malfunctions within a device.
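A minimal sketch of such a monitor, assuming a simulated device whose normal workload produces a known power pattern (the `PATTERN` values and the injected malware activity are hypothetical): the monitor learns a per-sample mean and standard deviation from known-good traces and flags any trace whose largest z-score exceeds a threshold. A real monitor would more likely use a learned model than this simple statistical baseline.

```python
import random
import statistics

# Hypothetical expected power level for each step of the device's normal workload.
PATTERN = [1.0, 1.0, 3.0, 2.0, 2.0, 1.0, 4.0, 1.0]


def run_device(rng: random.Random, infected: bool = False) -> list:
    """Simulated trace of one workload run; malware adds extra activity at steps 4 and 5."""
    trace = [level + rng.gauss(0, 0.1) for level in PATTERN]
    if infected:
        trace[4] += 0.8
        trace[5] += 0.8
    return trace


class Monitor:
    """External monitor: learns a per-sample baseline from known-good traces."""

    def __init__(self, baseline_traces, threshold: float = 5.0):
        columns = list(zip(*baseline_traces))
        self.mean = [statistics.fmean(c) for c in columns]
        self.std = [statistics.stdev(c) for c in columns]
        self.threshold = threshold

    def score(self, trace) -> float:
        """Largest absolute z-score of any sample compared with the baseline."""
        return max(abs(x - m) / s for x, m, s in zip(trace, self.mean, self.std))

    def is_anomalous(self, trace) -> bool:
        return self.score(trace) > self.threshold


rng = random.Random(11)
monitor = Monitor([run_device(rng) for _ in range(200)])
normal_flags = [monitor.is_anomalous(run_device(rng)) for _ in range(20)]
infected_flags = [monitor.is_anomalous(run_device(rng, infected=True)) for _ in range(20)]
```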

We will return to using SCA for good later; first, let us look in more detail at how SCA is used for malicious purposes.

A growing attack vector

While classic SCA requires physical access to the device under attack, new approaches also enable the attacker to perform SCA remotely. In the paper "Far field EM side-channel attack on AES using deep learning" (Wang et al.), a machine-learning-based attack extracted a cryptographic key from a distance of up to fifteen meters by using an antenna to register far-field emissions, whose signal strength was affected by the processing within the device. In another paper (Lipp et al., PLATYPUS: Software-based power side-channel attacks on x86, 2021), unprivileged malicious software exploited power management capabilities within the device to measure the side-channel, allowing the attacker to be on the other side of the earth.

In the last five years, we have seen an explosion of transient and microarchitectural side-channel attacks that are also exploitable via software. This shows the magnitude of the problem, and that physical protection, such as secure facilities with gates and guards, is not sufficient to protect against SCA. Needless to say, SCA attacks and countermeasures against them are hot topics.

The problem with SCA is amplified in a future scenario where computational resources are distributed, and where companies, and perhaps even individual users, rent out unused computational power for payment. From an economic and environmental perspective, this is very positive, as it allows idle computing power to be used on demand and lets users offload computations to nearby computing resources. From a security perspective, however, it reduces the user's control over where the data is processed and opens up new attack vectors, especially if the hardware owner decides to eavesdrop.

A device group that may become even more important in this future scenario is reconfigurable hardware, which includes Field Programmable Gate Arrays (FPGAs). Put briefly, these devices contain programmable logic that can be freely configured to act as, for example, an accelerator or a processing pipeline that the user needs.

Our work – side-channel protections in an evolving threat landscape

Together with KTH Royal Institute of Technology, Stockholm, Sweden, we run the research project “SURE: Securing Reconfigurable Hardware in the Era of AI”, financed by Vinnova, the Swedish innovation agency. The research focuses on current and future possibilities and threats arising from the analysis of physical side-channels.

In this project, we research solutions to tomorrow’s side-channel leakage problems, in a future where we believe offloading of processing will be commonplace. Process offloading often means that someone else owns the hardware running your software. While a lot of research goes into protecting data from an eavesdropping hardware owner by using encryption and protected enclaves, less attention is paid to side-channels. If the hardware owner is able to monitor the physical side-channel, which is a reasonable assumption, the future requires a holistic approach to security that includes protection against unwanted side-channel leakage.

One key requirement for extracting information from side-channel leakage is a sufficient signal-to-noise ratio (SNR), in other words, how much of the observed signal relates to the process and/or data of interest compared to the noise generated by the device, for example by other processes running simultaneously. There are two different strategies for using noise to create an environment that is hostile to SCA:

- Drown the signal entirely in noise, meaning the noise is so prominent that the data-dependent signal is lost in the randomness.

- Use algorithmic noise. Algorithmic noise is deterministic noise generated from a state or a secret, meaning that a specific secret always produces the same specific noise. Algorithmic noise generally requires less relative power than random noise to achieve the same level of protection. An attacker who can measure the side-channel leakage for the same secret repeatedly can eventually filter out moderate random noise, for example by averaging, while algorithmic noise is much more difficult to filter out. We consider algorithmic noise to be an important building block for protecting against side-channel attacks.
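The following simulation sketches both ideas under stated assumptions. It estimates the SNR with respect to the attacker's Hamming-weight model, SNR = Var(E[L | X]) / E[Var(L | X)], after the attacker averages repeated measurements of the same secret. Random noise is Gaussian; algorithmic noise is derived deterministically from the secret with HMAC-SHA256 under a hypothetical device key (`NOISE_KEY`), standing in for whatever deterministic generator real hardware would use. The amplitudes are illustrative; here the algorithmic noise has roughly a third of the random noise's power.

```python
import hashlib
import hmac
import random
import statistics
from collections import defaultdict

NOISE_KEY = b"hypothetical-device-noise-key"  # assumed device-internal noise key


def hw(x: int) -> int:
    """Hamming weight: the attacker's leakage model."""
    return bin(x).count("1")


def algorithmic_noise(x: int, amplitude: float = 2.0) -> float:
    """Deterministic noise derived from the secret byte via HMAC-SHA256: the same
    secret always yields the same value in [-amplitude, amplitude]."""
    digest = hmac.new(NOISE_KEY, bytes([x]), hashlib.sha256).digest()
    return amplitude * (2 * digest[0] / 255 - 1)


def averaged_leak(x: int, mode: str, repetitions: int, rng: random.Random) -> float:
    """The attacker repeats the same operation on secret byte x and averages."""
    total = 0.0
    for _ in range(repetitions):
        noise = rng.gauss(0, 2.0) if mode == "random" else algorithmic_noise(x)
        total += hw(x) + noise
    return total / repetitions


def snr(labels, leaks) -> float:
    """SNR = Var(E[L | X]) / E[Var(L | X)], with X the attacker's model value."""
    groups = defaultdict(list)
    for label, leak in zip(labels, leaks):
        groups[label].append(leak)
    mean = {label: statistics.fmean(v) for label, v in groups.items()}
    signal = statistics.pvariance([mean[label] for label in labels])
    noise = statistics.fmean([(leak - mean[label]) ** 2 for label, leak in zip(labels, leaks)])
    return signal / noise


def attacker_snr(mode: str, repetitions: int, rng: random.Random) -> float:
    """SNR over all 256 secret byte values after averaging `repetitions` measurements."""
    secret_values = range(256)
    leaks = [averaged_leak(x, mode, repetitions, rng) for x in secret_values]
    return snr([hw(x) for x in secret_values], leaks)


rng = random.Random(5)
results = {(mode, m): attacker_snr(mode, m, rng)
           for mode in ("random", "algorithmic") for m in (1, 100)}
```

Averaging 100 measurements raises the SNR under random noise by roughly two orders of magnitude, while the SNR under algorithmic noise does not change, because every repetition reproduces exactly the same noise.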

However, deciding where to apply countermeasures against side-channel leakage is currently a task that in most cases falls on the software developer. While some best practices against side-channel leakage exist, we believe that software developers should not have to bear the entire burden of ensuring that their secrets are not exposed during program execution. Together with KTH, we are researching solutions that instead let each party involved in the process contribute its own expertise.

Countermeasures – from sole responsibility to collaboration

In a scenario where a user rents hardware to offload computations, for example, the user generally does not want to trust the hardware owner with the data. Encryption and secure enclave solutions ensure this to a certain extent, but they do not prevent the hardware owner from observing the side-channel leakage. We are researching the possibility of building software-activated countermeasures into the device’s processing units, such as CPUs. If certain parts of a software binary handle sensitive data, the programmer could indicate which parts should be protected against side-channel leakage, and the device would automatically add algorithmic noise when these instructions are executed. One method of adding noise is to simultaneously activate other execution elements that operate on data uncorrelated with the sensitive data. With algorithmic noise, it becomes very difficult for an attacker to separate the noise from the side-channel emissions originating from the sensitive data being processed.

To reduce the impact on power consumption and on the throughput of other processes, this countermeasure should only be activated when necessary. We consider the software developer the ideal candidate to identify the sections of the software that need to be protected. Developers may not have expertise in creating side-channel countermeasures, but they know where and when the sensitive data is handled. By moving the responsibility for deploying the actual countermeasures to the hardware designer or vendor, who understands the leakage of the hardware components, we create the optimal situation, where each actor in the process contributes its own expertise.
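This division of labor can be sketched as a toy simulation. `SimulatedCore`, its `sensitive()` marker and the spare-unit noise are hypothetical: the developer only brackets the key-dependent code, and the simulated hardware decides how to add algorithmic noise, here by running spare execution units on pseudo-random data from a seeded generator. Python's `random.Random` is not cryptographically secure and only stands in for a hardware noise source. The `extra_ops` counter illustrates why protection is limited to marked sections.

```python
import random
from contextlib import contextmanager


class SimulatedCore:
    """Hypothetical CPU model: each executed instruction leaks HW(operand).
    While a developer-marked region is active, spare execution units also run
    on pseudo-random data that is uncorrelated with the sensitive operand."""

    def __init__(self, noise_seed: int, spare_units: int = 3):
        self._noise_rng = random.Random(noise_seed)  # stand-in for a hardware noise source
        self.spare_units = spare_units
        self.protected = False
        self.trace = []     # one simulated leakage value per instruction
        self.extra_ops = 0  # rough proxy for the power/throughput cost of protection

    @contextmanager
    def sensitive(self):
        """The developer's marker: protection is switched on only inside this block."""
        self.protected = True
        try:
            yield self
        finally:
            self.protected = False

    def execute(self, operand: int) -> None:
        leak = bin(operand & 0xFF).count("1")
        if self.protected:
            for _ in range(self.spare_units):
                leak += bin(self._noise_rng.randrange(256)).count("1")
                self.extra_ops += 1
        self.trace.append(leak)


core = SimulatedCore(noise_seed=1234)
core.execute(0x0F)             # public data: no protection, no extra cost
with core.sensitive():
    core.execute(0xA5 ^ 0x3C)  # key-dependent operation: noise added
core.execute(0x01)
```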

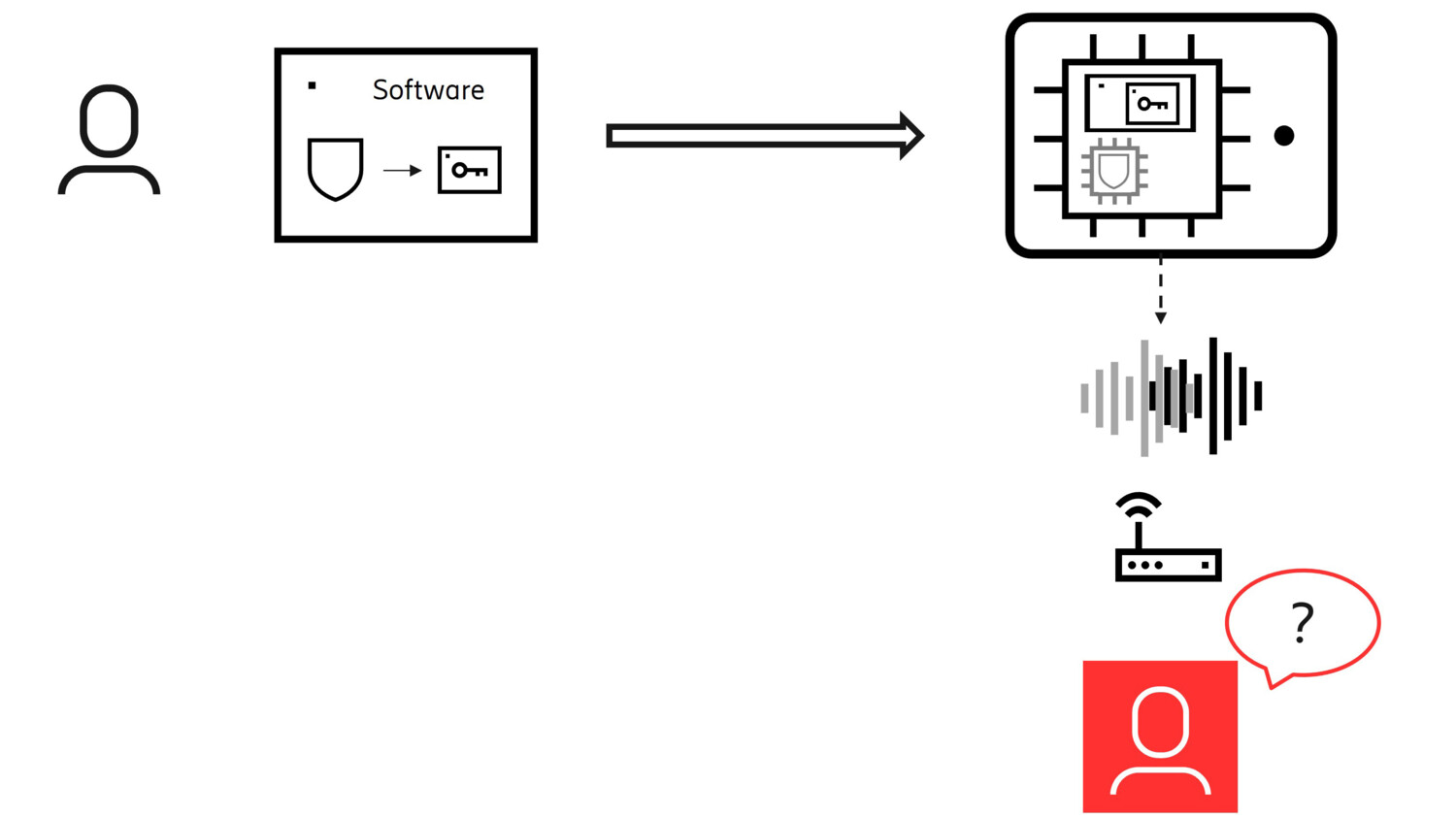

Short summary of the concept is illustrated below.

Figure 3 - The concept of collaborative side-channel countermeasures.

As shown in Figure 3:

- The software developer marks sensitive sections in the code.

- When the software binary is executed on the hardware, a countermeasure circuit (marked in grey) in the hardware protects the sensitive sections by adding noise.

- The eavesdropper cannot differentiate between the real side-channel leakage and the added noise.

As mentioned previously, FPGAs are likely to become important in a future compute-offload scenario. In such a scenario, the leakage of the hardware design is not known beforehand. It is therefore important that the programmer can make FPGA configurations, also known as bitstreams, resistant to SCA. We suggest an iterative process where the tool chain measures the theoretical leakage of the FPGA configuration while it is being built from code into a hardware layout. Just as in our software example above, the programmer of the bitstream is the best candidate to identify which signals and modules in the configuration are sensitive and should be protected. Moreover, since testing for side-channel leakage becomes more computationally expensive as the build process progresses, it often becomes infeasible to test entire designs for side-channel leakage at later stages. By identifying the sensitive signals and modules that need such testing, while letting less sensitive modules skip the later testing stages, the build process can be sped up without sacrificing security.
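As a sketch of the gating step, the code below runs a TVLA-style fixed-versus-random Welch t-test, a common leakage-assessment method, only on modules that the bitstream programmer has tagged as sensitive. The module names, the leakage simulator and the trace count are hypothetical; a real tool chain would presumably estimate leakage from the netlist or layout rather than from simulated Hamming weights. The |t| > 4.5 threshold is the value commonly used in TVLA.

```python
import math
import random
import statistics

TVLA_THRESHOLD = 4.5  # |t| threshold commonly used in TVLA-style assessments


def welch_t(a, b) -> float:
    """Welch's t-statistic for two samples with possibly unequal variances."""
    va, vb = statistics.variance(a), statistics.variance(b)
    return (statistics.fmean(a) - statistics.fmean(b)) / math.sqrt(va / len(a) + vb / len(b))


def run_leakage_tests(modules, simulate, n=2000):
    """modules maps a module name to the programmer's 'sensitive' tag.
    Only tagged modules get the expensive fixed-vs-random test; others skip it."""
    report = {}
    for name, sensitive in modules.items():
        if not sensitive:
            report[name] = "skipped"
            continue
        fixed = [simulate(name, fixed=True) for _ in range(n)]
        rand = [simulate(name, fixed=False) for _ in range(n)]
        report[name] = "LEAKS" if abs(welch_t(fixed, rand)) > TVLA_THRESHOLD else "pass"
    return report


rng = random.Random(42)


def simulate(name: str, fixed: bool) -> float:
    """Hypothetical leakage of one module execution: HW of the processed value plus noise.
    The masked variant XORs a fresh random mask, hiding the input's Hamming weight."""
    x = 0xFF if fixed else rng.randrange(256)
    if name == "sbox_masked":
        x ^= rng.randrange(256)
    return bin(x).count("1") + rng.gauss(0, 1.0)


modules = {"sbox_unmasked": True, "sbox_masked": True, "uart_ctrl": False}
report = run_leakage_tests(modules, simulate)
```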

While the creator of the bitstream knows what is sensitive, another party, namely the tool chain provider, may know how best to protect sensitive constructions within the bitstream. So, by once again letting different roles contribute their strengths, we can protect the end result against side-channel leakage. This process can be combined with other protection methods suggested for reconfigurable logic, such as FPGA enclaves, which rely on the implicit mutual trust that both the user and the hardware owner place in the hardware vendor to ensure that the bitstream is never disclosed to the hardware owner.

Further strengthening the countermeasures

While we believe that shifting the responsibility for applying countermeasures against side-channel analysis, and letting each party contribute its expertise, is a very important step, there are two factors that we consider important for strengthening the actual countermeasures implemented in the solutions described above.

- First, we see that machine learning may play an important part in making the countermeasures robust. Since many of today’s side-channel attacks use machine learning, we see an opportunity to use machine learning for defense as well. By identifying where attacking algorithms find leakage, we can distort the side-channel leakage at precisely the right moments, rather than using an “always protect” approach.

- Second, an attacker who is able to acquire a device identical to the real target can build a model of how the side-channel leakage correlates with the processes or data within that device type. By doing so, the attacker significantly lowers the complexity of attacking the target device. Hence, much can be gained by making the countermeasures device-unique. By using a secret device-unique parameter, such as a random number written into one-time programmable memory or the output of a Physically Unclonable Function (PUF), the side-channel leakage produced may look entirely different on two otherwise identical devices.
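The following toy example illustrates why device-unique countermeasures help against the clone-device attacker described in the second point. Each hypothetical `Device` keys its noise on a unique secret, standing in for a one-time programmable value or a PUF output, using HMAC-SHA256. A leakage profile built on the attacker's own copy maps leakage values back to the right data on that copy but mostly fails on the target. Leakage is noiseless here to isolate the effect.

```python
import hashlib
import hmac


def hw(x: int) -> int:
    """Hamming weight (number of set bits)."""
    return bin(x).count("1")


class Device:
    """Hypothetical device whose countermeasure is keyed by a device-unique secret
    (standing in for a one-time programmable value or a PUF response)."""

    def __init__(self, unique_secret: bytes):
        self._secret = unique_secret

    def leak(self, data: int) -> int:
        tag = hmac.new(self._secret, bytes([data]), hashlib.sha256).digest()
        # The keyed tag decides how many (0 to 7) noise sources run and on which data.
        return hw(data) + sum(hw(b) for b in tag[1:1 + tag[0] % 8])


def build_profile(device: Device) -> dict:
    """Attacker's model from a device they own: data value -> observed leakage."""
    return {d: device.leak(d) for d in range(256)}


def candidates(profile: dict, observed: int) -> list:
    """All data values whose profiled leakage matches the observation."""
    return [d for d, leak in profile.items() if leak == observed]


clone = Device(bytes.fromhex("11" * 16))   # the attacker's own copy
target = Device(bytes.fromhex("22" * 16))  # same model, different unique secret
profile = build_profile(clone)
hits_clone = sum(d in candidates(profile, clone.leak(d)) for d in range(256))
hits_target = sum(d in candidates(profile, target.leak(d)) for d in range(256))
```

On the clone, the candidate list always contains the true value; on the target, it usually does not, so a model built on one device cannot simply be transferred to another.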

SCA for good

As promised, we now return to how side-channels can be used for good and, especially, how to secure solutions where side-channel monitoring is desired. As described earlier, in some scenarios it is valuable to be able to externally determine the health and state of a device by observing the processes being performed in it. A monitor may be deployed to perform this task. However, this health and state information is available to anyone who is monitoring the side-channel, which might not be desirable. For this purpose, we have developed a solution where the device adds algorithmic noise based on a secret and a number used once (nonce) shared between the device and the monitor. As the authorized monitor knows the secret and the nonce, it can predict the algorithmic noise and filter it out. This makes it possible to disguise the state of the device from unauthorized monitors while still enabling an authorized monitor to perform health checks.
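The shared-secret idea can be sketched as follows, assuming integer-valued leakage samples, HMAC-SHA256 as the noise generator and a fresh random nonce per monitoring session; these choices are illustrative and not a description of the actual solution. The device adds keyed noise to its activity, and an authorized monitor that knows the secret and nonce regenerates the noise and subtracts it. In a real measurement, physical noise would remain after the subtraction.

```python
import hashlib
import hmac
import os


def noise_stream(secret: bytes, nonce: bytes, length: int, amplitude: int = 16) -> list:
    """Keyed pseudo-random noise: sample t is derived from HMAC-SHA256(secret, nonce || t)."""
    noise = []
    for t in range(length):
        digest = hmac.new(secret, nonce + t.to_bytes(4, "big"), hashlib.sha256).digest()
        noise.append(digest[0] % amplitude)
    return noise


def device_emit(activity: list, secret: bytes, nonce: bytes) -> list:
    """What any observer measures: the device's real activity plus keyed noise."""
    return [a + n for a, n in zip(activity, noise_stream(secret, nonce, len(activity)))]


def authorized_view(trace: list, secret: bytes, nonce: bytes) -> list:
    """An authorized monitor regenerates the same noise and subtracts it."""
    return [y - n for y, n in zip(trace, noise_stream(secret, nonce, len(trace)))]


shared_secret = os.urandom(32)  # provisioned to both the device and the monitor
nonce = os.urandom(12)          # fresh for each monitoring session
activity = [3, 3, 5, 1, 4, 4, 2, 6, 3, 3]  # hypothetical health-state signature
trace = device_emit(activity, shared_secret, nonce)
```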

By developing side-channel solutions for future security challenges, we at Ericsson strive to have solutions ready for a new threat landscape before it emerges.

Learn more

- For more information regarding security and reconfigurable hardware, read our paper Secure acceleration on cloud-based FPGAs – FPGA enclaves

- For more information on side-channels and the current state of the art, take a look at the papers from Elena Dubrova and her team at KTH Royal Institute of Technology.

- Learn more about our research on Future network security

At the Ericsson Blog, we provide insight to make complex ideas on technology, innovation and business simple.