Unlocking CPaaS and network APIs for AI agents: Vonage and MCP

- Ericsson Research and Vonage show how the Model Context Protocol (MCP) connects AI agents with CPaaS and 5G APIs, enabling smarter, agent-driven communications.

AI agents are changing the way we build and interact with software. Instead of coding integrations line by line or digging through API docs, you can simply ask an agent to “send a message” or “find my device,” and it will figure out how to get the job done by making the right API calls in the background.

For this to work, agents need a standard way to discover and interact with APIs. That’s where the Model Context Protocol (MCP) comes in. MCP is a new open standard that connects AI systems to external services, giving them a consistent and secure way to use tools dynamically.

At Ericsson Research, together with Vonage, we’ve been experimenting with how MCP can make both network APIs (like Device Location and SIM Swap) and CPaaS APIs (like SMS, Voice, and Verify) available to AI agents. This means agents can orchestrate communication and network functions directly, creating practical paths for communication service providers (CSPs) to monetize 5G.

Making APIs agent-ready: AI agents and MCP

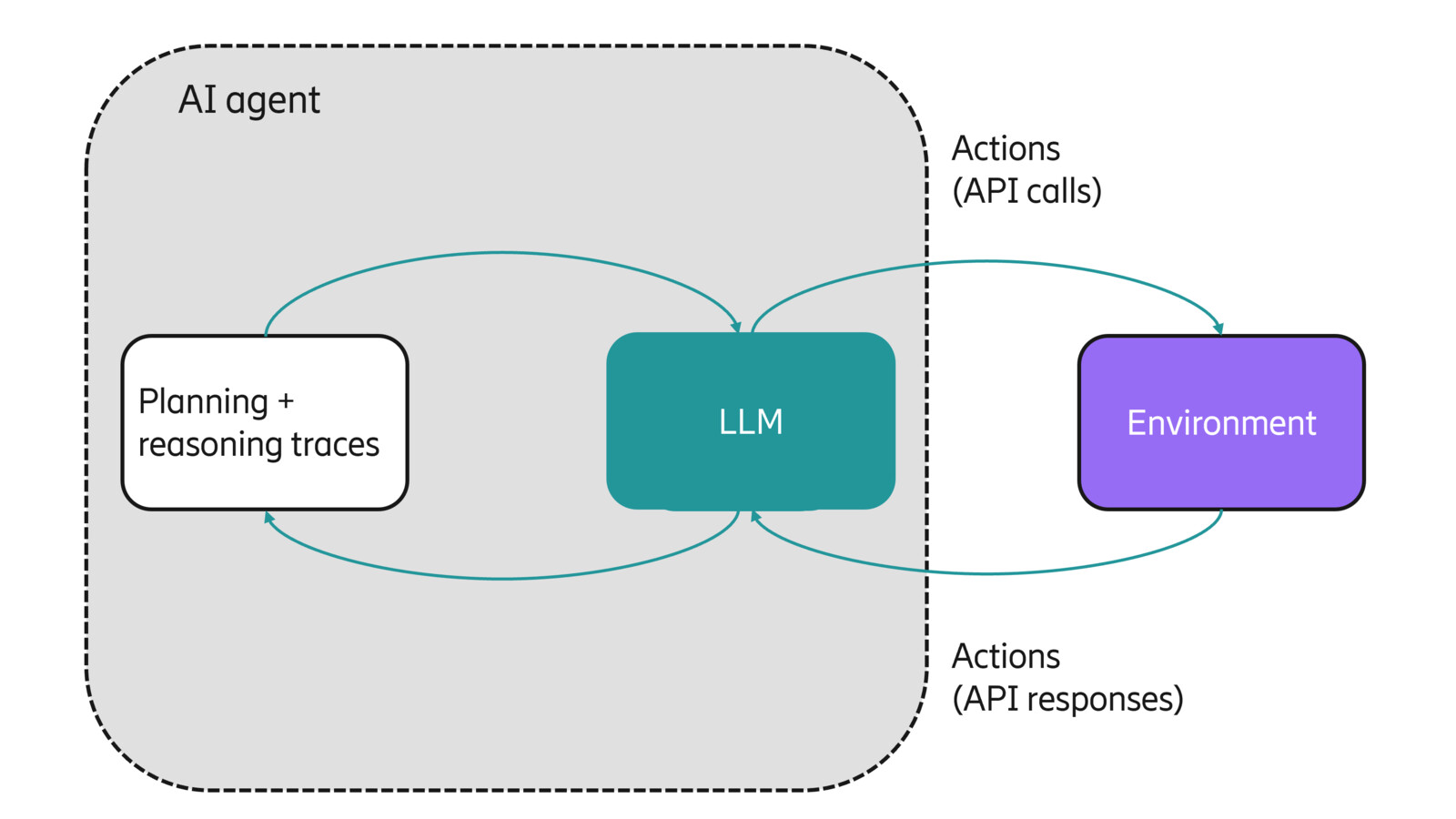

An agent is an LLM connected to external tools, running them in a bounded loop where outputs are fed back into the model. Its purpose is to achieve a defined goal, with the loop ending once the goal (or stopping condition) is reached. In practice, this means the model can call functions that wrap APIs, look at the result, and then decide the next action.

One common approach is the ReAct framework (Yao et al., 2022), where an agent solves a task through multiple rounds of reasoning and action. The reasoning step decides which tool to use, and the action step executes it, with the loop continuing until the task is complete.

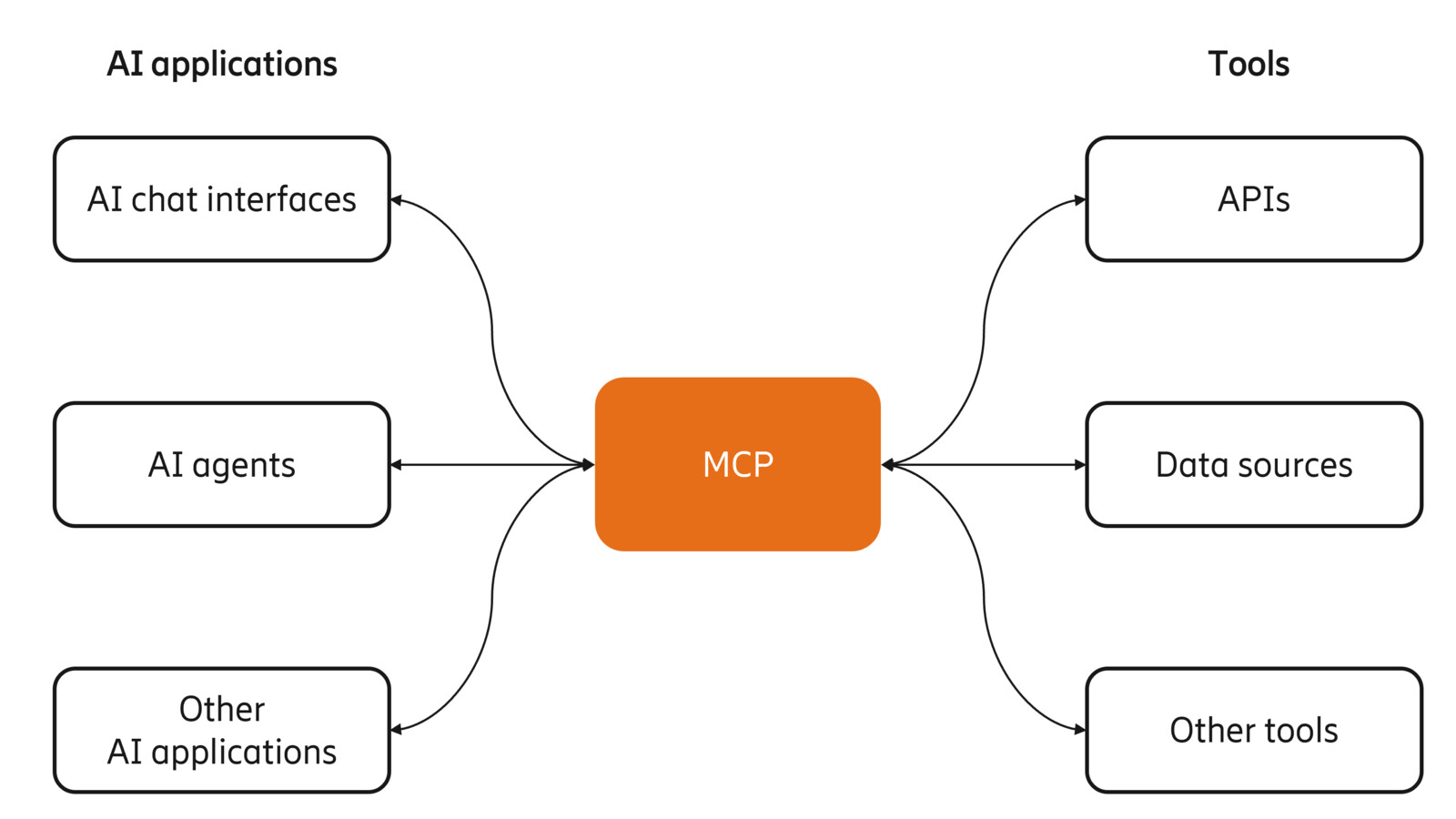
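The reason-and-act loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `scripted_model` is a hypothetical stand-in for the LLM's reasoning step, and `lookup_contact` and `send_message` are made-up tools that return canned data rather than calling any real API.

```python
from typing import Callable

# Hypothetical tools; a real agent would call external APIs here.
def lookup_contact(name: str) -> str:
    contacts = {"alice": "+15550100"}
    return contacts.get(name.lower(), "unknown")

def send_message(number: str) -> str:
    return f"message queued for {number}"

TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_contact": lookup_contact,
    "send_message": send_message,
}

def scripted_model(goal: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for the LLM reasoning step (an assumption for this sketch).

    Returns (tool_name, argument), or ("finish", answer) once the goal is met.
    """
    if not observations:
        return ("lookup_contact", goal)
    if len(observations) == 1:
        return ("send_message", observations[0])
    return ("finish", observations[-1])

def run_agent(goal: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):  # bounded loop: never runs forever
        action, argument = scripted_model(goal, observations)  # reason
        if action == "finish":
            return argument
        # Act, then feed the tool output back into the next reasoning step.
        observations.append(TOOLS[action](argument))
    return "stopped: step limit reached"
```

Calling `run_agent("Alice")` looks up the contact, sends the message, and then finishes; lowering `max_steps` shows the stopping condition taking over before the goal is reached.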

But agents need to access a large variety of tools, databases, filesystems and many other interfaces that were not designed for AI agents to consume. The Model Context Protocol (MCP) was created to close this gap, and it has become a widely popular standard for exposing data and tools to LLMs.

You can think of MCP as an extension for your APIs, designed to be consumed by LLMs. With MCP, tools are exposed in a way that lets AI agents understand their purpose dynamically, without needing custom prompt engineering, manual integration, or hardcoded function schemas.

MCP lets a server expose capabilities (for example, database queries, file access, function calls, and API calls) as "tools" with defined input/output schemas. With little or no prior knowledge, an AI agent can then pick which tool to use, invoke it, get a result, and integrate that result into its ongoing reasoning loop.
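To make the discovery and invocation steps concrete, here is a minimal in-process sketch of the two MCP requests involved, `tools/list` and `tools/call`, using the protocol's JSON-RPC 2.0 message shape. The `get_device_location` tool, its schema, and the coordinates it returns are hypothetical placeholders, and a real server would use an MCP SDK and a transport rather than a bare function.

```python
import json

# Tool descriptors: a description plus a JSON Schema for the input.
# The tool itself is hypothetical and exists only for this sketch.
TOOLS = {
    "get_device_location": {
        "description": "Return the last known location of a device.",
        "inputSchema": {
            "type": "object",
            "properties": {"phone_number": {"type": "string"}},
            "required": ["phone_number"],
        },
    }
}

def get_device_location(phone_number: str) -> dict:
    # Placeholder data; a real tool would call a network API here.
    return {"phone_number": phone_number, "latitude": 59.4, "longitude": 17.95}

HANDLERS = {"get_device_location": get_device_location}

def handle(request: dict) -> dict:
    """Answer a single JSON-RPC request for tool discovery or invocation."""
    method = request["method"]
    if method == "tools/list":
        # Discovery: the agent learns names, descriptions and schemas at runtime.
        result = {"tools": [{"name": name, **spec} for name, spec in TOOLS.items()]}
    elif method == "tools/call":
        params = request["params"]
        output = HANDLERS[params["name"]](**params["arguments"])
        result = {
            "content": [{"type": "text", "text": json.dumps(output)}],
            "isError": False,
        }
    else:
        return {
            "jsonrpc": "2.0",
            "id": request["id"],
            "error": {"code": -32601, "message": "Method not found"},
        }
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}
```

An agent would first send `{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}` to see what is available, then issue a `tools/call` request with the chosen tool name and arguments.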

Agents can also switch between tools from different MCP servers as needed, making them more robust. For example, given a user, the agent may use a database to find the contact information and then attempt to send an SMS, but if that fails, it can automatically switch to another message provider or to email if the right tool connectors are available. This intelligent orchestration is what makes MCP more than just a wrapper for APIs; it’s a framework for building adaptive, AI-native workflows.
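The fallback behavior in that example can be sketched as follows. Note the simplification: in a real agent the LLM decides which tool to try next, whereas this sketch assumes a fixed preference order. All three tools and the `ToolError` exception are hypothetical, and the primary provider is hardwired to fail so the fallback path is visible.

```python
from typing import Callable

class ToolError(Exception):
    """Raised by a tool when delivery fails."""

# Hypothetical tools, imagined as coming from different MCP servers.
def send_sms_primary(to: str, text: str) -> str:
    raise ToolError("primary SMS provider unavailable")  # simulated outage

def send_sms_backup(to: str, text: str) -> str:
    return f"sms:backup:{to}"

def send_email(to: str, text: str) -> str:
    return f"email:{to}"

Channel = tuple[str, Callable[[str, str], str]]

def deliver(to: str, text: str, channels: list[Channel]) -> tuple[str, list[str]]:
    """Try each channel in order; return the first success and the failures so far."""
    failures: list[str] = []
    for name, tool in channels:
        try:
            return tool(to, text), failures
        except ToolError as exc:
            # Record the failure so the agent can reason about it, then move on.
            failures.append(f"{name}: {exc}")
    raise ToolError("; ".join(failures))
```

Keeping the list of failures alongside the result mirrors how tool errors are fed back to the agent, so it can explain what happened or choose a different route.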

Turning APIs into agent tools

As part of this collaboration, Ericsson Research built a proof-of-concept MCP server that exposed Vonage’s APIs as tools. These included SMS, Number Insight, Verify, and Device Location, along with utility tools like contact lookup and web search.

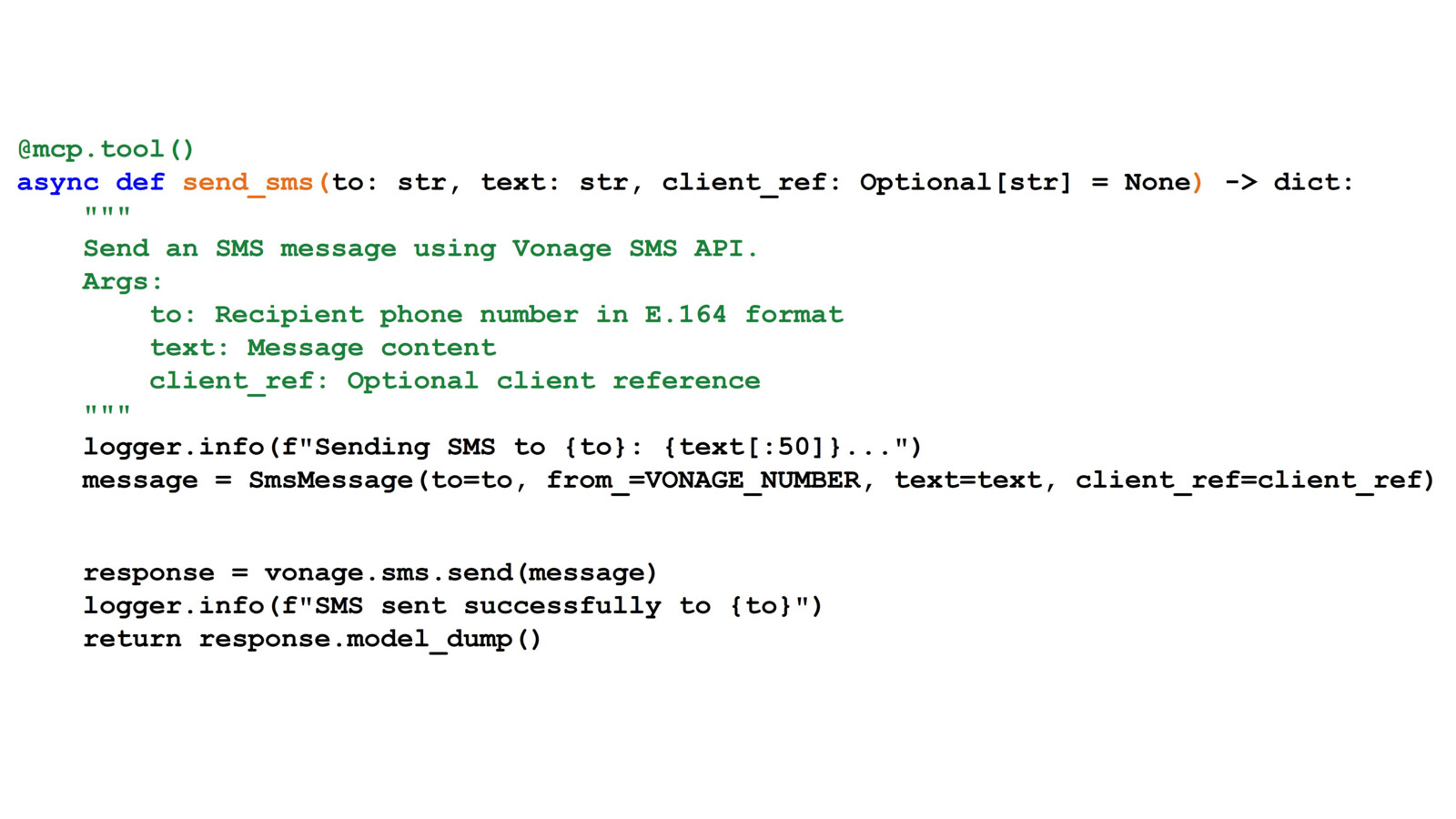

The idea is simple: instead of coding each interaction manually, you register an API call as a tool in the MCP server. For instance, we wrapped Vonage’s Python SDK SMS call as a tool named send_sms. From the agent’s perspective, it just sees “send_sms” available. When it decides to use it, the server executes the underlying Vonage API request and returns the result.

This is what it looks like:
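As an illustrative sketch only (not the code used in the proof of concept), registering an SMS call as a tool named `send_sms` might look like the following. The `tool` decorator and `REGISTRY` are hypothetical stand-ins for what an MCP server SDK provides, and `FakeSmsClient` replaces the Vonage SDK client so the example runs without credentials; its `send` method and response fields are assumptions, not the SDK's actual API.

```python
import inspect
from typing import Callable

# Hypothetical registry standing in for an MCP server's tool table.
REGISTRY: dict[str, dict] = {}

def tool(func: Callable) -> Callable:
    """Register a function as a tool.

    The function name, docstring and parameter names become the
    description that the agent sees.
    """
    REGISTRY[func.__name__] = {
        "description": inspect.getdoc(func),
        "parameters": list(inspect.signature(func).parameters),
        "call": func,
    }
    return func

class FakeSmsClient:
    """Stand-in for an SMS SDK client (assumed interface, no network access)."""

    def send(self, sender: str, to: str, text: str) -> dict:
        return {"status": "0", "to": to, "from": sender, "text": text}

client = FakeSmsClient()

@tool
def send_sms(to: str, text: str) -> str:
    """Send an SMS text message to a phone number in E.164 format."""
    response = client.send(sender="DemoApp", to=to, text=text)
    # Return a short, human-readable result for the agent to reason over.
    if response["status"] == "0":
        return f"SMS sent to {to}"
    return f"SMS to {to} failed"
```

The docstring does real work here: it is the only explanation of the tool that the agent receives, so it should state plainly what the tool does and what its arguments mean.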

This makes things much simpler for the agent. It doesn’t need to know the API schema or logic; it just knows that “send_sms” is a way to send a message.

We also wanted to show how flexible this approach could be. In one demo (see the video above), when a user asked about device location, the agent didn’t just return raw coordinates. It automatically generated a map snippet using the location data, creating a richer output. This points toward new kinds of interfaces, where agents can combine different tools and present results in ways that feel natural for the context.
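A rough sketch of that enrichment step, assuming the location tool returns a dictionary with `latitude` and `longitude` keys: the helper below turns the coordinates into a map link. The choice of OpenStreetMap and the function names are assumptions for illustration; the post does not say how the demo rendered its map.

```python
from urllib.parse import urlencode

def map_link(latitude: float, longitude: float, zoom: int = 15) -> str:
    """Build an OpenStreetMap URL with a marker at the given coordinates."""
    lat, lon = f"{latitude:.5f}", f"{longitude:.5f}"
    query = urlencode({"mlat": lat, "mlon": lon})
    return f"https://www.openstreetmap.org/?{query}#map={zoom}/{lat}/{lon}"

def render_location(result: dict) -> str:
    """Turn raw tool output into a short answer with a clickable map link."""
    link = map_link(result["latitude"], result["longitude"])
    return f"The device was last seen here: [open map]({link})"
```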

The real power comes when agents chain tools together. Agents can adapt workflows depending on context, choosing the right combination of APIs at runtime rather than following a hardcoded path. The demo also hints at a significant shift in how we might build communication services and user experiences in the future.

Try MCP today, stay up to date

Vonage’s goal has always been to make communications easier for developers to use, wherever they need them. With AI agents becoming more common, it makes sense for APIs such as Voice, Messaging, Video, and Network APIs to be available directly inside those environments.

For Vonage, MCP lowers the barrier for developers by making CPaaS APIs discoverable and usable without extra integration work. For Ericsson, it shows how network APIs can be made accessible to AI systems, opening a path for CSPs to monetize 5G in new ways. Together, it’s about bringing enterprise APIs and telco-grade APIs into the same agent ecosystem.

To help developers start experimenting, Vonage has launched its first MCP server: the Vonage Documentation Server, which lets agents search and retrieve information directly from the official docs.

This will soon be followed by a Tooling MCP Server, which will go further by exposing live API functions. Until then, you can explore the possibilities using community-hosted servers like the Telephony MCP Server and read the blog post by Atique Khan.

Read more

- To get notified of the launch, join the Vonage Community Slack or sign up to the Vonage Developer Newsletter.

- To follow the latest from Ericsson Research, keep an eye on the Ericsson Technology Review for updates.
