HTTPS everywhere

In this blog post we describe the reasons behind the recent explosion of encrypted HTTPS traffic, why this trend is likely to continue, and the new mechanisms that service and network providers can use to cope with this challenge.

Recent revelations of pervasive surveillance have significantly changed the public’s perception of security on the Internet, especially with respect to end-user privacy. This trend complements the increased interest from online service providers in protecting their service delivery processes. Just a few years ago, unencrypted web traffic was the general rule, with HTTPS an exception used only for services like Internet banking, online shopping, or transmission of passwords. Recent measurements by Sandvine show that HTTPS now accounts for 60% of the web traffic in many networks, and the industry is quickly approaching a point where HTTPS is expected and mandated everywhere. Many sites already support HTTPS and are just waiting to turn it on by default.

The move to HTTPS means that some existing network practices, for example HTTP caching and content classification, cease to work. It also puts a lot of pressure on service providers, who have to handle traffic encryption, certificates, and key handling, as well as make mechanisms such as load balancing and advertising work with HTTPS. While HTTPS makes the delivery process more robust and improves user security and privacy, it requires more processing resources, and traffic previously handled by transparent inline caches must now be processed by the service provider.

HTTPS Everywhere

In HTTPS, HTTP is sent over a security protocol, for instance TLS or QUIC (a new encrypted transport protocol from Google that runs over UDP), so that a third party cannot read, alter, delete, insert, or replay data. The HTTP client is assured that it is speaking to an HTTP server sanctioned by the owner of the origin server, but HTTPS does not always provide end-to-end security: the client only authenticates the node that terminates TLS. A real-world example recently discussed in the IETF had eight distinct connection hops from the node terminating TLS to the origin server.
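To make the client-side assurance concrete, here is a minimal sketch using Python's standard `ssl` module. The function names `make_verifying_context` and `fetch_peer_certificate` are our own, chosen for illustration, and the TLS 1.2 minimum is an assumption of this sketch rather than something HTTPS itself requires.

```python
import socket
import ssl


def make_verifying_context() -> ssl.SSLContext:
    """Return a client-side TLS context that authenticates the server."""
    # create_default_context() loads the system trust store and, for the
    # default server-authentication purpose, turns on both certificate
    # chain validation and hostname checking.
    context = ssl.create_default_context()
    # Assumption of this sketch: refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def fetch_peer_certificate(host: str, port: int = 443, timeout: float = 5.0) -> dict:
    """Connect to host over TLS and return the validated certificate.

    Raises ssl.SSLCertVerificationError if the chain or hostname check fails.
    """
    context = make_verifying_context()
    with socket.create_connection((host, port), timeout=timeout) as raw_sock:
        # server_hostname is used for SNI and for the hostname check.
        with context.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            return tls_sock.getpeercert()
```

Note that a successful handshake here only says something about the node that terminates TLS. Whatever hops lie between that node and the origin server are invisible to the client.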

Use of the https:// resource identifier also triggers secure handling in the User Agent (UA), so that https resources are not mixed with non-https resources. This means that in order to use HTTPS, a service provider has to use HTTPS for all content, including advertisements fetched from third parties.
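As a rough illustration of what this means for a service provider, the sketch below scans a page's markup for subresources that would still be fetched over plain HTTP. It uses only Python's standard library. The names `SubresourceCollector` and `find_insecure_subresources`, the example URLs, and the short list of tags are all assumptions made for this example; real browsers apply far more detailed mixed content rules.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse

# Tags and the attribute that usually carries their URL. Deliberately
# partial: a real check would cover more elements and inspect <link rel>.
URL_ATTRIBUTES = {"img": "src", "script": "src", "iframe": "src", "link": "href"}


class SubresourceCollector(HTMLParser):
    """Collect absolute URLs of subresources that use the http scheme."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        wanted = URL_ATTRIBUTES.get(tag)
        if wanted is None:
            return
        for name, value in attrs:
            if name == wanted and value:
                # Resolve relative and scheme-relative URLs against the page.
                absolute = urljoin(self.page_url, value)
                if urlparse(absolute).scheme == "http":
                    self.insecure.append(absolute)


def find_insecure_subresources(page_url, html):
    """Return the plain-HTTP subresource URLs referenced by the markup."""
    collector = SubresourceCollector(page_url)
    collector.feed(html)
    collector.close()
    return collector.insecure
```

For a page served from `https://site.example/`, a relative reference such as `/logo.png` resolves to HTTPS and passes, while a third-party advertisement referenced as `http://ads.example/banner.png` is reported.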

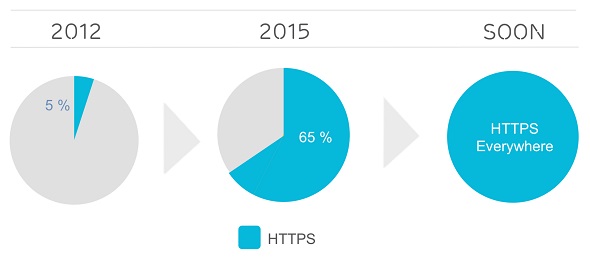

Evolution of HTTPS volume share in mobile networks.

As shown above, the trend towards HTTPS everywhere that started in 2012 has continued without interruption. The share of HTTPS is higher in mobile networks than in fixed networks because of the high use of apps. While the important drivers in standardization are security and privacy, industry usage is mostly driven by a desire to secure the delivery end-to-end, both to protect ownership of valuable analytics data and to protect against problems caused by network intermediaries such as ad injectors and application layer firewalls. There are very strong indications that the increase will continue, as suggested by the following industry trends:

- Google Search now includes HTTPS support as a ranking signal in its search ranking algorithm, and the Google Chrome browser will soon start to show warnings for non-HTTPS sites.

- Apple has announced that it will require HTTPS for iOS and tvOS applications in the future.

- While the HTTP/2 standard allows HTTP/2 without TLS, all major browser vendors (Google, Mozilla, Apple, Microsoft, and Opera) only support protected HTTP/2, i.e. HTTPS.

- The Let’s Encrypt program, driven by EFF and Mozilla, has launched publicly and provides free HTTPS certificates. This removes one of the obstacles for smaller sites to adopt HTTPS.

- The World Wide Web Consortium (W3C) is working on “Secure Contexts”, with the intention that important features such as Encrypted Media Extensions (EME) and Fullscreen will only be accessible with HTTPS.

A major impact of HTTPS is the need for server certificates. Without standardized interfaces, people have even been flying between continents just to securely provision certificates and private keys! The industry has therefore initiated standardization of interfaces to make certificate provisioning easier. One such project is ACME, which standardizes the interface between the HTTP server and the certification authority. Another project is LURK, which goes one step further by removing the need for private keys in the HTTP server, thereby increasing security and flexibility while decreasing the cost of, and the trust needed in, edge servers.

Evolution of the HTTPS Content Delivery Stack

To support content delivery to as many users as possible, it is essential to support a broad range of platforms, including iOS, Android, smart TVs, and gaming consoles, all controlled by other vendors. While the browser is not the most used platform, web technologies are the future for content delivery on all platforms. The reliance on platforms from other vendors means that the content provider does not have complete control over the client application, and has to use the application interfaces of each platform. Using web technologies makes this easier and ensures a high level of security and privacy for the end user.

The web platform is transforming rapidly, and the trend towards HTTPS is just one component of the ongoing evolution of the content delivery stack. To enable quick deployment and experimentation on the client side, the industry is moving towards user-space transport protocols such as QUIC. Content providers and CDNs are also migrating to HTTP/2, where the de facto standard is to mandate TLS or QUIC. The future content stack is HTTP/2 over QUIC or TCP/TLS, combined with security at higher layers. The use of HTTPS does not remove the need for security at the application layer, such as Digital Rights Management (DRM), as the technologies accomplish completely different things and complement each other. While the overhead of cryptographic processing is negligible in many use cases, the impact on video streaming servers can be significant.

What’s next?

The Internet delivery platform is transforming more quickly than ever, with a focus on increased efficiency, security, and privacy. HTTPS everywhere is soon upon us, and further steps lie ahead; even with HTTPS, many protocols and APIs reveal a significant amount of private information. IP addresses are not only identifiers, they also bear location information; TLS metadata can be used to identify Tor users; and many new APIs can, in subtle ways, be used to uniquely identify endpoints. DNS is another focus area: DNS Security Extensions (DNSSEC) and DNS PRIVate Exchange (DPRIVE) provide integrity and confidentiality against third parties. However, they do not protect against a rogue DNS service provider. The next step in the technology evolution is to update such old, information-leaking mechanisms, enabling an Internet infrastructure that provides secure and efficient content delivery and gives the end user control over whom to trust.

As highlighted in our earlier blog post An Ericsson Research view on Internet Security, Ericsson is actively engaged in the Web technology community, including the IETF, to ensure that high-performance and cost-efficient delegation of content can be combined with content provider control and end-user privacy.

One Ericsson-driven mechanism is the blind cache solution, which enables content providers to leverage deeply distributed edge caches while providing business and network management opportunities for network providers. Please read more about this in our freshly published Ericsson Technology Review article: Blind Cache in an all encrypted web.
