Autonomous ships – Learning to sail in clouds

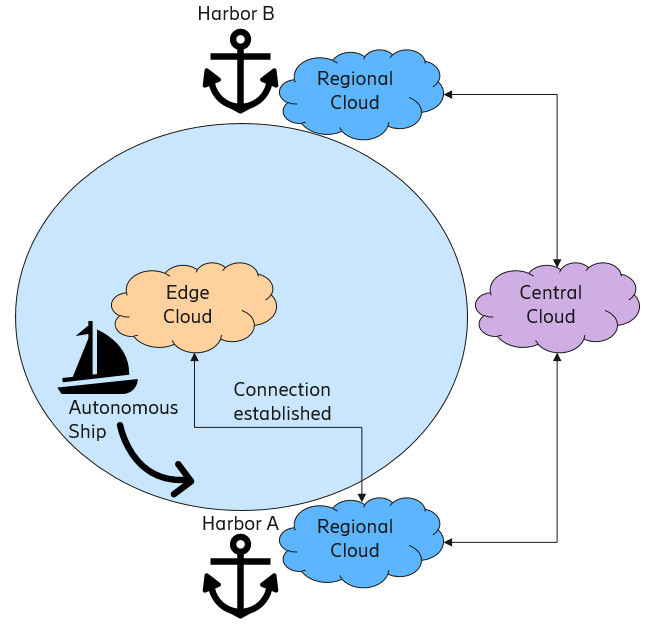

Fig 1: A simplified overview of how an autonomous ship sails between two harbors

The project is part of a national Finnish research and innovation program called Design4Value (D4V). Ericsson participates together with other partners in the DIMECC ecosystem.

In the project we focus on autonomous ships that sail independently between multiple harbors. The ships use edge computing to collect and fuse sensor data and to run control algorithms and machine learning, among other tasks. Because this processing happens at the edge, we deploy an edge cloud on each ship.

A distributed cloud is composed of multiple interconnected clouds, such as central, regional and edge clouds. The edge cloud usually has the fewest processing and other resources but the lowest latency, because it sits closest to the physical assets. In the case of an autonomous ship, the edge cloud is located aboard the ship itself, so its resources remain available even if the ship loses all connectivity on the open sea. When the ship approaches a harbor, it can connect via cellular networks and harness resources from a regional or central cloud for more demanding operations, such as machine learning. Conversely, when the ship sails out of the harbor area, it is disconnected from those clouds and relies solely on edge computing.
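The placement rule described above can be illustrated with a minimal sketch. The names `Cloud`, `Task` and `choose_cloud` are hypothetical and not part of the project; the sketch also treats regional and central clouds alike and assumes a single boolean for cellular connectivity.

```python
from dataclasses import dataclass
from enum import Enum


class Cloud(Enum):
    EDGE = "edge"          # aboard the ship, always available
    REGIONAL = "regional"  # reachable only over the harbor's cellular network


@dataclass
class Task:
    name: str
    demanding: bool  # assumption: e.g. model training is demanding, rudder control is not


def choose_cloud(task: Task, cellular_link_up: bool) -> Cloud:
    """Pick where to run a task given the current connectivity."""
    if task.demanding and cellular_link_up:
        return Cloud.REGIONAL
    # On the open sea, or for lightweight work, stay on the onboard edge cloud.
    return Cloud.EDGE
```

With this rule, a demanding training job runs in the regional cloud near a harbor and falls back to the edge cloud as soon as the cellular link is lost.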

Control algorithm

We have built a miniature version of an autonomous ship operating with two main controllers when sailing: proportional-integral-derivative (PID) and servo controllers. The PID controller is responsible for the control of the movement and the maintenance of the desired course. The servo controller stabilizes the position of the ship by controlling the rudder. In the control algorithms, data from ultrasonic sensors is used to support pathfinding.

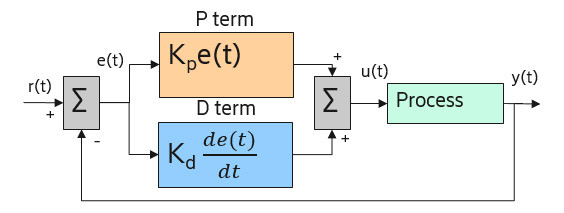

Fig 2: A block diagram of the implemented PD controller

We mainly use the (P)roportional and (D)erivative terms of the PID controller. These terms tune the movement of the ship so that it maintains a predefined distance from the shore, avoids collisions and does not drift away from the maritime route.
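As an illustration, a discrete PD controller can be sketched as follows. This is not the project's implementation: the class name, the gains and the idea of feeding it the ultrasonic distance to the shore as the measurement are assumptions made for the example.

```python
class PDController:
    """Discrete PD controller: u = Kp * e + Kd * de/dt."""

    def __init__(self, kp: float, kd: float, setpoint: float):
        self.kp = kp              # proportional gain (hypothetical value chosen by the caller)
        self.kd = kd              # derivative gain (hypothetical value chosen by the caller)
        self.setpoint = setpoint  # desired distance from the shore
        self._prev_error = None

    def update(self, measurement: float, dt: float) -> float:
        """Return a correction from the latest distance measurement."""
        error = self.setpoint - measurement
        if self._prev_error is None:
            derivative = 0.0  # no history yet on the first sample
        else:
            derivative = (error - self._prev_error) / dt
        self._prev_error = error
        return self.kp * error + self.kd * derivative


# Usage: keep 1.0 m from the shore, sampling every 0.1 s.
controller = PDController(kp=2.0, kd=0.5, setpoint=1.0)
correction = controller.update(measurement=0.8, dt=0.1)
```

The proportional term reacts to how far the ship is from the desired distance, while the derivative term damps the response when that error is changing quickly.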

Decentralized machine learning

In machine learning, a piece of software extracts additional (hidden) information from data that is fed into the system. In this project, the main goal of machine learning is to improve the overall performance of an autonomous ship. Since the edge cloud has a limited amount of computational resources, we introduce machine learning agents that are aware of their deployment environment, can communicate with other agents, and can perform machine learning in parallel in a decentralized fashion.

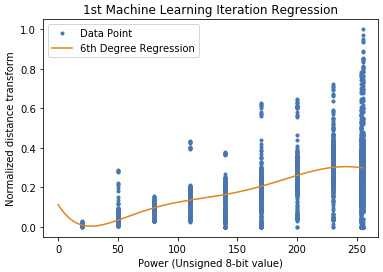

The functionality of an autonomous ship can be divided into two states: sailing and docking. In the sailing state, a machine learning agent is responsible for multi-objective optimization, where the agent learns to select an optimal load (power usage) that minimizes energy consumption and maximizes the distance traveled. In the docking state, the main objective of learning is to find the optimal load that minimizes the docking time, avoids collisions in the harbor and minimizes the risk of moving past the harbor.
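The text does not say how the two sailing objectives are combined. One common option, shown here purely as an assumption, is a weighted sum over normalized objectives. The function name, the sample format and the weight are hypothetical, and the distance objective is interpreted as distance covered.

```python
def best_load(samples, energy_weight=0.5):
    """Return the load with the lowest weighted-sum cost.

    samples: list of (load, energy_used, distance_covered) tuples,
    assumed to contain at least one positive energy and distance value.
    """
    max_energy = max(e for _, e, _ in samples)
    max_distance = max(d for _, _, d in samples)

    def cost(sample):
        _, energy, distance = sample
        # Lower energy is better, greater distance is better.
        return (energy_weight * energy / max_energy
                - (1.0 - energy_weight) * distance / max_distance)

    return min(samples, key=cost)[0]
```

Shifting `energy_weight` toward 1.0 favors economical loads, while shifting it toward 0.0 favors loads that cover more distance.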

Both of these state-specific machine learning tasks are implemented with regression, a supervised learning technique. In regression learning, an agent finds the degree and the parameters of the best-fitting regression model by using least squares estimation.

Fig 3: An example of the best fitting regression model
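The regression step can be sketched as a polynomial least-squares fit in which the degree is picked by comparing errors on held-out data. The hold-out comparison, the function names and the degree range are assumptions for illustration; the source only states that the degree and parameters are found with least squares estimation.

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations.

    Returns [c0, c1, ..., c_degree] for c0 + c1*x + ... + c_degree*x**degree.
    Assumes more distinct x values than the chosen degree.
    """
    n = degree + 1
    # Augmented normal-equation matrix [A^T A | A^T y].
    rows = [
        [sum(x ** (i + j) for x in xs) for j in range(n)]
        + [sum(y * x ** i for x, y in zip(xs, ys))]
        for i in range(n)
    ]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(rows[r][col]))
        rows[col], rows[pivot] = rows[pivot], rows[col]
        for r in range(col + 1, n):
            factor = rows[r][col] / rows[col][col]
            for c in range(col, n + 1):
                rows[r][c] -= factor * rows[col][c]
    # Back substitution.
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(rows[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (rows[i][n] - tail) / rows[i][i]
    return coeffs


def predict(coeffs, x):
    """Evaluate the fitted polynomial at x."""
    return sum(c * x ** k for k, c in enumerate(coeffs))


def squared_error(coeffs, xs, ys):
    """Sum of squared residuals of the model on the given points."""
    return sum((predict(coeffs, x) - y) ** 2 for x, y in zip(xs, ys))


def best_model(train, valid, max_degree=4):
    """Fit degrees 1..max_degree and keep the lowest validation error."""
    xs, ys = zip(*train)
    vx, vy = zip(*valid)
    candidates = []
    for degree in range(1, max_degree + 1):
        coeffs = fit_polynomial(xs, ys, degree)
        candidates.append((squared_error(coeffs, vx, vy), degree, coeffs))
    _, degree, coeffs = min(candidates)
    return degree, coeffs
```

Here `train` and `valid` are lists of `(x, y)` pairs, for example load against measured energy consumption. For high degrees or large input ranges the normal equations become ill-conditioned, so a production system would more likely rely on a numerical library.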

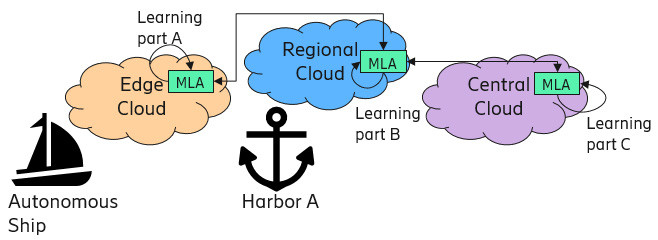

Machine learning is decentralized when an agent is unable to perform the learning within its own cloud environment. The agent that needs assistance requests help from other agents in its proximity. If some agents can assist, the requesting agent informs them of the learning objective, divides the learning process into feasible parts and distributes those parts among them. When the agents have finished their parts, the requesting agent aggregates the learning results and optimizes its overall performance.

Fig 4: An overview of how the agents decentralize machine learning by utilizing resources from the distributed cloud environment
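The divide, distribute and aggregate pattern can be sketched with a simple linear least-squares fit. Each helper computes partial sums over its own share of the data, and the requesting agent combines them into one model. Worker threads stand in for remote agents here, and all function names are hypothetical; the real agents communicate across the distributed cloud, and their protocol is not described in this post.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_sums(shard):
    """Work done by one helper agent: sufficient statistics for its shard."""
    n = len(shard)
    sx = sum(x for x, _ in shard)
    sy = sum(y for _, y in shard)
    sxx = sum(x * x for x, _ in shard)
    sxy = sum(x * y for x, y in shard)
    return n, sx, sy, sxx, sxy


def split(data, parts):
    """Divide the data into `parts` roughly equal shards."""
    return [data[i::parts] for i in range(parts)]


def decentralized_linear_fit(data, helpers=3):
    """Requesting agent: distribute shards, then aggregate into one model."""
    shards = [s for s in split(data, helpers) if s]
    with ThreadPoolExecutor(max_workers=len(shards)) as pool:
        results = list(pool.map(partial_sums, shards))
    # Aggregation: the partial sums simply add up across helpers.
    n, sx, sy, sxx, sxy = (sum(col) for col in zip(*results))
    slope = (n * sxy - sx * sy) / (n * sxx - sx * sx)
    intercept = (sy - slope * sx) / n
    return slope, intercept
```

Because the sums are additive, the aggregated result is the same as fitting all of the data in one place, yet no single agent has to process the full data set.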

Conclusions

Based on our measurements, we discovered that the machine learning agents managed to extract useful information from the data. By making use of the extracted information, the decentralized machine learning agents are able to minimize energy consumption, minimize docking time, and provide reliable, collision-free docking. Moreover, decentralization of machine learning enabled learning in even more constrained environments.
