Network performance and the metaverse: Can 5G deliver what’s needed?

For the metaverse to succeed, it’s important that extended reality (XR) devices, i.e. augmented reality (AR) and virtual reality (VR) devices, are small, light, and even fashionable. Reducing the size, or form factor, of devices is a real engineering challenge that is influenced by multiple factors.

Some of the most demanding XR processing functions are currently performed locally on the device itself, which consumes a lot of power. Higher power consumption means larger battery packs and, therefore, larger form factors.

One way to reduce power consumption (and thus battery size and form factor) is to use edge/cloud computing to offload portions of the complex and demanding real-time application processing. This is key to faster market penetration of XR-capable devices, and it may be even more important for metaverse-related services.

A further aspect is that most existing XR devices are connected via Wi-Fi today. This type of connectivity prevents a truly mobile experience and thereby limits the possibilities of XR and metaverse use cases, which will require seamless connectivity everywhere.

5G networks will play a central role in enabling future, fully mobile XR and metaverse services and experiences. As a first step, however, it is vital to understand how these services behave and how they fundamentally differ from traditional mobile broadband services. This understanding reveals the new network requirements and, eventually, the potential network challenges.

In this article, we focus on metaverse applications that rely on cloud-enabled VR and AR. Interestingly, these XR services have similarities with services already in operation, such as cloud gaming. Understanding all these services is essential for dimensioning 5G and future networks and for developing the right features to meet the requirements of advanced XR services.

Through different test cases, we aim to understand how cloud gaming and AR services behave, identify their traffic characteristics and, ultimately, determine the requirements they place on 5G networks and beyond.

Our data reveals several new key traffic characteristics

All future XR and metaverse services will rely on real-time image processing for rendering or virtual object generation. In traditional video streaming, by contrast, traffic is generated without tight latency requirements and depends mainly on how dynamic the content is, with few interaction requirements.

The metaverse, on the other hand, is not only immersive but also interactive. Its traffic is therefore expected to be very dynamic, driven by users’ head movements and gestures as well as by changes in virtual environments based on real-time user control feedback.

We performed several measurements of cloud gaming, in which a user plays a graphically intensive game on a device while the game itself runs on a cloud game server that streams the audio and video to the device.

While common traffic challenges have been extensively discussed in the open literature, our measurement studies revealed several new traffic characteristics that should be considered in future network optimization and design:

- Dominant video traffic: The downlink traffic is dominated by the video stream. Video frames are sent either in one single burst or paced out in several smaller bursts, which the network should handle together. Frame-rate adaptation is uncommon, but it was observed in one application under poor radio conditions.

- Multiple traffic flows: The User Datagram Protocol (UDP) is commonly used, and several streams of video data, audio data and different types of control information often share the same UDP connection. This makes it hard for the network to distinguish the different data streams at link level. In the uplink, several data streams also share the same connection.

- Rate adaptation dynamicity: When channel capacity drops, the applications adapt to the lower bitrates, but in different ways depending on the application. Some applications follow the channel very quickly and constantly try to reclaim any free capacity and push the rates up. Others instead focus on stability and adapt to the lowest perceived bitrate over some period (up to 30 seconds).

- Latency variation and application dependency: Latency variation is typically handled by jitter buffers on the application client side, but the approach differs between applications. Some applications favor stability by using a larger jitter buffer, while others favor game responsiveness and are therefore more sensitive to variations in network delay. Network latency jitter should therefore be minimized, bearing in mind that client jitter buffer capacity differs between applications.
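The two rate-adaptation behaviors described above can be contrasted with a minimal sketch. It assumes per-second channel capacity estimates; the hypothetical "responsive" policy simply targets the latest estimate, while the hypothetical "stable" policy targets the minimum over a sliding window (default 30 samples, echoing the period of up to 30 seconds noted above). Both policies and all function names are illustrative simplifications, not the logic of any measured application.

```python
from collections import deque
from typing import List, Sequence


def responsive_rates(capacity_kbps: Sequence[float]) -> List[float]:
    """Track the channel closely: target the latest capacity estimate each second."""
    return list(capacity_kbps)


def stable_rates(capacity_kbps: Sequence[float], window: int = 30) -> List[float]:
    """Favor stability: target the lowest capacity seen in the last `window` samples."""
    recent: deque = deque(maxlen=window)
    rates = []
    for c in capacity_kbps:
        recent.append(c)
        rates.append(min(recent))
    return rates


# A short capacity dip (kbit/s): 5 good seconds, 2 poor seconds, 5 good seconds.
capacity = [20000] * 5 + [8000] * 2 + [20000] * 5
print(responsive_rates(capacity)[7])        # 20000: recovers right after the dip
print(stable_rates(capacity, window=5)[7])  # 8000: still held at the reduced rate
```

With a 5-sample window, the stable policy keeps the reduced rate until the dip has left the window (index 11), whereas the responsive policy recovers immediately. This is the trade-off between reclaiming free capacity quickly and avoiding rate oscillations.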

Impact of network offloading for AR

AR is an important wide-area use case. Its future, though, is not yet fully clear because of uncertainty about the network offloading architecture, the expected end-user target quality and the application latency budget. In the following, we analyze these aspects and quantify their impact on 5G networks.

Many AR application functions must work together to render images based on interactions between the physical and virtual environments. Several functions play a crucial role in AR applications, including object detection, localization and mapping, rendering, and 3D reconstruction.

Specifically, object detection (OD) and simultaneous localization and mapping (SLAM) are identified as two key processes to offload from the device to the edge. SLAM provides the 3D position of the device as well as a map of the environment. OD identifies objects in the environment, providing each object’s category and its location in the frame.

A state-of-the-art visual-inertial SLAM algorithm is decoupled into three sub-processes to allow for different levels of offloading:

- Localization (L): the localization module computes the movement of the device at each step from sensor data at a high rate, providing positioning in real time; at a lower rate, it compares the acquired data with the map of the environment to correct odometry drift.

- Mapping (M): the mapping module stores key environment landmarks identified by the localization module and allows positioning drift to be corrected when landmarks are seen multiple times.

- Map optimization (O): the map optimization sub-process runs at a very low rate compared to the other processes and optimizes the estimated poses of the device and landmark locations according to all constraints found in the two previous modules.
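Decoupling SLAM into L, M and O means that each sub-process can run on its own schedule. The sketch below assumes a shared tick clock and hypothetical periods (every tick, every 10 ticks, every 100 ticks); only the ordering of the rates comes from the text, and the loop merely counts invocations instead of running real SLAM code.

```python
from collections import Counter
from typing import Dict

# Hypothetical periods in ticks of a shared sensor clock. Only the ordering
# L (high rate) < M (lower rate) < O (very low rate) comes from the text.
PERIODS: Dict[str, int] = {"L": 1, "M": 10, "O": 100}


def run_slam(ticks: int, periods: Dict[str, int] = PERIODS) -> Counter:
    """Count how often each decoupled SLAM sub-process would run over `ticks` ticks."""
    calls: Counter = Counter()
    for t in range(ticks):
        for name, period in periods.items():
            if t % period == 0:
                # A real system would invoke the sub-process here, on the device
                # or at the edge depending on the offloading level.
                calls[name] += 1
    return calls


print(run_slam(1000))  # Counter({'L': 1000, 'M': 100, 'O': 10})
```

Because the sub-processes are only loosely coupled, the rarely run ones interact with the rest of the pipeline far less often than L does, which hints at why such a split lends itself to different levels of offloading.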

The object detection application comprises two main processes: an object detector (D) and an object tracker (T). They can be distributed between a device and an edge cloud:

- Object detector: Deep neural network (DNN)-based estimation of objects in an image. It can be either a heavyweight object detector (in principle accurate, but with slow, resource-hungry inference due to a complex DNN) or a lightweight object detector (a simpler DNN with faster, less resource-demanding inference, but less accurate estimates).

- Object tracker: fast and fairly accurate estimation of previously observed objects in new images. Of the processes described, this is the least resource-demanding one.
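A common way to combine a slow, accurate detector with a fast tracker is to run detection only every N frames and track in between. The sketch below assumes that pattern, with a hypothetical detection interval and stub functions standing in for the DNN detector and the tracker; the article does not specify how its application interleaves the two.

```python
from typing import Callable, Iterable, List, Tuple

Box = Tuple[str, float, float, float, float]  # (label, x, y, width, height)


def process_stream(
    frames: Iterable,
    detect: Callable[[object], List[Box]],
    track: Callable[[object, List[Box]], List[Box]],
    detect_every: int = 10,
) -> Tuple[List[Box], List[str]]:
    """Run the expensive detector every `detect_every` frames and the cheap tracker otherwise."""
    objects: List[Box] = []
    log: List[str] = []
    for i, frame in enumerate(frames):
        if i % detect_every == 0:
            objects = detect(frame)          # could run at the edge in higher offload modes
            log.append("D")
        else:
            objects = track(frame, objects)  # updates previously observed objects
            log.append("T")
    return objects, log


# Hypothetical stubs: "detect" one cup at x = frame index, "track" shifts it right by 1.
def detect_stub(frame: int) -> List[Box]:
    return [("cup", float(frame), 0.0, 1.0, 1.0)]


def track_stub(frame: int, objs: List[Box]) -> List[Box]:
    return [(label, x + 1.0, y, w, h) for (label, x, y, w, h) in objs]


objects, log = process_stream(range(5), detect_stub, track_stub, detect_every=3)
print("".join(log))  # DTTDT
```

The detection interval is the knob that trades accuracy against compute (or, if the detector is offloaded, against uplink traffic and network round trips).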

The sub-processes to offload are chosen to provide versatility in scenarios with poorer network performance and/or limited computing resources on the device. Depending on which sub-processes are offloaded, we define three offloading modes: low, mid and high.
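The offloading modes can be expressed as a simple placement table. The article does not list which sub-processes belong to each mode, so the mapping below is hypothetical: each higher mode moves a superset of sub-processes to the edge, and only the OD mid-offload split (detector at the edge, tracker on the device) is suggested by the latency study later in this post.

```python
from enum import Enum
from typing import Dict, Set


class Mode(Enum):
    LOW = "low"
    MID = "mid"
    HIGH = "high"


SUBPROCESSES = {"SLAM": ("L", "M", "O"), "OD": ("D", "T")}

# HYPOTHETICAL mapping: sub-processes that run at the edge in each mode.
EDGE: Dict[str, Dict[Mode, Set[str]]] = {
    "SLAM": {Mode.LOW: {"O"}, Mode.MID: {"M", "O"}, Mode.HIGH: {"L", "M", "O"}},
    "OD": {Mode.LOW: set(), Mode.MID: {"D"}, Mode.HIGH: {"D", "T"}},
}


def placement(app: str, mode: Mode) -> Dict[str, str]:
    """Map each sub-process of `app` to 'edge' or 'device' for the given mode."""
    edge = EDGE[app][mode]
    return {p: ("edge" if p in edge else "device") for p in SUBPROCESSES[app]}


def is_nested(app: str) -> bool:
    """Check that each higher mode offloads a superset of the lower mode's sub-processes."""
    low, mid, high = (EDGE[app][m] for m in (Mode.LOW, Mode.MID, Mode.HIGH))
    return low <= mid <= high


print(placement("OD", Mode.MID))  # {'D': 'edge', 'T': 'device'}
```

Keeping the placement as data rather than code makes it easy to switch modes at run time, for example when network conditions or device resources change.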

Quantifying the impact of AR cloud offload

Our measurement studies evaluate the impact of offloading different sub-processes on device power consumption and on the bit rate required from the 5G network. In addition, the studies reveal how the target quality of experience affects network latency requirements.

In general, a high degree of network offload is desirable for XR devices, since it reduces the computational and processing burden on the device and thus helps reduce its power consumption.

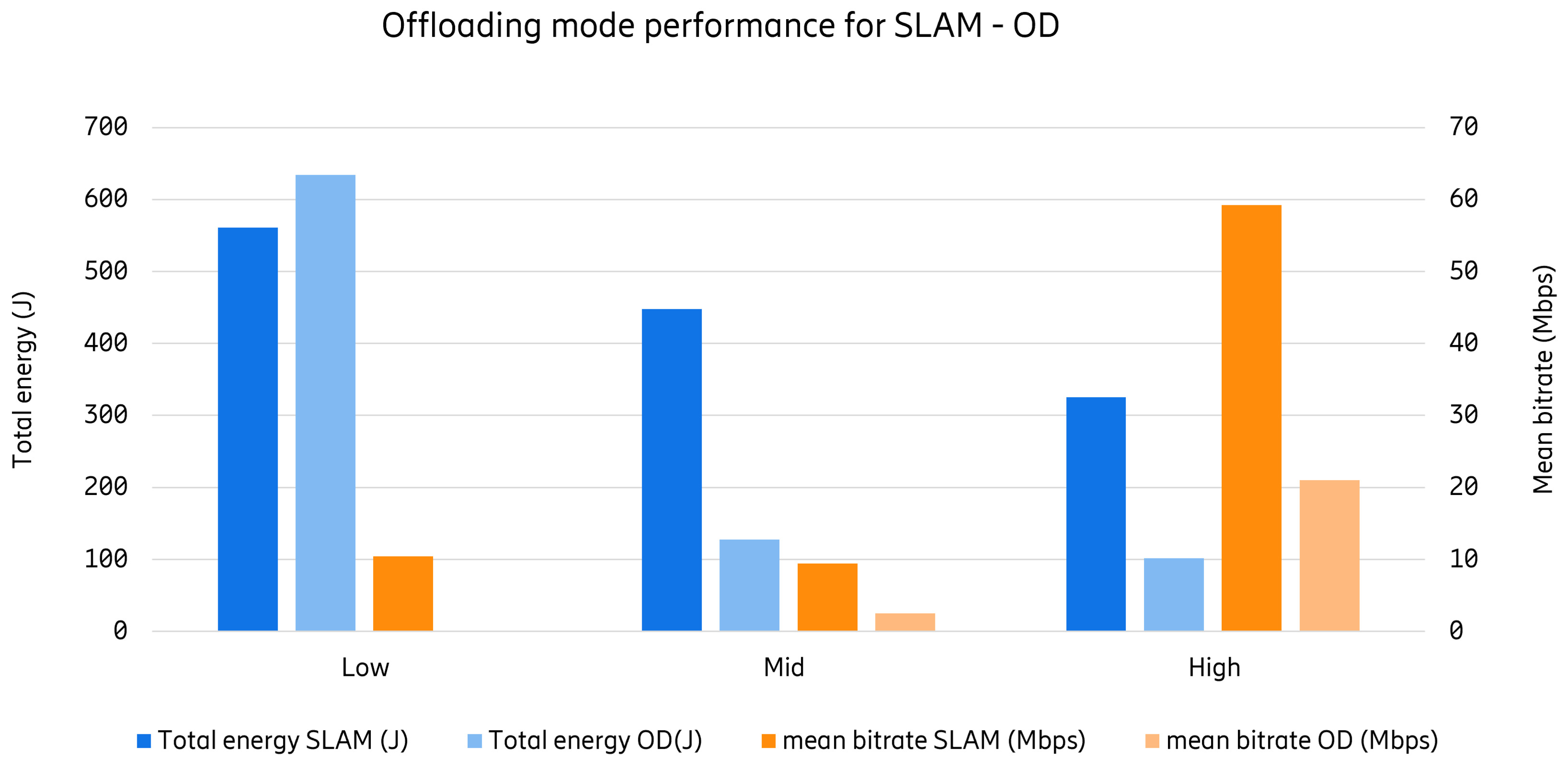

The accompanying figure illustrates the total energy consumption when parts of the SLAM and OD processes are offloaded to the cloud. Energy consumption was measured over an observation window of around 100 seconds in all scenarios. Higher offload results in lower energy consumption in both applications; however, it requires higher data rates, since more communication is needed between the edge processing and the device.
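The energy figures are totals over a window of about 100 seconds. One generic way to obtain such a total from sampled device power is trapezoidal integration, sketched below; this is not necessarily the measurement method used in the study, and the sample values are made up.

```python
from typing import Sequence


def energy_joules(times_s: Sequence[float], power_w: Sequence[float]) -> float:
    """Integrate sampled power (W) over time (s) with the trapezoidal rule; result in joules."""
    if len(times_s) != len(power_w) or len(times_s) < 2:
        raise ValueError("need at least two time/power samples of equal length")
    return sum(
        (t1 - t0) * (p0 + p1) / 2
        for t0, t1, p0, p1 in zip(times_s, times_s[1:], power_w, power_w[1:])
    )


# Made-up samples: a constant 2 W draw sampled once per second over a 100 s window.
times = list(range(101))
print(energy_joules(times, [2.0] * 101))  # 200.0
```

Dividing the result by the window length gives the average power, which makes runs of slightly different duration comparable.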

Total energy consumption and bit rate requirements in different offloading levels

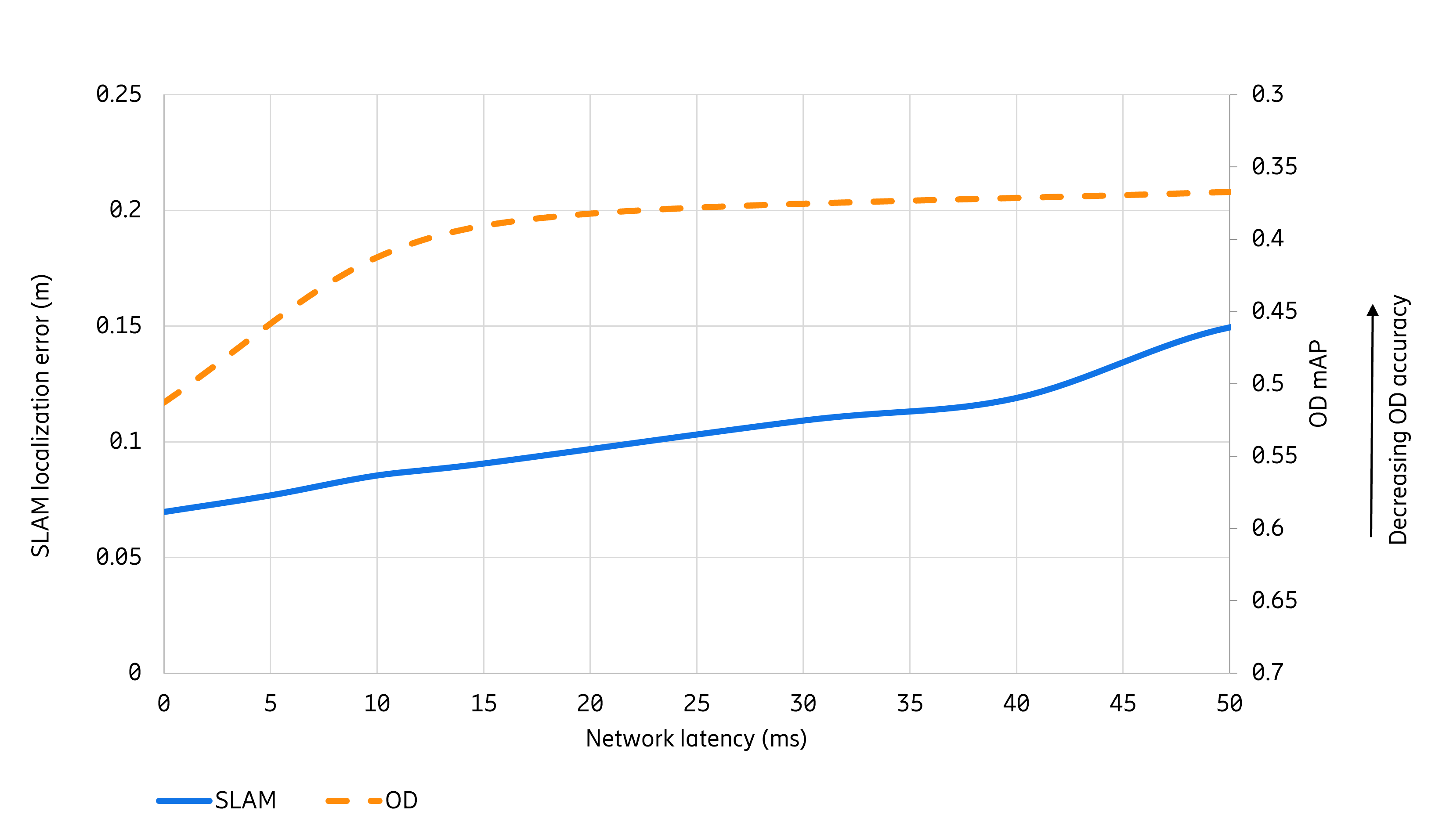

Another important aspect to consider is network latency. The network latency requirement depends strongly on the expected end-user quality of experience. For SLAM, we quantify quality as the localization error (in meters). For OD, accuracy can be measured with the mean average precision (mAP) metric: the closer mAP is to 1, the better the accuracy.
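As a reminder of what the mAP metric measures, here is a minimal sketch. It assumes each detection has already been matched to ground truth (for example with an IoU threshold) and marked as a true or false positive, and it uses all-point interpolated average precision; benchmarks such as PASCAL VOC and COCO use variants of this definition, and the article does not state which one was used.

```python
from typing import Dict, List, Tuple

Scored = List[Tuple[float, bool]]  # (confidence, is_true_positive)


def average_precision(scored: Scored, num_gt: int) -> float:
    """All-point interpolated AP for one class with `num_gt` ground-truth objects."""
    if num_gt <= 0:
        raise ValueError("num_gt must be positive")
    ranked = sorted(scored, key=lambda s: s[0], reverse=True)
    tp = fp = 0
    precisions, recalls = [], []
    for _, is_tp in ranked:
        tp += is_tp
        fp += not is_tp
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_gt)
    # Make precision monotonically non-increasing (the interpolation step).
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    # Area under the stepwise precision/recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class: Dict[str, Tuple[Scored, int]]) -> float:
    """Mean of the per-class APs; 1.0 means perfect detection of every class."""
    aps = [average_precision(scored, n) for scored, n in per_class.values()]
    return sum(aps) / len(aps)
```

For example, a class with both objects detected at the top of the ranking scores AP = 1.0, while one false positive ranked between two true positives lowers the AP to 5/6.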

The accompanying figure shows how sensitive the user-experienced quality is to the one-way network latency between the edge and the device. The more accurate the SLAM localization and the higher the target quality of the OD application, the lower the network latency must be.

Network latency vs. SLAM and OD quality in a given high offload scenario

Acceptable localization error and target quality may vary between applications and with the expected end-user experience, and they are likely to change in the future. Nonetheless, a clear trend can be observed: smaller localization errors and higher target qualities result in more stringent network latency requirements.

5G networks should therefore be prepared to support ever lower latency as expectations for AR user experience rise.

Impact of non-network related XR processes

Another of our AR measurement studies evaluates how non-network latency components affect the network latency budget, and we found that their effect is substantial.

Non-network latency originates from XR-layer functions such as SLAM, object detection, encoding/decoding and rendering, as well as from application-layer functions such as game logic.

In our study, the application consists of a cloud game server and game clients on the devices. The XR layer also includes environment detection, which creates a virtual representation of the real world in the game server’s virtual environment. The offloaded sub-processes match the mid-offload scenario, in which environment detection is split into object detection and object tracking. In this approach, the upstream video encoder and decoder and the object detection component are not part of the latency-critical path.

In general, the more latency the applications consume, the less latency budget remains for the network at the same end-user target quality. The table below shows the latency contributions of device and edge processing. SLAM, object detection, rendering and video encoding/decoding contribute the most to latency, which makes the network latency budget even more stringent.

In addition, the latency of each component varies, since it depends on object complexity and on the hardware’s ability to handle that complexity. The network should therefore be robust to varying application latency. Note that the video decoding and object detection latencies in the table do not represent latency-optimized implementations.

| Component | Latency (ms) | Location |
|---|---|---|
| SLAM | 50-70 | AR device |
| Object tracker | 2-5 | AR device |
| Game simulation | 2-5 | remote server |
| Rendering | 2-16 | AR device |
| AR device | 7-8 | AR device |
| Video decoding | 12 | remote server |
| Object detection | 250 | remote server |

Latency contributions from XR application functions
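The effect of application latency on the network budget can be made concrete with simple arithmetic over the table. The sketch subtracts the critical-path processing latencies from an end-to-end budget. The 150 ms budget is purely hypothetical, and treating the row labelled "AR device" as on the critical path is an assumption; the exclusion of video decoding and object detection follows the mid-offload description above.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Component:
    name: str
    min_ms: float
    max_ms: float
    on_critical_path: bool


# Latencies from the table. Video decoding and object detection are off the
# critical path in the mid-offload scenario; the "AR device" row is ASSUMED on it.
COMPONENTS: List[Component] = [
    Component("SLAM", 50, 70, True),
    Component("Object tracker", 2, 5, True),
    Component("Game simulation", 2, 5, True),
    Component("Rendering", 2, 16, True),
    Component("AR device", 7, 8, True),
    Component("Video decoding", 12, 12, False),
    Component("Object detection", 250, 250, False),
]


def network_budget_ms(end_to_end_ms: float, components: List[Component]) -> Tuple[float, float]:
    """Return (worst-case, best-case) latency left for the network.

    A negative value means processing alone already exceeds the end-to-end budget.
    """
    critical = [c for c in components if c.on_critical_path]
    worst = end_to_end_ms - sum(c.max_ms for c in critical)
    best = end_to_end_ms - sum(c.min_ms for c in critical)
    return worst, best


# HYPOTHETICAL end-to-end budget of 150 ms, chosen only for illustration.
print(network_budget_ms(150, COMPONENTS))  # (46, 87)
```

The spread between the two results (41 ms here) comes entirely from application-side variation, which is why the network needs to be robust to varying application latency.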

Addressing emerging XR network challenges

The results above confirm that numerous XR networking challenges need to be addressed in the coming years.

A very important challenge is to make 5G networks ready for XR traffic, which differs substantially from mobile broadband traffic. To help operators around the world work with the emerging ecosystem of XR original equipment manufacturers (OEMs), a globally harmonized XR configuration of the 5G QoS Identifier (5QI), data radio bearer (DRB), protocol data unit (PDU) and slice should be strived for. One desirable configuration places XR traffic in a separate slice, which offers complete traffic separation and thus potentially separate billing, easier RAN and transport network partitioning, and separate user plane function (UPF) breakouts. This can be desirable from a business and deployment point of view.

The edge deployment configuration is another important aspect. For AR in wide-area public networks, it becomes apparent that wide-area, mobility-supporting XR will be difficult to deliver without a sufficiently densified network, in terms of both RAN and multi-access edge computing (MEC), and an optimized network configuration.
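The harmonized slice and 5QI configuration discussed above can be pictured as a per-operator record that is compared across operators. The `XrProfile` record, its field names and all values are hypothetical placeholders, not a 3GPP data structure; the value ranges checked (8-bit SST, 24-bit SD, 5QI in 0..255) reflect 3GPP field sizes as we understand them and should be verified against the specifications. DRBs are left out because the mapping of QoS flows to DRBs is decided in the RAN.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class SNssai:
    """Slice identifier: 8-bit Slice/Service Type plus optional 24-bit Slice Differentiator."""
    sst: int
    sd: Optional[int] = None

    def __post_init__(self) -> None:
        if not 0 <= self.sst <= 0xFF:
            raise ValueError("SST must fit in 8 bits")
        if self.sd is not None and not 0 <= self.sd <= 0xFFFFFF:
            raise ValueError("SD must fit in 24 bits")


@dataclass(frozen=True)
class XrProfile:
    """HYPOTHETICAL per-operator XR configuration record, for illustration only."""
    operator: str
    s_nssai: SNssai
    five_qi: int   # 5G QoS Identifier used for the XR QoS flow
    upf_site: str  # hypothetical label for the edge UPF breakout

    def __post_init__(self) -> None:
        if not 0 <= self.five_qi <= 255:
            raise ValueError("5QI must be in 0..255")


def is_harmonized(profiles: Iterable[XrProfile]) -> bool:
    """True if all operators use the same slice identifier and 5QI for XR traffic."""
    return len({(p.s_nssai, p.five_qi) for p in profiles}) <= 1


# Placeholder values only; the UPF sites may differ while the XR profile stays harmonized.
xr_slice = SNssai(sst=1, sd=0x0000A1)
a = XrProfile("operator-a", xr_slice, five_qi=80, upf_site="edge-1")
b = XrProfile("operator-b", xr_slice, five_qi=80, upf_site="edge-7")
print(is_harmonized([a, b]))  # True
```

Separating the harmonized part (slice identifier and 5QI) from deployment details such as the UPF site mirrors the idea that operators can align on XR treatment while keeping their own edge deployments.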

Standards also need to evolve. 3GPP is currently addressing network protocol enhancements for future XR applications. Our results indicate that 3GPP is making reasonable assumptions about traffic characteristics and moving in the right direction, and that its findings and enhancement areas are relevant for better supporting a wide range of XR applications and network requirements.

Today, 3GPP is working on three important areas: radio network capacity, device power savings and XR awareness. Radio network capacity and device power savings are well-established topics in 3GPP, whereas XR awareness is a relatively new concept. “XR awareness in RAN” means that the RAN receives information about the XR applications and their traffic characteristics, which can help it increase capacity and power-saving gains. For example, knowing the application PDU set size, or the packet delay budget (PDB) for a given PDU set, can allow the network to schedule resources better and increase capacity. Similarly, knowing the periodicity and jitter of the different flows can help the network select suitable discontinuous reception (DRX) configurations.
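To illustrate why PDU set size and PDB help a scheduler, the sketch below runs a simplified, offline earliest-deadline-first pass over a known batch of PDU sets on a single link. It is not a 3GPP-specified algorithm (scheduling is implementation-specific); the capacity, sizes and PDB values are made up, and dropping a set that cannot complete in time is an assumption that an incomplete set is useless to the receiver.

```python
import heapq
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(order=True)
class PduSet:
    deadline_ms: float  # arrival time + packet delay budget (PDB); the only sort key
    set_id: str = field(compare=False)
    arrival_ms: float = field(compare=False)
    size_bytes: int = field(compare=False)


def make_set(set_id: str, arrival_ms: float, pdb_ms: float, size_bytes: int) -> PduSet:
    return PduSet(arrival_ms + pdb_ms, set_id, arrival_ms, size_bytes)


def schedule(sets: List[PduSet], capacity_bytes_per_ms: float) -> Tuple[List[str], List[str]]:
    """Serve whole PDU sets earliest-deadline-first; drop sets that cannot finish in time."""
    heap = list(sets)
    heapq.heapify(heap)
    now = 0.0
    served, dropped = [], []
    while heap:
        s = heapq.heappop(heap)
        start = max(now, s.arrival_ms)  # cannot start sending before the set arrives
        finish = start + s.size_bytes / capacity_bytes_per_ms
        if finish <= s.deadline_ms:
            served.append(s.set_id)
            now = finish
        else:
            dropped.append(s.set_id)  # no capacity is spent on a set that would be late
    return served, dropped


sets = [
    make_set("video-1", arrival_ms=0, pdb_ms=10, size_bytes=6000),
    make_set("audio-1", arrival_ms=0, pdb_ms=30, size_bytes=500),
    make_set("video-2", arrival_ms=2, pdb_ms=10, size_bytes=8000),
]
print(schedule(sets, capacity_bytes_per_ms=1000))  # (['video-1', 'audio-1'], ['video-2'])
```

Dropping video-2 as a whole, rather than sending most of it late, leaves the link free for audio-1. Knowing where a PDU set begins and ends, and what its PDB is, is what makes such a decision possible.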

The requirements on the network clearly depend to a great extent on the traffic characteristics, the offloading scenarios and the user target quality. Traffic characteristics and requirements may also vary from application to application, and even within a session of the same XR application. It is therefore important that the network knows the key characteristics of the application and its traffic, so that it can better manage its own resources and meet the requirements of the specific application.

Enhancements in all these areas aim to introduce new XR-specific features that give the network a higher degree of adaptability and intelligence, to cope with future, more demanding 5G XR applications and offloading scenarios.

Summary and what’s next?

XR and metaverse services promise to be a game changer for mobile communication. One of the key challenges for today’s XR devices is their form factor, i.e. their size. Battery size limits how small devices can be; reducing device power consumption is therefore essential to fulfilling that promise.

Offloading computational processes has proven to be an effective way to reduce device power consumption. Mobile networks can provide the connectivity that enables edge computing and, therefore, the offloading of processes to the cloud. Moving functionality to the cloud does not by itself determine the requirements: they will be specific to each XR application, its algorithms, processes and functions, its offloading scheme and the end-user target quality. XR requirements therefore cannot be captured by one ‘magic’ number that fits all applications and scenarios.

This poses a challenge for 5G networks. It is therefore crucial that operators strategically assess which XR services and applications, and hence which specific requirements, they want to support. 5G evolution should also move toward XR awareness in the network and RAN features, such as those outlined here and their enhancements in 3GPP Rel-18, which provide the flexibility, adaptability and intelligence needed to meet the specific requirements.


Authors

Application & Traffic Analyst at BNEW NSV BB Analytics

Experienced Researcher at Ericsson Research

Master Researcher at Ericsson Research

Experienced Researcher at Ericsson Research

Experienced Researcher at Ericsson Research

Ecosystem Co-creation Director at Ericsson

Chief Architect at Ericsson Inc. in Silicon Valley

At the Ericsson Blog, we provide insight to make complex ideas on technology, innovation and business simple.