Effective and explainable AI – a use case of false base station detection

XAI and novelty detection

The research field of explainable AI (XAI) addresses one of the crucial aspects of AI trustworthiness: improving the transparency of decision making.

The result of applying XAI to an ML task is called an explanation. In this blog post, we describe how XAI can build trust through explanation. You’ll also learn about an effective novelty detection method that provides explanations along with detections, without the need for additional computations.

One common application of ML/AI-based solutions is novelty detection, where a model is trained on data representing normality in order to predict whether new data resembles the known patterns or is something entirely different. In more formal terms, the training data defines a single class, and the model predicts whether new data belongs to that class.
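As a rough illustration of the one-class setup described above, the sketch below learns per-feature statistics from normal-only data and flags a new row when any feature deviates strongly. The z-score rule, the threshold of 3.0, and the toy numbers are assumptions chosen for brevity; this is not the detection method discussed later in this post.

```python
from statistics import mean, stdev

def fit_one_class(train_rows):
    """Learn per-feature mean and standard deviation from normal-only data."""
    columns = list(zip(*train_rows))
    return [(mean(col), stdev(col)) for col in columns]

def is_novel(model, row, threshold=3.0):
    """Flag a row whose largest per-feature z-score exceeds the threshold."""
    z_scores = [abs(x - mu) / sigma for x, (mu, sigma) in zip(row, model)]
    return max(z_scores) > threshold

# Toy training data: every row belongs to the single "normal" class.
normal = [(1.0, 10.0), (1.2, 9.5), (0.9, 10.4), (1.1, 10.1), (1.0, 9.8)]
model = fit_one_class(normal)

print(is_novel(model, (1.05, 10.0)))  # close to the training data
print(is_novel(model, (1.05, 25.0)))  # second feature far outside the known range
```

Note that the model never sees an example of a novelty during training; it only learns what normal looks like.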

The group of use cases that rely on novelty detection is one example of where XAI can improve the trustworthiness of the solution. In many cases, highlighting the feature values that contribute most to triggering an alarm in a novelty detection system can aid in determining the severity of the alarm.

An example from the image data domain is perhaps the easiest to visualize. Consider a novelty detection implementation, as shown in the figure below.

Figure 1: Example of novelty detection on a set of images of sweaters.

The figure shows a situation where the purpose is to identify images of sweaters and detect those that depict something else. Some valid sweater images could be missed because they deviate in background, noise, or lighting conditions introduced by the image capturing device. Revealing the pixels that had the most impact on the ML model’s decision makes it possible to re-evaluate such detections and refine the result.

For other novelty detection use cases, the situation may be similar. Understanding which features had the greatest impact on detection can significantly enhance the robustness and reliability of the novelty detection solution.

Research on XAI applied to novelty detection has focused mainly on insights into model predictions, as in the example above. The published methods can be divided into two categories:

- methods that are agnostic to the novelty detection method they aim to explain

- methods that are tailored to give explanations for a specific novelty detection method

To explain the reason for a particular data sample being detected as a novelty, one of the common agnostic approaches is to approximate the complex model by a simpler, explainable model for small deviations from the sample. Popular examples include Local Interpretable Model-agnostic Explanations (LIME) and various solutions based on Shapley values.
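To make the Shapley-value idea concrete, here is a minimal sketch that computes exact Shapley values for a model-agnostic anomaly score by replacing features with a baseline. The function `anomaly_score`, the baseline `center`, and the sample values are hypothetical; practical libraries approximate this computation because the exact version grows exponentially with the number of features.

```python
from itertools import combinations
from math import factorial

def shapley_values(score, sample, baseline):
    """Exact Shapley values of `score` at `sample`, relative to `baseline`."""
    n = len(sample)

    def value(subset):
        # Features in `subset` take the sample's value, the rest the baseline's.
        mixed = [sample[i] if i in subset else baseline[i] for i in range(n)]
        return score(mixed)

    contributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_i = value(set(subset) | {i})
                without_i = value(set(subset))
                phi += weight * (with_i - without_i)
        contributions.append(phi)
    return contributions

# Hypothetical anomaly score: squared distance from the center of normal data.
center = [1.0, 10.0, 3.0]

def anomaly_score(row):
    return sum((x - c) ** 2 for x, c in zip(row, center))

sample = [1.0, 14.0, 3.5]
phi = shapley_values(anomaly_score, sample, baseline=center)
print(phi)  # the second feature receives by far the largest share
```

Because this toy score is additive, each feature's Shapley value equals its own squared deviation, and the values sum to the difference between the sample's score and the baseline's score.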

XAI for tree-based novelty detection

We previously presented a tree-based method, the Anomaly Detection Forest (ADF), for novelty detection and used it to detect anomalies in network traffic. Despite its relative simplicity, this method provides solid novelty detection performance on many types of data where the progress of deep learning methods has been slower than for image, audio, or natural language processing. We also previously developed a specialized method to explain individual predictions of an ADF model but, as with the agnostic methods mentioned above, it is a post-processing step that adds computational overhead.

In our recent work, we decided to investigate if we could create a novelty detection model using explanations as a starting point. The result is a new algorithm that provides competitive detection accuracy and outputs explanations for detections without extra computational effort. A more detailed description of this method can be found at the end of this post.

XAI research evaluation

One challenge for the research community around XAI and novelty detection is the difficulty of comparing the quality of explanations. While it's known which samples in the test set do not belong to the training class, the reasons why they fall outside the class are often unclear or insufficiently specified. Because of this, research results for XAI methods have often been presented qualitatively. For quantitative results, one approach has been to manually alter some feature values in records to obtain data with known explanations of the novelties. However, there are still very few public datasets with a published common ground truth for explanations.

Network-based false base station detection - a use case

False base stations are devices that impersonate legitimate base stations for malicious activities such as unauthorized surveillance or sabotage of communication. Being able to detect such activities helps increase the trustworthiness of the network.

Using data from 3GPP standardized measurement reports, it is possible to build a novelty detection model of the expected signal strengths of neighboring cells for each serving cell. Using such a model, we have previously shown that it is possible to detect unexpected values for the signal strengths of surrounding cells caused by the presence of a false base station. We published a full paper on this, titled "Applying Machine Learning on RSRP-based Features for False Base Station Detection", in the Proceedings of the 17th International Conference on Availability, Reliability and Security (ARES ’22).

Now, let us describe how we conducted experiments to test our solution.

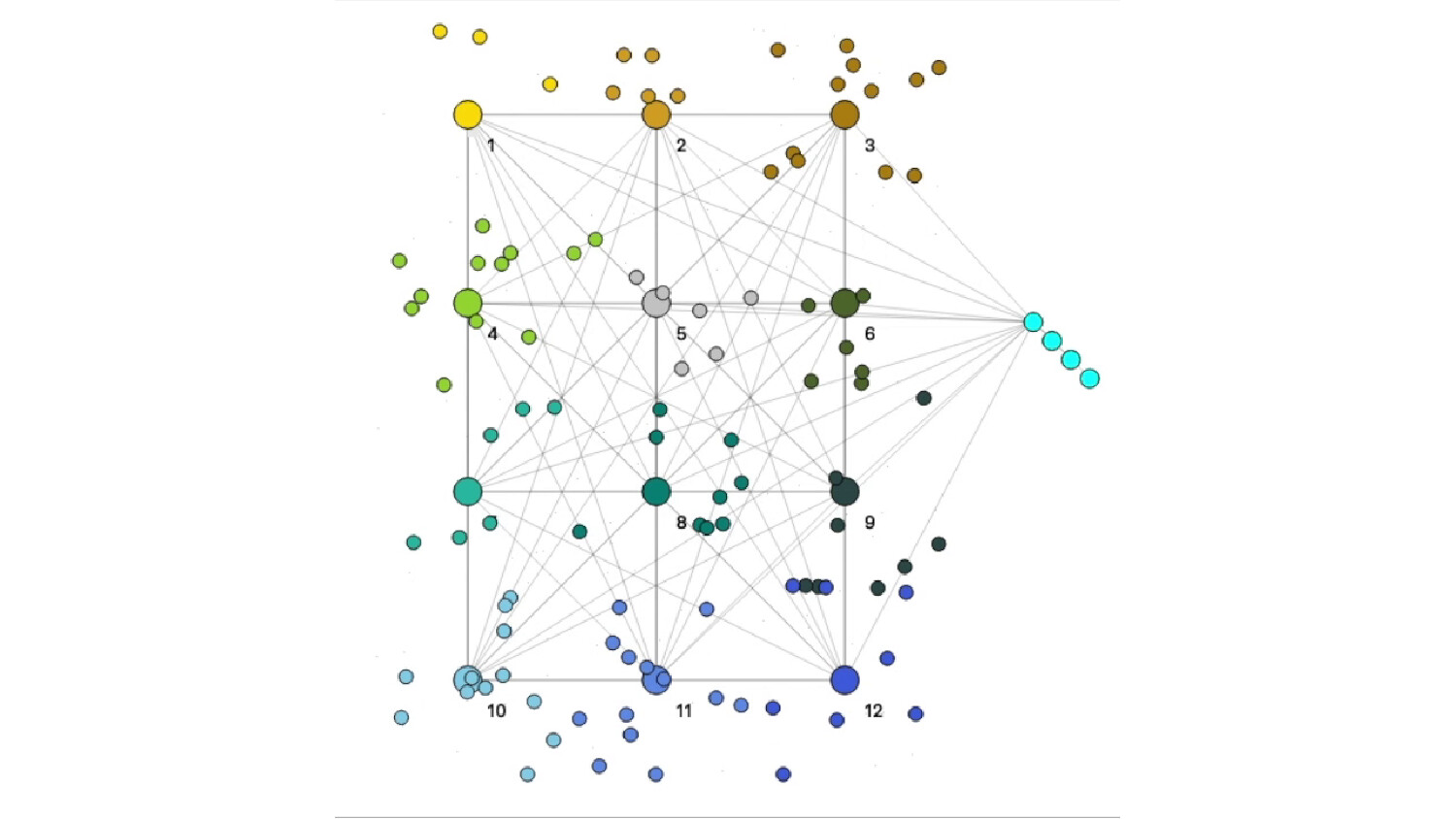

We collected measurement report data from mobile phones in the vicinity of a false base station by creating a simulated environment with 12 base stations in a grid, as shown below.

Figure 2: Animations of mobile phone base station connectivity in a simulated grid with and without a moving false base station

In the figure, the larger colored circles with numbers are base stations and the 200 small circles represent mobile phones currently connected to the base station with the same color.

For the simulation, we let one of the 12 base stations act as a false base station. When acting as a false base station, a base station was configured to not allow handovers and was moved to various positions along the red arrows in the figure. Repeating this for each of the 12 base stations in the grid gave us 12 different datasets, each containing one false base station. The mobile phones were configured with a so-called random walk model and were moved around the grid at a constant pace, changing to a new randomly picked direction every 20 seconds.
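The random walk model for the mobile phones can be sketched as follows. The speed, the one-second time step, the start position, and the lack of any boundary handling at the grid edges are assumptions for illustration; only the constant pace and the change of direction every 20 seconds come from the description above.

```python
import math
import random

def random_walk(start, speed, duration_s, turn_every_s=20, seed=0):
    """Return one (x, y) position per second for a constant-speed random walk."""
    rng = random.Random(seed)
    x, y = start
    heading = rng.uniform(0.0, 2.0 * math.pi)
    path = [(x, y)]
    for second in range(1, duration_s + 1):
        x += speed * math.cos(heading)
        y += speed * math.sin(heading)
        path.append((x, y))
        if second % turn_every_s == 0:
            # Pick a fresh direction; the pace itself never changes.
            heading = rng.uniform(0.0, 2.0 * math.pi)
    return path

# Hypothetical phone walking at 1.5 units per second for one minute.
path = random_walk(start=(0.0, 0.0), speed=1.5, duration_s=60)
print(len(path), path[-1])
```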

At regular intervals, mobile phones send measurement report data to the base station they are connected to. Each of these reports contains the signal strength and quality from base stations that are within range of the mobile phone, in addition to the values for the base station it is connected to. These data points are then sorted according to receiving base station, and one model is trained for the signal strength environment around every base station.
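A minimal sketch of the sorting step, assuming each report is a dictionary with a `serving_cell` ID and a `signal_strength` mapping from cell ID to a measured value. These field names and the numbers are hypothetical and do not reflect the 3GPP report encoding.

```python
from collections import defaultdict

def group_by_serving_cell(reports):
    """Collect each report's measurements under the cell that received it."""
    grouped = defaultdict(list)
    for report in reports:
        grouped[report["serving_cell"]].append(report["signal_strength"])
    return dict(grouped)

# Made-up reports: serving cell plus the cells visible to the phone.
reports = [
    {"serving_cell": 3, "signal_strength": {3: -71.0, 5: -88.0, 9: -95.0}},
    {"serving_cell": 9, "signal_strength": {9: -69.0, 8: -90.0}},
    {"serving_cell": 3, "signal_strength": {3: -74.0, 5: -85.0}},
]

training_sets = group_by_serving_cell(reports)
for cell_id, rows in sorted(training_sets.items()):
    # One novelty detection model would be trained per cell_id on `rows`.
    print(cell_id, len(rows))
```

Each entry in `training_sets` then serves as the training data for the model of one base station.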

In the simulated environment, we always know which base station is the false one. Therefore, we also know exactly which measurements in a record come from the false base station. This presents an excellent opportunity to provide quantitative experimental results in the form of explanation accuracy.

Our experiments were conducted as follows:

- We trained one model for each base station using the data from the mobile phones connected to it.

- For each of these models, the data point features include the signal strength of the model base station and the signal strength of the surrounding base stations visible to those mobile phones.

- As we know the feature corresponding to the imposter base station, whenever that feature is present in a report, we can calculate the precision of the explanations provided by the evaluated novelty detection models.
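One plausible way to turn the last step into a number is sketched below. It assumes each explanation is a mapping from feature (cell ID) to contribution, and counts an explanation as correct when its top-ranked feature is the false base station. This definition and the values are assumptions for illustration, not necessarily the exact metric used in the experiments.

```python
def explanation_precision(explanations, false_cell):
    """Fraction of explanations whose top-ranked feature is the false cell.

    Only explanations for reports that actually contain the false cell's
    feature are considered. Returns None if there are no such reports.
    """
    relevant = [e for e in explanations if false_cell in e]
    if not relevant:
        return None
    hits = sum(1 for e in relevant if max(e, key=e.get) == false_cell)
    return hits / len(relevant)

# Made-up explanations for three detections; cell 9 is the false base station.
explanations = [
    {9: 0.7, 5: 0.2},   # correct: cell 9 ranked first
    {9: 0.1, 6: 0.6},   # incorrect: cell 6 ranked first
    {5: 0.5, 6: 0.3},   # ignored: cell 9 not present in this report
]
precision = explanation_precision(explanations, false_cell=9)
print(precision)
```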

The figure below illustrates a comparison of explanation accuracy for this dataset between our method, ADF Feature Contribution (ADFFC), and other recently published methods for explaining outcomes in tabular novelty detection.

In many cases, explanations can enhance the trustworthiness of the predictions. As in the false base station simulation, explanations can also facilitate subsequent processing and thereby improve system performance. It is a well-established result in machine learning that an ensemble of diverse models can enhance prediction accuracy for the same dataset. In the context of false base station detection, the explanations derived from various base station models can similarly be combined to reinforce the identification of a false base station.

The figure below shows the explanations for data samples that include measurements from base station 9 when it is operating as the false base station. Measurements from base station 9 are present only in the training data for the models of base stations 3, 5, 6, 8, 11, and 12. Therefore, we only present results from these models, color-coded by ID (detecting a false base station with an ID not present in the training data is straightforward). The figure makes it evident that the identification of 9 as the false base station is significantly reinforced by the collective outcomes of different models.

Combined model results

However, for individual models, as seen in the case of 6 and 12 in the figure, the results may not be as definitive. The explanation of the detected novelty in such cases enables the correlation of detections across models and illustrates that the pursuit of transparent model decisions can also contribute to enhancing the accuracy of the detection task itself.
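The correlation of explanations across models can be sketched as a simple sum of per-feature contributions. The contribution values below are invented for illustration and are not experimental results; summation is also just one possible combination rule.

```python
from collections import Counter

def combine_explanations(per_model):
    """Sum feature contributions over models and rank features by the total."""
    total = Counter()
    for explanation in per_model.values():
        total.update(explanation)  # adds contributions feature by feature
    return total.most_common()

# Invented explanations from three base station models (keys are model IDs).
per_model = {
    3: {9: 0.8, 5: 0.1},
    6: {9: 0.4, 5: 0.5},     # on its own, this model points at cell 5
    12: {9: 0.45, 11: 0.5},  # on its own, this model points at cell 11
}

ranking = combine_explanations(per_model)
print(ranking[0][0])  # the feature with the highest combined contribution
```

In this toy case, two of the three models are individually inconclusive, yet the combined ranking still places cell 9 first.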

Given the current rapid pace of automation and the deployment of ML/AI models in telecom networks, it's crucial to focus on the trustworthiness and reliability of these systems. In this blog post, we have shown a use case where XAI not only instills trust through explanation but can also enhance the accuracy of the system. Furthermore, by focusing on the explainability of our novelty detection algorithm, we've discovered its potential for detection as well. The outcome is an effective novelty detection method that delivers explanations alongside detections, all without the need for additional computations.

Exploring novel scoring with ADF - a deep dive

In the ADF, the training process creates leaves, called anomaly leaves, which correspond to regions of the input space not encountered during training. During inference, when a data sample traverses a tree in the forest (ensemble) and lands in one of these leaves, it indicates novelty. The creation of such a leaf during training signifies the absence of training samples within the space defined by the features on the path leading to that leaf, as depicted in the figure above.

For each leaf, we define the explanation as the set of features along the path to the leaf that render it devoid of training samples. Since this explanation is known at the time of training, it can be incorporated into the leaf as part of the training process. When performing novelty detection on a sample, the explanation for the novelty is constructed by aggregating all such explanations from the trees where the sample ends up in an anomaly leaf. The proportion of trees in which this occurs yields a straightforward yet effective detection score. Consequently, this method enables the simultaneous acquisition of both detection results and explanations in a single pass for each sample within each tree.
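The single-pass scoring described above can be sketched with a heavily simplified tree structure. The `Node` class, the feature names, the thresholds, and the hand-built forest are all hypothetical; training of the trees is omitted, and this is not the actual ADF implementation.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

@dataclass
class Node:
    feature: Optional[str] = None   # split feature; None marks a leaf
    threshold: float = 0.0
    left: Optional["Node"] = None   # taken when sample[feature] <= threshold
    right: Optional["Node"] = None
    anomaly: bool = False           # True for an anomaly leaf
    explanation: tuple = ()         # features stored in the leaf at training time

def find_leaf(node, sample):
    """Follow the splits from the root until a leaf is reached."""
    while node.feature is not None:
        node = node.left if sample[node.feature] <= node.threshold else node.right
    return node

def score_and_explain(forest, sample):
    """Return (share of trees hitting an anomaly leaf, aggregated explanation)."""
    votes = Counter()
    hits = 0
    for tree in forest:
        leaf = find_leaf(tree, sample)
        if leaf.anomaly:
            hits += 1
            votes.update(leaf.explanation)  # explanation is read, not computed
    return hits / len(forest), votes

normal = Node()
forest = [
    Node("cell_9", -80.0, normal, Node(anomaly=True, explanation=("cell_9",))),
    Node("cell_5", -70.0,
         Node("cell_9", -85.0, normal,
              Node(anomaly=True, explanation=("cell_5", "cell_9"))),
         normal),
    Node("cell_5", -100.0, Node(anomaly=True, explanation=("cell_5",)), normal),
]

score, votes = score_and_explain(forest, {"cell_5": -90.0, "cell_9": -60.0})
print(score, votes.most_common())
```

The explanation is simply looked up in each anomaly leaf during the same traversal that produces the score, which is why no separate explanation step is needed.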

Final comment

Trustworthiness of AI systems is a hot topic, and an increasing number of important systems rely on AI functionality. Shifting focus from the accuracy of an ML-based system alone to its transparency is an important step in enhancing trust. We have presented a use case where this shift resulted in a solution that explains its outcomes better and also provides higher accuracy with improved efficiency.

