Supporting AI-driven mobile applications with a 6G AI compute continuum

- Extending the emerging 6G network platform with integrated compute and AI services will enable service providers to capture the AI value chain, ensuring security, privacy, regulatory compliance, and application quality of experience.

- Hosting and managing AI models in the network simplifies application deployment, accelerates time-to-market, and creates value across an ecosystem of developers, enterprises, and end-users.

6G will offer services that go beyond communication, extending and expanding the 5G network platform with services that include information, computing, and artificial intelligence (AI). Dynamic device offloading is one such service: a compelling compute service that moves workloads from the device to the network to improve user experience and save device battery.

We showcased this concept in our 6G booth at Mobile World Congress 2024, featuring an environment-aware augmented reality (AR) avatar that communicates on a lightweight head-mounted device and offloads a computationally heavy object detection task.

Differentiated connectivity provides different levels of network performance based on use case, device, or application, rather than best effort for all. Dynamic device offloading extends differentiated connectivity with compute capabilities, thereby adding more value to the device and application ecosystem. It will ensure optimal experience and performance even when situations change or applications move across networks.

Dynamic device offloading is often highlighted in the context of AR and extended reality (XR). Our ongoing research prototypes show that it is equally relevant to most device types and a wide range of applications. Examples we have explored include a smartphone-based smart city app, search and rescue with four-wheeled robots or drones, connected vehicles, and enhanced safety in mobile robot operations.

Offloading and AI

The prototypes described above show that the computationally heaviest tasks, which benefit most from offloading, are typically related to AI inference. Examples include real-time visual object detection, natural language processing, decision making, control, and content generation. Because the offloaded tasks are AI-based, the requirements change significantly.

Unlike traditional workloads, AI typically requires hardware acceleration, for example graphics processing units (GPUs) or tensor processing units (TPUs). AI workloads often generate large volumes of multimodal data and have stringent latency, privacy, and contextual requirements. Generative AI (GenAI) traffic is projected to contribute significantly to future mobile traffic growth. Offloading AI tasks enhances performance but also introduces new requirements for orchestration and data handling.

To meet these demands, differentiated connectivity and computational offloading can be extended with AI model management. This service would include hosting, executing, and optimizing AI models, and it presents an opportunity to monetize the rise of GenAI workloads. Communications service providers (CSPs) can simplify integration and reduce complexity for customers by acting as a single trusted point of contact.

6G AI compute continuum

We call this concept the 6G AI compute continuum: a way to take advantage of the distributed compute and AI capabilities that form the base of mobile networks, from the far-edge to the cloud. It can dynamically decide where to run each AI workload based on that workload's requirements.

CSPs have the potential to use their network infrastructure to offer AI services and tap into new revenue streams. They bring unique strengths such as a distributed network footprint, guaranteed quality of service (QoS), strong security and compliance with national regulations, and real-time network intelligence. These strengths underpin capabilities such as efficient computing, trust, privacy, sovereignty, and access to network and data insights. Building on these value propositions, CSPs can adopt market-specific models, shape ecosystem standards, and therefore deliver localized, compliant, and high-performance AI services.

CSPs have a vast network of assets with which to offer network-integrated computing. Depending on the application requirements (for example, access latency, preferred computing hardware, or data privacy needs), this can be provided through either shared or dedicated compute infrastructure. The resources can be located at different sites: farther out in the network (far-edge) or in more centralized locations closer to the core (near-edge).
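The placement decision described above can be sketched as a filter-then-rank step: discard sites that violate a hard requirement, then pick the cheapest remaining one. This is a minimal illustration, not the continuum's actual orchestration logic. The `Site` and `Workload` types, the three example sites, and all latency and cost figures are hypothetical, and a real orchestrator would also weigh load, mobility, and energy.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Site:
    name: str                # e.g. "far-edge", "near-edge", "cloud"
    latency_ms: float        # typical access latency from the device
    accelerators: frozenset  # hardware available at the site, e.g. {"GPU", "TPU"}
    dedicated: bool          # True if the site offers dedicated (non-shared) compute
    cost_per_hour: float     # illustrative relative cost

@dataclass(frozen=True)
class Workload:
    max_latency_ms: float    # latency budget for the offloaded AI task
    accelerator: str         # preferred computing hardware
    needs_dedicated: bool    # e.g. strict data-privacy requirements

def place(workload: Workload, sites: list) -> Optional[Site]:
    """Return the cheapest site that meets every hard requirement, or None."""
    feasible = [
        s for s in sites
        if s.latency_ms <= workload.max_latency_ms
        and workload.accelerator in s.accelerators
        and (s.dedicated or not workload.needs_dedicated)
    ]
    return min(feasible, key=lambda s: s.cost_per_hour, default=None)

# Hypothetical sites; every number here is illustrative.
SITES = [
    Site("far-edge", 5.0, frozenset({"GPU"}), True, 4.0),
    Site("near-edge", 15.0, frozenset({"GPU", "TPU"}), False, 2.5),
    Site("cloud", 60.0, frozenset({"GPU", "TPU"}), False, 1.0),
]

print(place(Workload(20.0, "GPU", False), SITES).name)   # near-edge
print(place(Workload(10.0, "GPU", True), SITES).name)    # far-edge
print(place(Workload(200.0, "TPU", False), SITES).name)  # cloud
```

Ranking feasible sites by cost means the far-edge site is chosen only when a workload's latency or privacy needs rule out the cheaper, more centralized options.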

High-potential use cases

We have identified a set of high-potential use cases for CSPs in the 6G AI compute continuum.

Industrial use cases:

- Transportation and logistics: Supporting connected vehicles with real-time edge decision support and AI-based situational predictions.

- Manufacturing and industrial settings: Real-time robotics, AI-driven quality control, predictive maintenance, and logistics.

- Drones and unmanned aerial vehicles (UAVs) in search and rescue: Utilizing low-latency edge AI and reliable connectivity for mission-critical operations.

- Smart cities and grid stabilization: Real-time analytics and rapid response using secure local processing and anomaly detection.

In these cases, CSPs add value by offering easy integration, acting as a single point of contact, providing cost efficiency, and combining managed connectivity and AI hosting services.

Consumer use case:

- Smart glasses: They enable list management, intelligent reminders, real-time product information, visual search, and navigation. AI tasks are dynamically offloaded across devices, edge, and cloud to optimize latency, privacy, and cost. CSPs utilize the network–cloud visibility for secure orchestration and intelligent task placement, allowing immediate on-device or regional processing when needed.

The following AI shopping assistant (Figure 1) is an example of an AI application transforming consumer experiences.

Figure 1: Shopping assistant use-case and ecosystem involvement

The AI shopping assistant showcases how CSP platforms support value exchange among stakeholders. By acting as platform providers, CSPs enable a broader ecosystem where different stakeholders can interact and co-create value (see Figure 2).

Figure 2: Shopping assistant ecosystem involvement

For AI developers and application service providers (ASPs), the platform offers access to distributed compute resources optimized for AI workloads and lets them enhance their applications with real-time network intelligence exposed through standardized APIs. Enterprises gain secure, sovereign edge solutions, especially in regulated industries. End-users benefit from personalized, real-time AI services. AI model providers can use the CSP edge as a compliant, distributed network for foundational and specialized models.

The value proposition of the AI compute continuum

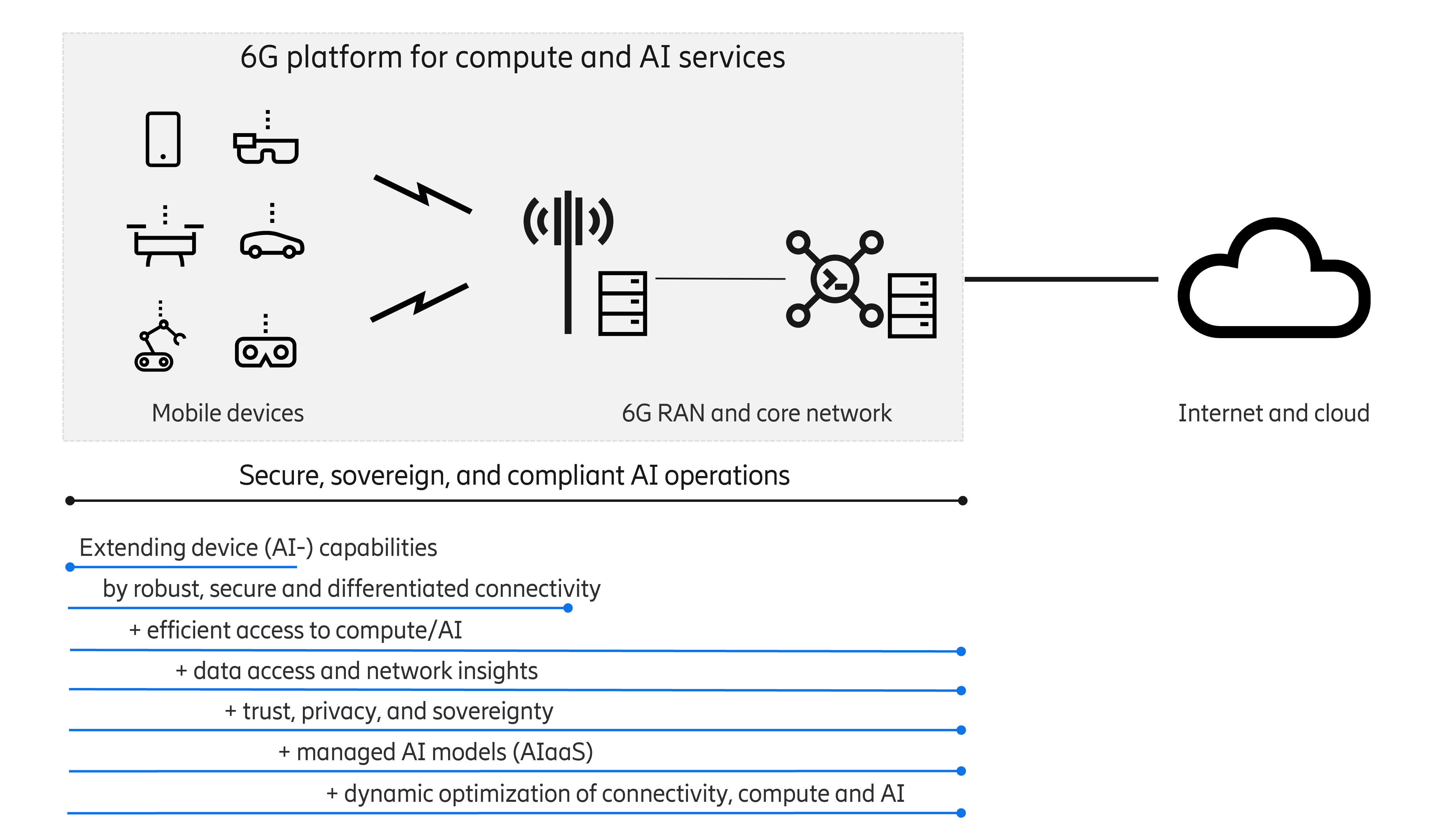

For CSPs, taking a significant role in the AI compute continuum makes it possible to unlock new revenue streams and a unique value proposition beyond usage fees. Figure 3 illustrates the possible role and value proposition of a CSP in the AI compute continuum.

Figure 3: 6G AI compute continuum value proposition

The figure starts with the proven benefits of extending device capabilities by offloading selected parts of mobile applications. Offloading reduces on-device energy consumption and heat generation, which enables slimmer devices or longer battery life. Beyond these physical benefits, the application experience can also become smoother thanks to the superior computing capacity at the remote site.
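The device-side benefit can be made concrete with a common textbook offloading model (not necessarily the one used in the prototypes): running a task locally costs compute time at compute power, while offloading costs upload time at radio power plus waiting time at idle power. The `DeviceProfile` values, server speed, and round-trip time below are illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class DeviceProfile:
    cpu_cycles_per_s: float = 2e9   # effective on-device compute rate
    compute_power_w: float = 2.0    # power drawn while computing locally
    tx_power_w: float = 1.0         # radio power while uploading input data
    idle_power_w: float = 0.1       # power while waiting for the remote result

def local_cost(cycles, dev):
    """(latency in s, device energy in J) when the task runs on the device."""
    t = cycles / dev.cpu_cycles_per_s
    return t, dev.compute_power_w * t

def remote_cost(cycles, data_bits, uplink_bps, server_cycles_per_s, rtt_s, dev):
    """(latency in s, device energy in J) when the task is offloaded."""
    t_tx = data_bits / uplink_bps
    t_wait = cycles / server_cycles_per_s + rtt_s
    return t_tx + t_wait, dev.tx_power_w * t_tx + dev.idle_power_w * t_wait

def should_offload(cycles, data_bits, uplink_bps, deadline_s,
                   dev=DeviceProfile(), server_cycles_per_s=2e10, rtt_s=0.01):
    t_loc, e_loc = local_cost(cycles, dev)
    t_rem, e_rem = remote_cost(cycles, data_bits, uplink_bps,
                               server_cycles_per_s, rtt_s, dev)
    if t_rem > deadline_s:
        return False        # offloading cannot meet the deadline; stay local
    if t_loc > deadline_s:
        return True         # only offloading meets the deadline
    return e_rem < e_loc    # both meet it: pick the lower device energy

print(should_offload(2e9, 2e6, 1e8, deadline_s=0.5))  # True
print(should_offload(2e9, 2e6, 1e6, deadline_s=5.0))  # False
```

With a 100 Mbit/s uplink, only the remote site meets the 0.5 s deadline, so the task is offloaded; with a 1 Mbit/s uplink, the upload alone takes 2 s and costs slightly more device energy than computing locally, so the sketch keeps the task on the device.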

How does the technology work within networks?

Managing AI workloads within the network allows direct access to network data and insights. Relevant network data for mobile applications includes connectivity maps, predictions, and data from emerging 6G information services, such as positioning and sensing data. These resources support use cases such as immersive AR/XR experiences or autonomously navigating robots and drones.

Furthermore, fresh and detailed network session metrics can help optimize application quality of experience while safeguarding privacy. This can be achieved with split learning methods based on vertical federated learning. This approach requires a distributed model architecture with nodes embedded in the network, but it removes the need to share data, models, or features between the distributed modules of the mobile application.
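A minimal vertically partitioned split-learning sketch, assuming linear bottom models and a squared-error loss: the application holds app-side features and the quality-of-experience label, a network-embedded node holds network session metrics, and only per-sample partial outputs (forward pass) and their gradient (backward pass) cross the boundary. The feature meanings, synthetic data, and learning rate are illustrative assumptions; real deployments would use deeper models and additional protection for the exchanged values.

```python
import random

class BottomModel:
    """Linear bottom model whose weights and input features stay with one party."""
    def __init__(self, n_features, lr):
        self.w = [0.0] * n_features
        self.lr = lr

    def forward(self, x):
        return sum(wi * xi for wi, xi in zip(self.w, x))

    def backward(self, x, grad_z):
        # Only grad_z (a scalar) arrives from the top model; x never leaves this party.
        self.w = [wi - self.lr * grad_z * xi for wi, xi in zip(self.w, x)]

def train(x_app, x_net, y, epochs=200, lr=0.05):
    app, net = BottomModel(len(x_app[0]), lr), BottomModel(len(x_net[0]), lr)
    bias, losses = 0.0, []
    for _ in range(epochs):
        total = 0.0
        for xa, xn, target in zip(x_app, x_net, y):
            z_app, z_net = app.forward(xa), net.forward(xn)  # only these scalars are exchanged
            err = z_app + z_net + bias - target               # top model: sum of parts + bias
            total += err * err
            grad = 2.0 * err                                  # d(err^2)/dz for either part
            app.backward(xa, grad)
            net.backward(xn, grad)
            bias -= lr * grad
        losses.append(total / len(y))                         # mean squared error per epoch
    return app, net, bias, losses

# Synthetic data: QoE depends on two app-side features and one network-side feature.
random.seed(0)
x_app = [[random.uniform(-1, 1), random.uniform(-1, 1)] for _ in range(50)]
x_net = [[random.uniform(-1, 1)] for _ in range(50)]
y = [1.5 * a[0] - 0.5 * a[1] + 2.0 * n[0] + 0.3 for a, n in zip(x_app, x_net)]

app, net, bias, losses = train(x_app, x_net, y)
print(losses[0], losses[-1])
```

Neither party ever sees the other's features or weights, yet the jointly trained model recovers the relationship that spans both feature sets.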

Trust, privacy, and sovereignty

Combining AI compute infrastructure with connectivity gives the advantage of a single point of contact. Most enterprise customers already do business with service providers; for them, this approach offers a trustworthy, secure, and privacy-preserving solution.

One promising strategy is to extend the telecom-grade security and identity mechanisms currently used for mobile device authentication and data traffic encryption to the AI service layer. This approach lets service providers use existing SIM or eSIM credentials, such as international mobile subscriber identity (IMSI) certificates or the private keys contained within them, not only to authenticate mobile devices but also to encrypt data traffic end-to-end, without requiring separate authentication or encryption mechanisms.
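The idea of reusing an existing subscription credential at the AI service layer can be sketched with HKDF (RFC 5869): the device and the AI service each derive the same per-service key from a shared anchor key and use it to authenticate requests. This is a hypothetical illustration. The anchor key, the `ai-service:` label, and the helper names are assumptions. 3GPP defines its own mechanism for application keys based on 3GPP credentials (AKMA, TS 33.535) with a different key-derivation function, and end-to-end encryption of the traffic itself is not shown.

```python
import hashlib
import hmac

def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256: extract a pseudorandom key, then expand it."""
    if length > 255 * 32:
        raise ValueError("HKDF-SHA256 output is limited to 8160 bytes")
    prk = hmac.new(salt or bytes(32), ikm, hashlib.sha256).digest()  # extract
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                                         # expand
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]

def ai_service_key(anchor_key: bytes, service_id: str) -> bytes:
    """Hypothetical per-AI-service key bound to a service identifier."""
    return hkdf_sha256(anchor_key, salt=b"", info=b"ai-service:" + service_id.encode())

def tag(key: bytes, payload: bytes) -> bytes:
    return hmac.new(key, payload, hashlib.sha256).digest()

def verify(key: bytes, payload: bytes, mac: bytes) -> bool:
    return hmac.compare_digest(tag(key, payload), mac)  # constant-time comparison

# Assumption: both ends already share this anchor key after SIM-based authentication.
anchor = bytes(range(32))  # placeholder value, not a real credential
k_device = ai_service_key(anchor, "object-detection")
k_service = ai_service_key(anchor, "object-detection")
request = b'{"frame_id": 42}'
print(verify(k_service, request, tag(k_device, request)))  # True
```

Binding the service identifier into the derivation means a key leaked from one AI service cannot be used to authenticate to another.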

From an enterprise perspective, a single point of contact simplifies vendor management and reduces the cost of integration compared to traditional approaches.

Another aspect that benefits from these managed services is regulatory compliance. Telecom-grade systems already include audit trails, monitoring, and reporting mechanisms, which provide the transparency regulators need to monitor relevant aspects of mobile network operations. Service providers can offer similar monitoring tools to enterprise customers from regulated industries, such as government or finance. Reusing these built-in capabilities for their applications helps these customers meet their own regulatory requirements without spending resources on developing such monitoring tools.

For example, consider an automotive manufacturer or fleet manager with many connected vehicles that access remote AI functionality. This could be for model-controlled vehicle operation or a chatbot that allows the vehicle and its occupants to converse with each other. These models can be developed securely within operator premises. Service providers can ensure compliance by deploying these models in different regions, near the vehicles, as required by local regulations. They can encrypt traffic using existing credentials already included in the enterprise’s mobile subscription and manage the service end-to-end to provide QoS guarantees.

From an enterprise perspective, this means faster time-to-market as well as enhanced security and trust. The application owner does not have to engage multiple vendors, such as hyperscalers and data transport providers, and therefore avoids the cost of integrating the services these vendors provide. Moreover, as the fleet grows or new, more capable AI models are introduced, the service provider dynamically allocates computing resources and onboards the new vehicles, hiding this complexity from the enterprise.
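The regional deployment constraint in the connected-vehicle example can be sketched as choosing the nearest edge site whose jurisdiction is allowed to process a vehicle's data. The site list, coordinates, and residency rules are hypothetical and do not reflect any actual regulation; a real service would also consider latency, capacity, and QoS.

```python
import math
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class EdgeSite:
    name: str
    jurisdiction: str
    lat: float
    lon: float

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points on Earth, in kilometers."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi, dlmb = phi2 - phi1, math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * 6371.0 * math.asin(math.sqrt(a))

def nearest_compliant_site(lat, lon, vehicle_jurisdiction, sites, allowed) -> Optional[EdgeSite]:
    """Closest site whose jurisdiction may process this vehicle's data, or None."""
    ok = allowed.get(vehicle_jurisdiction, {vehicle_jurisdiction})  # default: stay in-country
    candidates = [s for s in sites if s.jurisdiction in ok]
    return min(candidates, key=lambda s: haversine_km(lat, lon, s.lat, s.lon), default=None)

EDGE_SITES = [
    EdgeSite("frankfurt", "DE", 50.11, 8.68),
    EdgeSite("strasbourg", "FR", 48.58, 7.75),
    EdgeSite("zurich", "CH", 47.37, 8.54),
]
# Hypothetical residency rules: where each jurisdiction's vehicle data may be processed.
ALLOWED = {"DE": {"DE"}, "FR": {"FR", "DE"}}

# A vehicle in Kehl, just across the Rhine from Strasbourg.
print(nearest_compliant_site(48.57, 7.81, "DE", EDGE_SITES, ALLOWED).name)  # frankfurt
print(nearest_compliant_site(48.57, 7.81, "FR", EDGE_SITES, ALLOWED).name)  # strasbourg
```

The geographically closest site is not always the one a vehicle's data may use, which is why the compliance filter runs before the distance ranking.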

Managed connectivity plus AI model service bundle

Another advantage of service providers managing both the compute or AI domain and network connectivity is the ability to jointly optimize resources, something that would be challenging in a fragmented approach. This is because mobile network service providers and hyperscalers are unwilling to share the operational data and key performance indicators (KPIs) required for such optimization, nor are they likely to grant a third-party authority the control necessary to orchestrate across both domains. For example:

- AI models can be dynamically reallocated based on traffic, mobility patterns, and user equipment (UE) requirements, such as priority, latency, and throughput. This reallocation requires knowledge from the mobile network domain to predict where the devices using these models will be.

- Conversely, the AI domain can help optimize network behavior. For example, an AI model hosting infrastructure can detect usage surges for specific models and inform the network so that it allocates more bandwidth based on priority. This is particularly relevant for GenAI models, such as multimodal large language models, which generate large volumes of heterogeneous data.
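The first example above, reallocating models according to predicted device locations, can be sketched with a first-order Markov mobility model: estimate cell-to-cell transition probabilities from observed trajectories, then compute the expected, priority-weighted number of model users per edge site in the next interval. The Markov model, cell and site names, and priority weights are illustrative assumptions, not the method used by any particular network.

```python
from collections import Counter, defaultdict

def transition_probs(trajectories):
    """Estimate P(next cell | current cell) from observed cell sequences."""
    counts = defaultdict(Counter)
    for seq in trajectories:
        for cur, nxt in zip(seq, seq[1:]):
            counts[cur][nxt] += 1
    probs = {}
    for cur, nxt_counts in counts.items():
        total = sum(nxt_counts.values())
        probs[cur] = {nxt: n / total for nxt, n in nxt_counts.items()}
    return probs

def predicted_site_demand(current_cell, probs, cell_to_site, priority_weight=None):
    """Expected, priority-weighted number of model users per edge site next interval."""
    demand = Counter()
    for ue, cell in current_cell.items():
        w = 1.0 if priority_weight is None else priority_weight.get(ue, 1.0)
        for nxt, p in probs.get(cell, {cell: 1.0}).items():  # unseen cell: assume UE stays
            demand[cell_to_site[nxt]] += w * p
    return demand

def best_site(demand):
    """Edge site where the model should be placed (highest expected demand)."""
    return max(demand, key=demand.get)

probs = transition_probs([["c1", "c2", "c3"], ["c1", "c2", "c2"], ["c2", "c3"]])
cell_to_site = {"c1": "edge-A", "c2": "edge-A", "c3": "edge-B"}
ues = {"ue1": "c1", "ue2": "c2", "ue3": "c2"}

print(best_site(predicted_site_demand(ues, probs, cell_to_site)))  # edge-A
# Giving ue2 and ue3 higher priority shifts the best placement toward where they are heading.
print(best_site(predicted_site_demand(ues, probs, cell_to_site, {"ue2": 2.0, "ue3": 2.0})))  # edge-B
```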

We can also consider more advanced examples of joint optimization of network and cloud resources, leveraging each other’s information. In such cases, mutual exchange of information may lead to better performance than working in isolation. Consider the following scenarios:

- For events such as a concert or a football match, the AI model hosting infrastructure can use network domain information on device trajectories and service profiles to position larger and historically more heavily used AI models closer to the edge nodes that are likely to experience heavy load. Simultaneously, the network could prepare network slices and/or policy rules and allocate additional downlink bandwidth. All of this could be done proactively, before the first devices arrive.

- When multiple mobile devices access the same AI model, memory constraints on the cloud infrastructure mean that not all of them can be served by the same instance of the model. At least some devices must then be served from a more heavily quantized model instance (quantization converts AI model parameters to a lower-precision format). The quantized model trades output quality for a smaller memory footprint, because its weights are stored in lower-precision formats. Using information from the mobile network, such as subscription information from the user data management node and policy data from the policy control function, the cloud infrastructure can establish the relative importance of the devices based on priority. Based on this information, it can decide which devices are served by the full-precision instance and which by the quantized one.
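The quantization scenario can be sketched as a priority-ordered assignment. The helper `weight_memory_gb` shows why quantization saves memory: a 7-billion-parameter model needs 14 GB for 16-bit weights but 3.5 GB for 4-bit weights, ignoring activations and caches. The slot counts stand in for how many concurrent sessions each instance's memory budget allows, and the convention that a lower value means higher priority is an assumption; in practice the priority would be derived from the subscription and policy data mentioned above.

```python
from dataclasses import dataclass

def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Memory for the model weights alone (ignores activations and KV cache)."""
    return n_params * bits_per_weight / 8 / 1e9

@dataclass(frozen=True)
class Session:
    ue_id: str
    priority: int  # assumption: lower value = higher priority

def assign_instances(sessions, full_precision_slots, quantized_slots):
    """Serve the highest-priority sessions from the full-precision instance."""
    ranked = sorted(sessions, key=lambda s: (s.priority, s.ue_id))  # ue_id breaks ties
    cut1 = full_precision_slots
    cut2 = full_precision_slots + quantized_slots
    return {
        "full_precision": [s.ue_id for s in ranked[:cut1]],
        "quantized": [s.ue_id for s in ranked[cut1:cut2]],
        "unserved": [s.ue_id for s in ranked[cut2:]],
    }

print(weight_memory_gb(7e9, 16), weight_memory_gb(7e9, 4))  # 14.0 3.5

sessions = [Session("ue1", 1), Session("ue2", 3), Session("ue3", 2), Session("ue4", 3)]
print(assign_instances(sessions, full_precision_slots=1, quantized_slots=2))
# {'full_precision': ['ue1'], 'quantized': ['ue3', 'ue2'], 'unserved': ['ue4']}
```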

Figure 4 illustrates an example of an architecture where information is exchanged across domains, that is, the cloud domain management shares information with the network domain operations support system (OSS).

Figure 4: Cross-domain information exchange

In the cloud domain, cloud management functions can migrate or deploy AI models of varying complexity closer to or farther from the radio edge. Similarly, in the network domain, the OSS can configure policies, network slices, and radio access network (RAN) parameters.
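The two-way exchange in Figure 4 can be sketched as two message handlers: the network domain forecasts load at a site, cloud management picks a model size and reports the placement, and the OSS reserves downlink capacity for it. All class names, fields, thresholds, and the slice naming scheme are hypothetical; they are not the interfaces of the architecture in Figure 4 or of any standardized API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelPlacementEvent:   # cloud domain management -> network domain OSS
    model_id: str
    site: str
    expected_sessions: int
    downlink_mbps_per_session: float

@dataclass(frozen=True)
class NetworkAction:         # what the OSS configures in response
    site: str
    slice_id: str
    reserved_downlink_mbps: float

class Oss:
    """Network domain: turns placement events into slice and bandwidth reservations."""
    def __init__(self, spare_downlink_mbps):
        self.spare = dict(spare_downlink_mbps)  # spare downlink capacity per site

    def on_model_placement(self, ev: ModelPlacementEvent) -> NetworkAction:
        needed = ev.expected_sessions * ev.downlink_mbps_per_session
        granted = min(needed, self.spare.get(ev.site, 0.0))  # cannot exceed spare capacity
        self.spare[ev.site] = self.spare.get(ev.site, 0.0) - granted
        return NetworkAction(ev.site, f"ai-{ev.model_id}", granted)

class CloudManager:
    """Cloud domain: picks a model size from a network load forecast, then notifies the OSS."""
    def __init__(self, oss: Oss, large_model_threshold: int, mbps_per_session: float):
        self.oss = oss
        self.threshold = large_model_threshold
        self.mbps = mbps_per_session

    def on_load_forecast(self, site: str, expected_sessions: int) -> NetworkAction:
        model = "llm-large" if expected_sessions >= self.threshold else "llm-small"
        ev = ModelPlacementEvent(model, site, expected_sessions, self.mbps)
        return self.oss.on_model_placement(ev)

oss = Oss({"edge-A": 500.0})
cloud = CloudManager(oss, large_model_threshold=100, mbps_per_session=2.0)
print(cloud.on_load_forecast("edge-A", 150))
# NetworkAction(site='edge-A', slice_id='ai-llm-large', reserved_downlink_mbps=300.0)
```

Neither domain exposes its internal state here: the network shares only a load forecast, and the cloud shares only where it placed which model and how much downlink it expects to need.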

In a nutshell

As 6G advances toward more robust and differentiated connectivity, CSPs can use their distributed core and RAN infrastructure to offer efficient AI compute capabilities. Integrating AI hosting with tailored, secure connectivity allows CSPs to offer a differentiated value proposition that goes beyond connectivity. Managing AI workloads directly within the network provides access to real-time network insights that can enhance both application performance and intelligent service orchestration.

This combination of secure connectivity, localized AI hosting, and direct access to network intelligence allows service providers to deliver fully managed services that guarantee latency and QoS and ensure full regulatory compliance with national directives. These services enable new business models and revenue streams. By acting as a single point of contact, service providers also reduce complexity for their customers, accelerate time-to-market, and create value across an ecosystem of developers, enterprises, and end-users.


At the Ericsson Blog, we provide insight to make complex ideas on technology, innovation and business simple.