Securing AI in mobile networks: 10 key considerations for telcos

- Artificial Intelligence will drive innovative use cases, service enhancements, and operational efficiencies in mobile telecommunication networks.

- The evolution to Agentic AI introduces an expanded threat surface and requires ten new best practice approaches to mitigate risk.

AI in mobile networks

Artificial Intelligence (AI) is a transformative technology that will drive innovative use cases, service enhancements, and operational efficiencies in mobile telecommunication networks. AI in mobile networks will enable intent-driven autonomous networks, facilitate advanced network optimization, provide dynamic resource allocation and predictive maintenance, and deliver differentiated connectivity. It is expected that AI, and specifically Agentic AI, will be a foundational technology for 5G Advanced and 6G systems.

The evolution of AI introduces an expanded threat surface with the shift from static to dynamic risks from autonomous Multi-Agent systems. It is the responsibility of telecom stakeholders to build a strong security posture for AI systems by implementing the AI-specific security controls described below. A prime example is Ericsson’s launch of Agentic rApp as a Service (aaS) with built-in capabilities for secure AI, through which Ericsson maintains its security posture to support national security by securing critical infrastructure today [1] and preparing to defend against the evolving threat landscape [2].

Secure AI for mobile networks

AI data and models directly influence network behavior and service performance. Compromise of an AI system could impact network availability, performance, regulatory compliance, data privacy, and trustworthiness. It is crucial to secure AI systems within the mobile network. The United States National Institute of Standards and Technology (NIST) has identified security as a key characteristic of a Trustworthy AI System [3].

AI enhances the capabilities of mobile telecommunication networks, but it also introduces three distinct considerations for cybersecurity:

- AI is a target for cyber threat actors who seek to influence AI outcomes or steal information from networks. US NIST refers to this as the “Secure” Focus Area for securing the AI system [4].

- AI is a tool to enhance security for mobile telecommunication networks. US NIST refers to this as the “Defend” Focus Area for AI-enabled cyber defenses [4].

- AI is a tool used by cyber threat actors to amplify the efficacy of cyberattacks. US NIST refers to this as the “Thwart” Focus Area for protecting against AI-enabled cyberattacks [4].

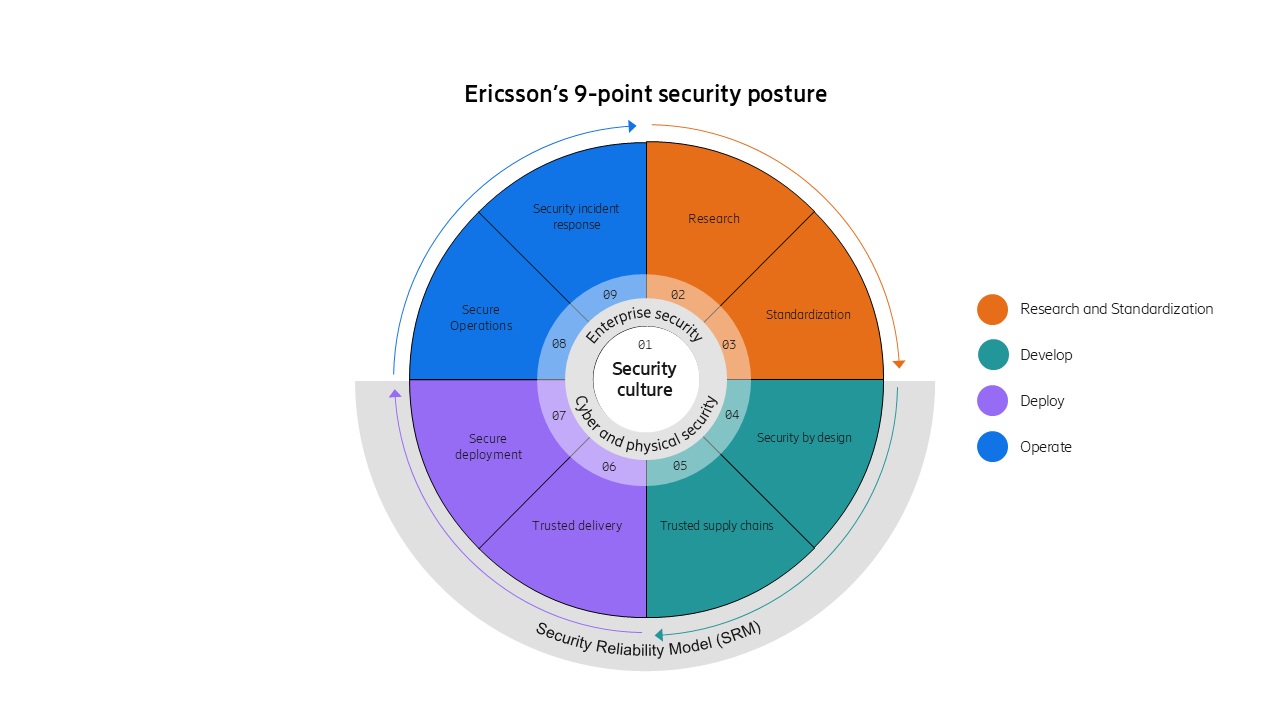

A strong security posture for securing AI is based upon the four-layer trust stack defined by OECD [5], with the Standardization, Development, Deployment, and Operations stages. Ericsson has adapted this model in its processes by implementing a 9-point security posture across these four stages, as shown in Figure 1. Securing AI is also a commitment to these nine points throughout the AI lifecycle.

Figure 1. Ericsson 9-point security posture covering the full trust stack for telecom security, based on the architecture of communication networks published by the OECD (Organisation for Economic Co-operation and Development)

Agentic AI expanded attack surface

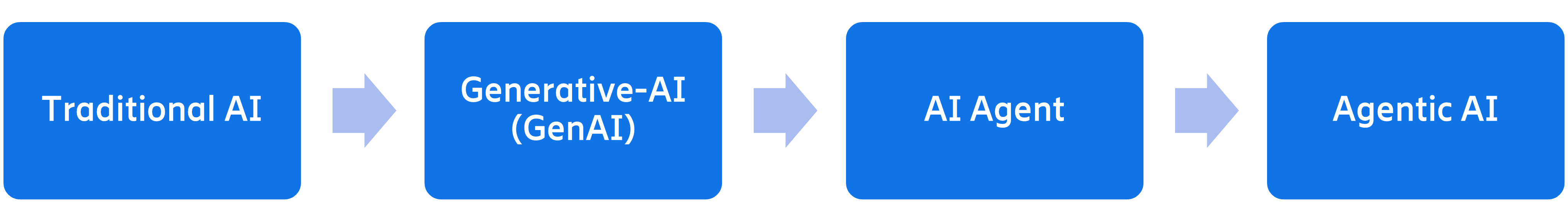

AI has evolved from Traditional Predictive AI (PredAI) to Generative AI (GenAI) to AI Agents to Agentic AI, as shown in Figure 2. PredAI is the traditional AI that analyzes data and performs statistical analysis to identify trends, offer forecasts, and predict future outcomes. GenAI takes input in the form of unstructured data, text, images, audio, or video and generates new content in the form of text, images, audio, and video. AI Agents have personas that can autonomously decide and act with perception, reasoning, decision-making, and planning capabilities. An AI Agent may call external tools through APIs to enhance its reasoning capability and may also have a reflection capability to update its perception and reasoning. GenAI and AI Agents may use Large Language Models (LLMs), which apply deep-learning algorithms trained on large textual datasets to learn patterns.

Agentic AI is a system of multiple AI agents collaborating on complex tasks to achieve a goal in highly dynamic settings. Agentic AI is designed to autonomously achieve predetermined objectives and leverage advanced technologies to interpret intents and execute tasks. Agentic AI is the leap from humans guided by systems to systems guided by humans. In telecom networks, Agentic AI will facilitate network operations and enhance the user experience by enabling intent-based networking, autonomous network management, dynamic network slicing, and personalization of customer experience.

Figure 2. Evolution to Agentic AI, starting from Traditional AI, which predicts from existing static data, to Generative AI, which creates new content based on patterns, to AI Agents, which are autonomous software systems, and finally to Agentic AI, with multiple agents collaborating on multiple complex tasks.

The evolution of Agentic AI introduces an expanded threat surface with a shift from static to dynamic risks. AI Agents are the new “Insiders”, with a defined persona that decides and acts autonomously. AI Agents inherit the risks of PredAI and GenAI, including Evasion, Poisoning, Inference, Privacy, and Supply Chain attacks, discussed in the next section, and introduce new threats inherent to Multi-Agent systems that can propagate faults and compound exploits undetected by humans due to their speed and scale. The continuous communication between AI Agents and external tools can be exploited for confidentiality, integrity, and availability attacks. AI Agents also introduce new supply chain risks due to the dynamically sourced components introduced at run time.

Key considerations and best practices for operators introducing Agentic AI applications

The Open Worldwide Application Security Project (OWASP), in its December 2025 publication “Top 10 for Agentic Applications for 2026” [6], provides excellent guidance for mitigating threats and vulnerabilities to Agentic AI systems. In its discussion of the top 10 threats to Agentic AI applications, OWASP also provides recommendations for best security practices:

- Implement Least Agency and Least Privilege: OWASP has defined Least Agency as “the practice of constraining an AI Agent’s autonomy and permissions to the minimum required to perform its intended tasks.” Least Agency addresses the unique dynamic and autonomous nature of AI Agents for authentication and authorization.

- Avoid unnecessary autonomy: Deploying agentic behavior where it is not needed adds no value, quietly expands the attack surface, and can turn minor issues into system-wide failures.

- Implement a zero trust security framework: Decentralized architecture, varying autonomy, external tools, and uneven trust make perimeter-based security models ineffective.

- Secure agent channels: Use end-to-end encryption with per-agent credentials and mutual authentication.

- Prevent automatic re-ingestion of an agent’s own generated outputs into trusted memory to avoid self-reinforcing contamination or “bootstrap poisoning.”

- Monitor AI Agent activities: Monitoring and visibility are foundational to achieving a strong security posture in operations.

- Require human approval for high-impact or goal-changing actions.

- Sign and attest manifests, prompts, and tool definitions.

- Require and operationalize SBOMs and AIBOMs.

- Incorporate AI Agents into the established insider threat program.
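Two of these practices, Least Agency/Least Privilege and human approval for high-impact actions, can be combined into a single authorization check placed in front of every tool call. The following is a minimal sketch only: the `AgentPolicy` structure, the `authorize` function, and the tool names are hypothetical illustrations, not an OWASP schema or an Ericsson interface.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentPolicy:
    """Per-agent grants (hypothetical structure, for illustration only)."""
    allowed_tools: frozenset      # tools the agent may call at all
    high_impact_tools: frozenset  # subset that needs a named human approver


def authorize(policy: AgentPolicy, tool: str, approved_by: Optional[str] = None) -> str:
    """Return 'allow', 'deny', or 'needs_approval' for a single tool call."""
    # Least Agency / Least Privilege: anything not explicitly granted is denied.
    if tool not in policy.allowed_tools:
        return "deny"
    # Human approval: high-impact actions wait until an approver is recorded.
    if tool in policy.high_impact_tools and not approved_by:
        return "needs_approval"
    return "allow"


# Hypothetical agent that may read KPIs freely but needs sign-off
# before changing cell parameters.
optimizer = AgentPolicy(
    allowed_tools=frozenset({"read_kpis", "set_cell_parameters"}),
    high_impact_tools=frozenset({"set_cell_parameters"}),
)

print(authorize(optimizer, "read_kpis"))
print(authorize(optimizer, "set_cell_parameters"))
print(authorize(optimizer, "set_cell_parameters", approved_by="noc-engineer"))
print(authorize(optimizer, "delete_slice"))
```

The default-deny branch comes first on purpose: a tool that was never granted is rejected even if someone supplies an approver, so approval can narrow risk but never widen an agent's permissions.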

AI risk management and mitigations

Secure AI systems, including Agentic AI, implement security controls to mitigate attacks targeting the AI data and models. The selected controls should provide confidentiality, integrity, availability, authentication, and authorization protections, which are the pillars of cybersecurity. Security controls are identified through a sound security process that starts with asset identification, continues with threat analysis, and then implements continuous risk assessment.

The NIST AI Risk Management Framework (RMF) [3] provides helpful guidelines and a process for AI risk assessment that can be applied to AI in telecommunications networks. The process is built upon a foundation of Governance and Management of risk based upon measurement, prioritization, and impact across the entire AI development and operational lifecycle. At maturity, the Measure, Manage, and Map functions integrate into an iterative process. The NIST Cyber AI Profile [4] applies the NIST Cybersecurity Framework for risk management across Govern, Identify, Protect, Detect, Respond, and Recover.

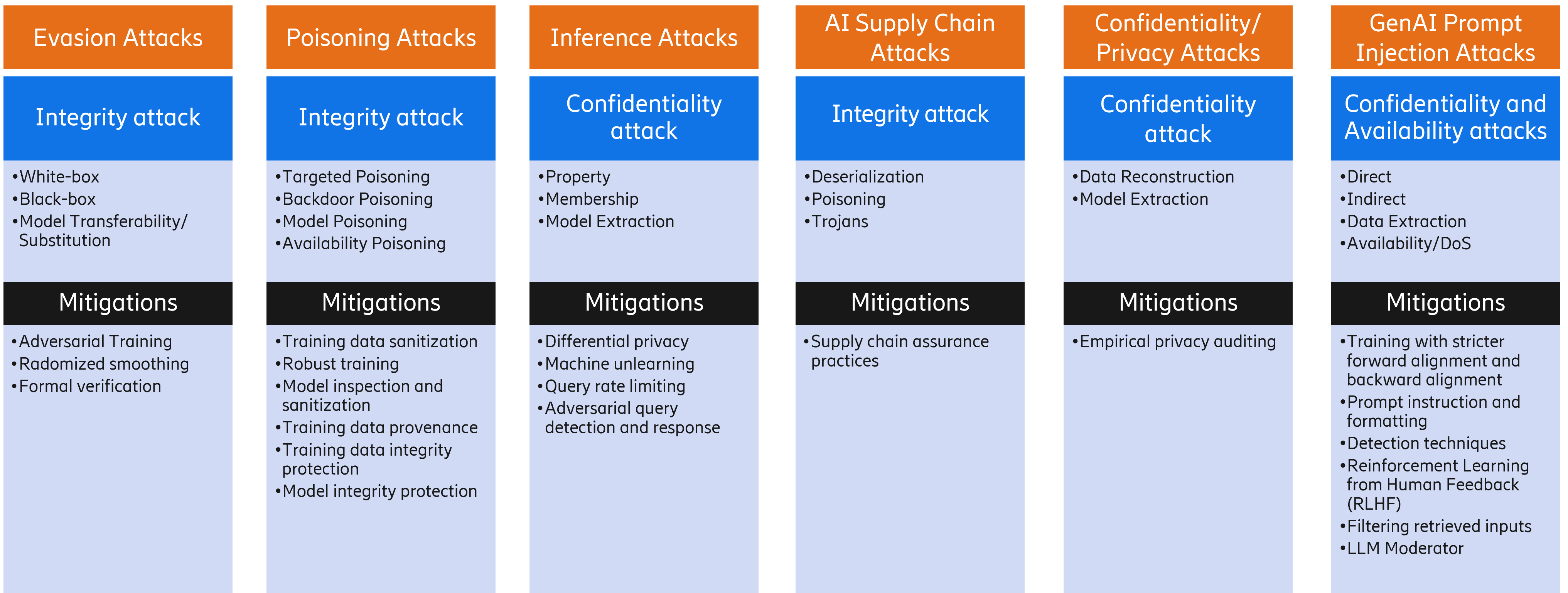

Types of AI-specific attacks are Evasion, Poisoning, Inference, Supply Chain, Privacy, and Prompt Injection attacks. These attacks map to the traditional Confidentiality/Integrity/Availability (CIA) cybersecurity paradigm.

- Evasion attacks are attacks on integrity that modify the behavior of AI/ML models through specially crafted inputs.

- Poisoning attacks are attacks on integrity that contaminate training datasets or model parameters to insert backdoors or reduce model performance.

- Inference attacks are confidentiality attacks that extract insights about the underlying ML model or the training data through the ML model.

- AI Supply Chain attacks are attacks on integrity that exploit vulnerabilities inherited from the traditional software supply chain as well as novel AI-specific weaknesses such as poisoned model repositories, dependency confusion in ML packages, and compromised pre-trained weights.

- Privacy attacks are attacks on confidentiality that exploit unprotected, or poorly protected, data and models for information disclosure.

- Prompt Injection attacks are attacks on confidentiality or availability that manipulate model instructions to bypass safeguards, exfiltrate data, force unintended actions, or cause denial of service.
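The mapping described in the list above can be written down as a small lookup table, which is a convenient starting point for grouping controls by the security property they protect. This is an illustrative sketch: the dictionary and the `attacks_on` helper are hypothetical names, and the entries simply restate the classification given in this article.

```python
# Attack type -> CIA properties it targets, as classified in the list above.
ATTACK_TO_CIA = {
    "Evasion": {"integrity"},
    "Poisoning": {"integrity"},
    "Inference": {"confidentiality"},
    "Supply Chain": {"integrity"},
    "Privacy": {"confidentiality"},
    "Prompt Injection": {"confidentiality", "availability"},
}


def attacks_on(cia_property: str) -> list:
    """List the attack types that target the given CIA property."""
    return sorted(
        name for name, props in ATTACK_TO_CIA.items() if cia_property in props
    )


for prop in ("confidentiality", "integrity", "availability"):
    print(prop, "->", attacks_on(prop))
```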

For the Standardization stage in the mobile industry, AI security standards are to be specified by 3GPP, O-RAN Alliance, and ETSI. NIST and OWASP provide additional public guidance applicable to securing AI in mobile networks. The Development stage leverages a secure-by-design approach to implement software supply chain security and secure coding practices. The Deployment stage provides system hardening through secure configurations and by disabling insecure protocols and unused ports. During the Operations stage, configuration drift triggers automatic alerts, and continuous security monitoring is combined with AI-based analysis of data and logs to detect security incidents.
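Configuration drift detection in the Operations stage can be approximated by comparing a running configuration against an approved baseline. The sketch below is one simple way to do this, assuming configurations are available as flat dictionaries; the function names and the sample settings are hypothetical and do not describe any specific product.

```python
import hashlib
import json

_MISSING = object()  # sentinel so a missing key differs from a key set to None


def fingerprint(config: dict) -> str:
    """Hash a canonical JSON form so key order does not affect the result."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def drifted_keys(baseline: dict, running: dict) -> list:
    """Return the settings whose values differ from the approved baseline."""
    keys = set(baseline) | set(running)
    return sorted(
        k for k in keys if baseline.get(k, _MISSING) != running.get(k, _MISSING)
    )


# Hypothetical hardened baseline and a running configuration that has drifted.
baseline = {"tls_min_version": "1.3", "telnet_enabled": False, "open_ports": [443]}
running = {"tls_min_version": "1.3", "telnet_enabled": True, "open_ports": [23, 443]}

if fingerprint(running) != fingerprint(baseline):
    print("ALERT: configuration drift in", drifted_keys(baseline, running))
```

Comparing fingerprints first keeps the common no-drift case cheap, and the per-key comparison is only needed to tell an operator what changed.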

A summary of AI/ML attacks and mitigations across the evolving AI attack landscape is provided in Figure 3. Further discussion of these AI attacks can be found in [7] and [8], and mitigation of these attacks on AI in mobile networks is discussed in [9].

Figure 3. Evolving AI Attack Landscape detailing attacks and mitigations. Predictive AI and GenAI Attacks follow the traditional Confidentiality/Integrity/Availability (CIA) security paradigm.

NOTE: Traditional Identity and Access Management (IAM) controls for AI systems are also needed to prevent unauthenticated and unauthorized access to training data and models.

Practical view of implementation of Secure AI at Ericsson

Ericsson’s launch of Agentic rApp as a Service (aaS) brings a new operating model for RAN automation to market. Its multi‑tenant offering is designed to accelerate each operator’s path toward higher autonomy and operational efficiency. In this model, the different rApps involved in network optimization are delivered as a service, integrated with the Ericsson Intelligent Automation Platform (EIAP), and built to scale on AWS with cloud‑native services for data integration, analytics, and ML operations. The launch also introduces a step‑change in human interaction and orchestration: operators can move from manual workflows to automated, intent‑driven operations based on GenAI and Agentic AI.

Ericsson’s rApp aaS is both cloud‑hosted on AWS and increasingly autonomous, making it essential to secure AI. The rApp aaS architecture emphasizes security fundamentals: data encryption at rest and in transit, and multi‑tenant isolation so that operators’ data and workloads remain separated inside Ericsson’s AWS‑hosted environment. It also calls out a critical GenAI requirement: data privacy and isolation from model providers, ensuring that prompts, completions, and training data stay within the controlled environment and are not used to train or improve foundation models. This is a key safeguard against unintended data exposure. For operators that require private connectivity, the architecture allows private network options to keep sensitive RAN data flows off the public internet while meeting regional data privacy regulations.

Secure Agentic AI is not only about confidentiality—it’s also built upon a foundation of governance, control, and auditability as AI agents begin to decide and take operational actions. In the rApp aaS design, the Agentic AI layer is supported by policy‑based guardrails (e.g., approval workflows for higher‑risk operations) and end‑to‑end observability so autonomous decisions, tool invocations, and access patterns are logged and reviewable. Combined with least‑privilege access controls, continuous threat detection, and centralized security findings, these controls create a practical “trust envelope” around GenAI and Agentic AI. Operators can adopt intent‑driven automation faster, while maintaining the evidence, oversight, and compliance posture needed to protect customer data at scale.
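To make the auditability idea concrete, the sketch below records agent decisions and tool invocations in an append-only log where each entry carries a hash of the previous one, so later edits to the history can be detected. Hash chaining is an assumption chosen for illustration, not a description of how rApp aaS implements observability, and the `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder previous-hash for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained record of agent activity (illustrative only)."""

    def __init__(self):
        self.entries = []

    def record(self, agent_id: str, action: str, detail: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent_id": agent_id, "action": action,
                "detail": detail, "prev_hash": prev_hash}
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that each entry links to the one before."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("optimizer-agent", "tool_call", {"tool": "read_kpis"})
log.record("optimizer-agent", "approval_requested", {"tool": "set_cell_parameters"})
print("log intact:", log.verify())
```

In practice such a log would also need protected storage and trusted timestamps; the chain only shows whether entries were changed after they were written.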

The road ahead

Securing mobile communication infrastructure, including the AI components within, requires a cooperative approach across all the organizations involved in standardizing, developing, implementing, and operating the network. In addition to traditional controls, AI/ML systems will require specialized security controls to protect against AI-specific types of attacks. Ericsson has adapted the four-layer trust stack to secure AI components within mobile telecommunication networks. As AI technologies become integral to mobile telecommunication systems, the sophistication of evolving cyberattacks is expected to rise. Security mitigations must be flexible, adaptive, and scalable as AI models grow in complexity and data volume. The evolution of Agentic AI introduces an expanded threat surface with the shift from static to dynamic risks that inherit the risks of PredAI and GenAI while introducing new risks from multi-agent systems. A prime example is Ericsson’s launch of Agentic rApp as a Service (aaS) with built-in capabilities for secure AI, through which Ericsson maintains its security posture to support national security by securing critical infrastructure.

Ericsson will continue to align its AI-enabled products with evolving security guidance from NIST, OWASP, and other industry sources. Further security analysis will be needed to select, tailor, and implement mitigations for AI in mobile use cases. Ericsson will continue to lead AI security analysis in industry standards bodies.

1. Protecting critical infrastructure today, Ericsson, November 2025

2. Evolving the security posture of 5G networks, Ericsson, April 2025

3. Artificial Intelligence Risk Management Framework (AI RMF 1.0), NIST AI 100-1, NIST, January 2023

4. Cybersecurity Framework Profile for Artificial Intelligence (Cyber AI Profile), NIST IR 8596, Initial Preliminary Draft, NIST, December 2025

5. Enhancing the security of communication infrastructure, OECD Digital Economy Papers, Organisation for Economic Co-operation and Development (OECD), September 2023

6. OWASP Top 10 for Agentic Applications for 2026, OWASP Gen AI Security Project, OWASP, December 2025

7. Adversarial Machine Learning: A Taxonomy and Terminology of Attacks and Mitigations, NIST AI 100-2e2023, NIST, January 2024

8. Cybersecurity of AI and standardisation, ENISA, March 2023

9. AI/ML cyber security in Telecommunication networks, 2024

Read more

AI agents in telecom network architecture - Ericsson, October 2025

Agentic AI: Pathway to autonomous network level 5 - Ericsson, July 2025

AI Product Security: A Primer for Developers - Ericsson, January 2025

AI/ML Security in mobile telecommunication networks – Ericsson, April 2024

Benefits of AI in security, safety and more – Ericsson, January 2024

OWASP | Agentic AI - Threats and Mitigations, February 2025
