Trust technologies for security assurance

One could argue that the question is too simplistic, yet it captures what people want and expect, and it impacts how successful the network platform will be. For us at Ericsson, who face this question every day, it boils down to not only implementing security functions but also providing security assurance: forming rational arguments as to why the network platform can be considered trustworthy, thereby answering the question with "yes, it is secure".

Trust technologies for security assurance is one of the five key technology trends outlined by our CTO. In the process of addressing security questions for the network platform and its building blocks, we need to bring in new technologies in areas where we either lack solutions today, or where current solutions cannot offer an adequate level of security. Below, we will describe three powerful trust technologies that facilitate stronger security assurance arguments related to:

- AI-driven protection

- Confidential computing

- Secure identities.

AI-driven protection

Emerging networks, with richer capabilities, allow for new services and for a multitude of devices with varying needs and properties. At the same time, the use of virtualization and container technologies causes services to increasingly rely on cloud computing, and thus to separate the aspects of processing from where the actual processing takes place.

Existing methodologies of traditional service-level agreements and perimeter-based defense do not function properly in this highly dynamic world of virtualized resources and dynamic micro-services. Instead, we need automated protection that works from within, intelligently adapts to the working conditions, and is capable of performing analytics of the system in operation.

Machine learning and artificial intelligence techniques are being applied to a wide range of security issues: to detect ongoing attacks based on network traffic or system logs, to detect intrusions or insider threats based on deviations in user behavior, or to support threat intelligence.

An approach that is gaining popularity is to use unsupervised machine learning to detect attacks through their deviation from normal behavior, thereby opening the possibility of detecting “zero day” (not previously seen) threats. Also based on anomaly-detection principles, user behavior analytics systems collect data about users accessing systems (time of day, which systems, data access) and attempt to detect intrusions or insider threats through deviations from historical use or from reference groups.
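To make the anomaly-detection principle concrete, here is a minimal sketch in Python. It is an illustration only, not a description of any Ericsson system: the feature choice (systems accessed and megabytes read per session), the sample data, the function names and the z-score threshold of 3.0 are all assumptions made for the example. A baseline is learned from unlabeled historical sessions, and a new session is flagged when any feature deviates strongly from that baseline.

```python
from statistics import mean, stdev


def fit_baseline(history):
    """Learn a per-feature (mean, standard deviation) pair from unlabeled history."""
    columns = zip(*history)
    return [(mean(col), stdev(col)) for col in columns]


def z_score(value, mu, sigma):
    """Distance from the mean in standard deviations; handles a constant feature."""
    if sigma == 0:
        return 0.0 if value == mu else float("inf")
    return abs(value - mu) / sigma


def anomaly_score(baseline, observation):
    """Largest absolute z-score across all features of one observation."""
    return max(z_score(v, mu, sigma) for v, (mu, sigma) in zip(observation, baseline))


def is_anomalous(baseline, observation, threshold=3.0):
    """Flag the observation if it deviates more than `threshold` (an assumed value)."""
    return anomaly_score(baseline, observation) > threshold


# Hypothetical history for one user: (systems accessed, MB read) per session.
history = [(3, 40.0), (4, 55.0), (3, 48.0), (5, 60.0), (4, 52.0), (3, 45.0)]
baseline = fit_baseline(history)

typical_session = (4, 50.0)   # close to historical use
unusual_session = (4, 900.0)  # far more data read than ever before

print(is_anomalous(baseline, typical_session))
print(is_anomalous(baseline, unusual_session))
```

No labeled attack data is needed, which is what lets this style of detector react to behavior it has never seen. Production systems would use richer models and features, but the comparison against historical use follows the same pattern.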

Another example is user authentication, where the wealth of sensor data available from personal devices, like cell phones, is used as an additional authentication factor by building a model of a user’s typical behavior.

There are also efforts to assist security analysts by collecting and sifting through threat information and finding the most relevant information when an alert occurs. Natural language processing and other techniques are used to augment more traditional threat data with information from online documents.

We believe that machine learning and artificial intelligence will play an important role in securing billions of diverse connected devices – devices that in many cases will be physically exposed. While the current trend is to gather and centralize data into large data lakes, for 5G infrastructure protection we see a possible role for a more distributed and possibly hierarchical approach, where fast local decisions need to be made while slower global decisions influence local policies. The engineering of such systems might benefit from architectures such as distributed cloud, with a symbiosis of edge computing and traditional cloud computing, and from a service-based view on the network functions.

At Ericsson Research Security, we are applying machine learning and formal methods to proactively verify the security properties of a cloud setup. This yields efficient tools for giving security assurance guarantees. For more detail, please take a look at some of these links: the Ericsson Technology Review articles Securing the cloud with compliance auditing and Security expectations drive cutting edge research in the cloud, the bringing compliance to the cloud web page, and an NDSS symposium paper on runtime verification of VM-level network isolation.

Confidential computing

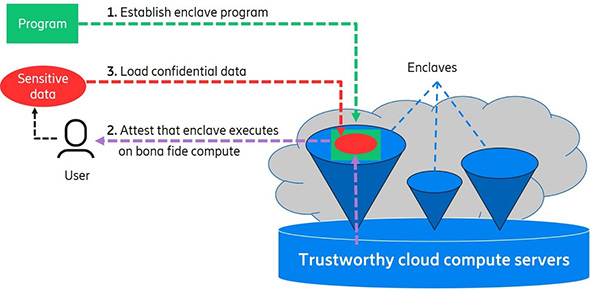

Another important development in security is the advance of trusted execution environments into what are now called enclave technologies. The concept of enclaves is to have execution environments where code and data are kept isolated and protected through hardware-enforced mechanisms. The isolation also uses encryption and integrity protection so that other enclaves and processes in other environments cannot read or tamper with code and data in a particular enclave.

Fig 2: Confidential cloud computing on the basis of enclave technology

With these hardware technologies we can realize confidential computing: creating processing services in a cloud infrastructure that can process data with strong guarantees that both data and processing are kept confidential. Although this technology is maturing rapidly, its system integration in cloud processing has yet to happen, partly because of technical limitations in the first-generation hardware, but also because cloud providers and their customers want an enclave solution that is generic.

We believe that hardware-based security, trusted computing, and the potential of secure enclaves form the basis for building security in future networks, especially in virtualized and distributed systems, where enclaves serve as trustworthy anchor points in the cloud for executing mission-critical code and processing sensitive and confidential data.

This motivates us at Ericsson Research Security to investigate methods for using enclaves, for example for privacy-preserving analytics, an effort carried out in collaboration with UC Berkeley RISELab.

Secure identities

Now we come to the last technology we want to highlight. It’s about secure digital identities. Such identities are crucial to maintain ownership of data, to authenticate users and systems when interacting with other systems, and to enforce correct authorization. Sure, this is not new, but the use of web and cloud technologies is making the need for efficient identity management solutions a key question.

In mobile networks, we have the SIM AKA-based solutions using hardware-based technologies for protecting the keys. This has worked very well in the past and will continue to do so in many 5G use cases. However, we also see a need for 5G to cater for solutions addressing the different needs of machine-to-machine and low-cost IoT systems. This is reflected in the generic authentication framework defined for 5G by 3GPP SA3, which you can read about in our white paper 5G security – enabling a trustworthy 5G system. This new framework allows for different types of credentials such as certificates, pre-shared keys, or token cards in addition to traditional SIM cards and more recent advances such as the iUICC – a solution defined by ETSI for integrating the SIM as a subsystem on the hardware of a device.

We also need identities for the operation and orchestration of the network. Securely connecting micro-services into an overall network service implicitly assumes that there are identities in place for securing the communication among the micro-services and for protecting the use of their APIs. In particular, when 3GPP introduces the service-based architecture, we need efficient means to orchestrate identities for many more subsystems than ever before.

Coupled with the increased use of security in applications, we are facing new requirements on latency, overhead, and processing. 5G systems are expected to work with massive numbers of low-cost IoT devices and in applications with extreme demands on latency. Protocols are also expected to provide perfect forward secrecy, and there is active development of new and updated authentication protocols to meet these demands, such as TLS 1.3, QUIC, EAP-TLS, OSCORE and EDHOC.

We at Ericsson Research Security have long been and are still intensively engaged in the standardization of new, secure and efficient protocols for the network platform. Much of this work is described in these earlier blog posts about IMSI catchers, evolving focus internet security, IETF 2012, and IoT security.

But our research efforts also extend to what lies ahead. If large enough quantum computers are built, existing identity management systems using public-key cryptography will lose most of their security, and we will need to replace them with new algorithms. The 128-bit symmetric keys used in cellular systems since 3G will not be practical to attack with quantum computers, but for asymmetric solutions there is ongoing work by NIST to define cryptographic algorithms that can withstand attacks from quantum computers.
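A rough back-of-the-envelope calculation helps show why symmetric keys fare better. The sketch below assumes the generic quantum attack on a symmetric key is Grover's search, which is not named in the text above but is the standard reference point: it needs on the order of the square root of the number of possible keys in sequential evaluations. The function name is hypothetical and constant factors are ignored.

```python
import math


def grover_evaluations(key_bits):
    """Approximate sequential key evaluations for Grover search: sqrt(2**key_bits).

    Constant factors and quantum hardware overheads are ignored (assumption).
    """
    return math.isqrt(2 ** key_bits)


classical_work = 2 ** 128                      # exhaustive classical key search
quantum_work = grover_evaluations(128)         # square root of the key space
effective_bits = quantum_work.bit_length() - 1

print(f"classical search: 2^{classical_work.bit_length() - 1} evaluations")
print(f"Grover search:    2^{effective_bits} evaluations")
```

Under these assumptions a 128-bit key still requires about 2^64 quantum evaluations, which must run largely one after another. This is a reduction rather than a break, unlike the effect of quantum algorithms on today's public-key schemes.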

Summary

Security is a topic that has climbed high on the agenda all over the world, whether in the regulatory domain, in building critical infrastructure, or among the general public, because it is key to enabling the potential of a connected society.

As always, the security of a system as a whole depends on technical solutions, implementation aspects, processes, and different means for detection and recovery. We have discussed three technologies that we believe will have a key impact when it comes to defining and assuring the security of the future network platform, so that it can meet various demands and ultimately let us give an affirmative answer to the question “is it secure?”: yes, it is.
