How AI-enabled router configuration improves QoS in 5G networks

Quality of Service (QoS) support is needed in 5G to provide differentiated services to multiple types and tiers of customers. For instance, the latency and reliability requirements of a connected robotic control application are vastly different from those of a music streaming application. However, both applications may share the same network resources, which requires efficient scheduling and prioritization.

Network slicing is one way to meet the requirements of particularly demanding use cases. Slicing has been proposed in the 5G context to meet the diverse requirements of ultra-reliable low-latency communication (URLLC), massive machine-type communication (mMTC) and enhanced mobile broadband (eMBB).

5G use cases will have varying traffic conditions: the traffic distribution per network slice and the number of users per service can change dynamically over time. Overprovisioning resources is not always advisable, since it raises deployment costs and uses spectrum inefficiently. To provide the stringent QoS support specified for 5G differentiated services, queue management and port configurations must be resilient to changes in traffic patterns.

A key resource in meeting these requirements is the router, which performs the queue management, congestion control and flow prioritization needed for effective network slicing. Configuring routers has traditionally been an expert-driven process, with static or rule-based configurations for individual flows. Under the dynamically varying traffic conditions expected in 5G use cases, these traditional approaches can produce sub-optimal configurations.

The problems with current approaches include:

- Hardcoded rules are not scalable

- Policies may be infeasible or sub-optimal for current conditions

- Previously unseen problems cannot be tackled

We propose a solution to this problem based on model-based Reinforcement Learning (RL). RL has been applied to routing traffic over networks, but automated configuration of the internal port queues of routers and switches remains relatively unexplored.

For example…

Fig 1. 5G Slice bottleneck in router port

Fig 1 illustrates a scenario where the end-to-end network slice failed to meet its service-level QoS objectives. Using a diagnosis tool, we identified the cause as a statically configured edge router.

The objective of our work is to automatically reconfigure the port queue to alleviate this bottleneck.

Router Queue Modelling

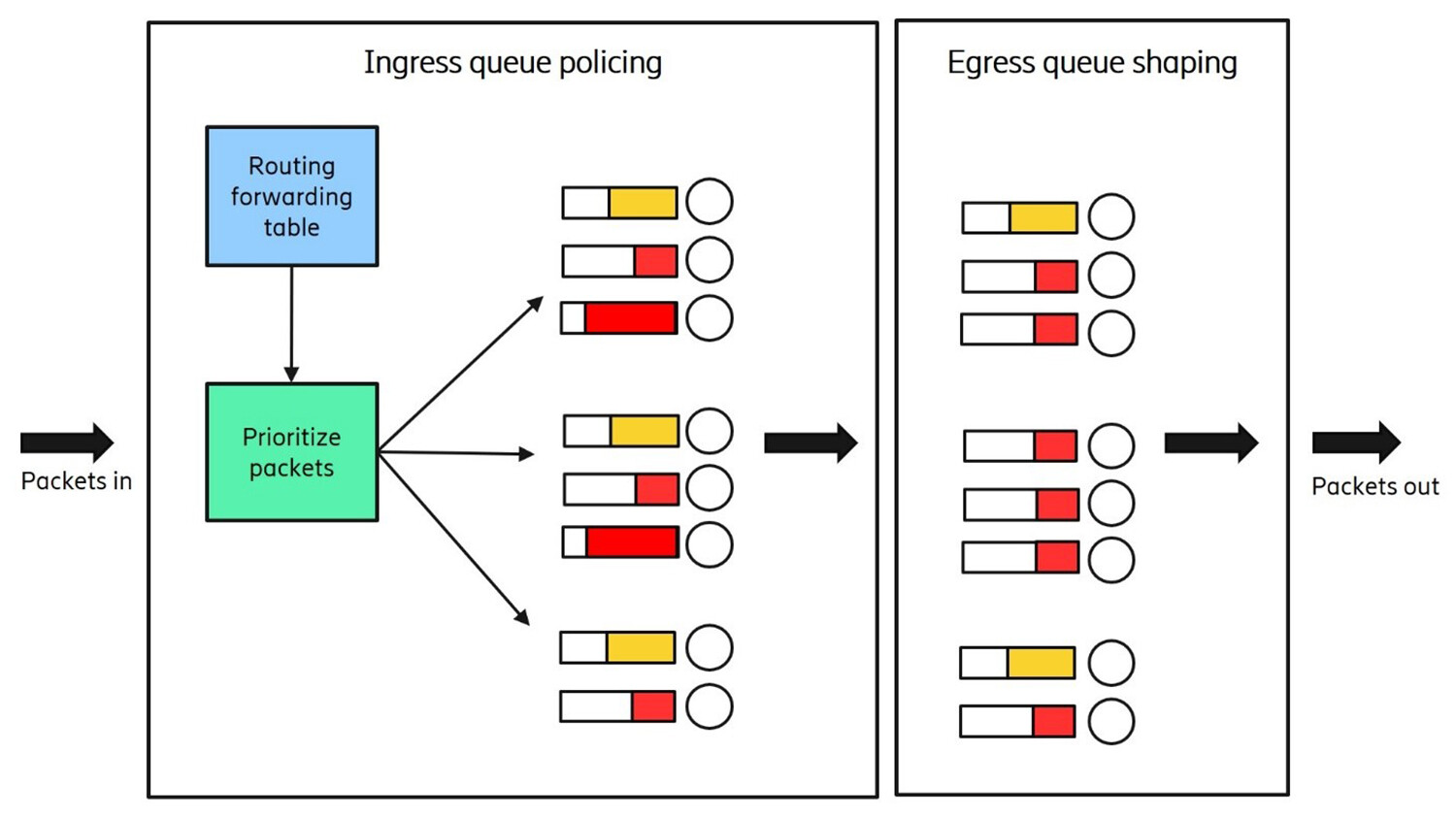

A router typically organizes its network element components into two separate processing planes: the control plane and the forwarding plane. Both switch and router interfaces have ingress (inbound) and egress (outbound) queues. An ingress queue stores packets until the switch or router CPU can forward the data to the appropriate interface. An egress queue stores packets until the switch or router can serialize the data onto the outgoing link.

Fig 2. Router queue policing and shaping

Congestion occurs when ingress traffic arrives faster than it can be processed and serialized on an egress interface. The excess accumulates in the queues, and once a buffer is full, further packets are dropped.
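The congestion mechanism above can be illustrated with a minimal discrete-time sketch of a single egress queue. It assumes a tail-drop buffer of fixed size and a constant egress (serialization) rate per tick; the function name `simulate_egress_queue` and all numbers are hypothetical and not taken from the post.

```python
def simulate_egress_queue(arrivals_per_tick, egress_rate, buffer_size):
    """Discrete-time model of one finite egress queue with tail drop.

    arrivals_per_tick: packets arriving from ingress in each tick
    egress_rate: packets the interface can serialize per tick
    buffer_size: maximum number of packets the queue can hold
    Returns (queue length after each tick, total dropped, total sent).
    """
    queue, dropped, sent = 0, 0, 0
    lengths = []
    for arriving in arrivals_per_tick:
        # Admit packets up to the free buffer space; tail-drop the rest.
        admitted = min(arriving, buffer_size - queue)
        dropped += arriving - admitted
        queue += admitted
        # Serialize up to egress_rate packets onto the link this tick.
        out = min(queue, egress_rate)
        queue -= out
        sent += out
        lengths.append(queue)
    return lengths, dropped, sent


# Ingress at 12 packets/tick exceeds the egress rate of 10 packets/tick.
lengths, dropped, sent = simulate_egress_queue([12] * 10, egress_rate=10, buffer_size=15)
print(lengths, dropped, sent)  # [2, 4, 5, 5, 5, 5, 5, 5, 5, 5] 15 100
```

Once the buffer fills, every packet per tick beyond the egress rate is dropped. With ingress at 8 packets/tick instead, the queue stays empty and nothing is dropped.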

To study the effects of changes in router configurations, we use an elaborate queueing network model that evaluates the ingress and egress queues within the router. The model captures how various configuration and traffic changes affect observed outputs such as packet drop rates, latency and throughput. This is crucial because configuration changes have to be evaluated against live network data within the simulation environment.
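The post's model is a full queueing network whose details are in the paper. As a much smaller stand-in, a single queue can be modelled analytically as an M/M/1/K queue (assuming Poisson arrivals, exponential service times and room for K packets in the system). That is enough to show how drop rate, throughput and latency (residence time, via Little's law) follow from a configuration parameter such as buffer capacity. The function `mm1k_metrics` and the rates used are hypothetical.

```python
def mm1k_metrics(arrival_rate, service_rate, capacity):
    """Steady-state metrics of an M/M/1/K queue.

    arrival_rate: mean packet arrival rate (packets/s), > 0
    service_rate: mean packet service rate (packets/s), > 0
    capacity: K, the maximum number of packets in the system, >= 1
    """
    if arrival_rate <= 0 or service_rate <= 0 or capacity < 1:
        raise ValueError("rates must be positive and capacity at least 1")
    rho = arrival_rate / service_rate
    # Stationary probability of n packets is proportional to rho**n, n = 0..K.
    weights = [rho ** n for n in range(capacity + 1)]
    total = sum(weights)
    probs = [w / total for w in weights]

    # With Poisson arrivals, an arriving packet finds the system full with probability P_K.
    drop_prob = probs[-1]
    throughput = arrival_rate * (1.0 - drop_prob)
    mean_in_system = sum(n * p for n, p in enumerate(probs))
    residence_time = mean_in_system / throughput  # Little's law: L = lambda_eff * W
    return {
        "drop_prob": drop_prob,
        "throughput": throughput,
        "residence_time": residence_time,
        "utilization": 1.0 - probs[0],
    }


# Hypothetical port queue: 900 packets/s offered to a 1000 packets/s server.
for k in (5, 20, 50):
    m = mm1k_metrics(900.0, 1000.0, k)
    print(k, round(m["drop_prob"], 4), round(m["residence_time"] * 1e3, 2), "ms")
```

Larger buffers lower the drop probability but lengthen residence time, which is the kind of trade-off a configuration policy has to balance.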

Solution overview: Reinforcement Learning Modelling for Router Port Configuration

Fig 3. Model-based Reinforcement Learning for port queue configuration

Figure 3 highlights the various aspects of our framework:

- Queueing Model Simulator: In order to study the effects of changes in router configurations, an elaborate queueing network model that evaluates queues within the router is used.

- Port Configuration RL Agent Training: A Partially Observable Markov Decision Process (POMDP) is derived from the queueing model, using the conditional probabilities with which an action affects the state, observations and rewards. The reinforcement learning agent is trained to take actions that yield optimal port queue configurations.

- Optimal Configuration Deployment: The trained policy is deployed over the observed router traffic and is shown to provide a fair, prioritized allocation of resources to queues that prevents bottlenecks.
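The post does not publish the POMDP itself. A core operation in any POMDP agent is the belief update: because the true queue state is only partially observable, the agent keeps a probability distribution over states and revises it after each action and observation, b'(s') ∝ O(o | s', a) Σ_s T(s' | s, a) b(s). The sketch below assumes a hypothetical two-state model (`ok`, `congested`), two actions and two delay observations; all probabilities are illustrative, not values derived from the authors' queueing model.

```python
def belief_update(belief, action, observation, T, O):
    """Bayesian belief update for a discrete POMDP.

    belief: dict state -> probability
    T[action][s][s_next]: transition probability
    O[action][s_next][observation]: observation probability
    """
    new_belief = {}
    for s_next in belief:
        # Predict: probability of landing in s_next after taking `action`.
        predicted = sum(T[action][s][s_next] * p for s, p in belief.items())
        # Correct: weight by how likely the observation is in s_next.
        new_belief[s_next] = O[action][s_next][observation] * predicted
    norm = sum(new_belief.values())
    if norm == 0:
        raise ValueError("observation is impossible under the current belief")
    return {s: p / norm for s, p in new_belief.items()}


# Hypothetical model of one port queue (illustrative numbers only).
T = {
    "keep": {"ok": {"ok": 0.9, "congested": 0.1},
             "congested": {"ok": 0.2, "congested": 0.8}},
    "reweight": {"ok": {"ok": 0.95, "congested": 0.05},
                 "congested": {"ok": 0.7, "congested": 0.3}},
}
# In this sketch, delay observations depend only on the resulting state.
O = {a: {"ok": {"low_delay": 0.8, "high_delay": 0.2},
         "congested": {"low_delay": 0.1, "high_delay": 0.9}} for a in T}

b = {"ok": 0.5, "congested": 0.5}
b = belief_update(b, "keep", "high_delay", T, O)
print(b)
```

After observing high delay with the configuration unchanged, the belief shifts toward `congested` (roughly 0.79), a state that a policy could map to a reconfiguration action such as the hypothetical `reweight`.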

For full details of the solution, please refer to our paper “Automated Configuration of Router Port Queues via Model-Based Reinforcement Learning”.

Example output: Ingress queue policing policy

To demonstrate the effect of applying the reinforcement learning framework to a sub-optimally configured port, we built a queueing network simulation model that replicates the 5G slice bottleneck scenario. Figure 4 shows that the utilization and measured queue length of queue 7 are high, while queue 1 has lower than usual utilization. Traffic was unevenly distributed across the other queues as well.

The objective was to generate a policy that would alleviate this load, while maintaining priorities of individual flows within the system.
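The imbalance described for Figure 4 can be quantified with per-queue utilization ρ_i = λ_i / μ_i. As one possible summary of how evenly the queues are used, the sketch also computes Jain's fairness index, which equals 1 when all queues are equally loaded; the post does not say which fairness measure, if any, the authors used. The queue rates and the 0.9 threshold are hypothetical, not values read from Figure 4.

```python
def utilizations(arrival_rates, service_rates):
    """Per-queue utilization rho_i = lambda_i / mu_i."""
    return {q: arrival_rates[q] / service_rates[q] for q in arrival_rates}


def jain_fairness(values):
    """Jain's index (sum x)^2 / (n * sum x^2); 1.0 means perfectly even."""
    values = list(values)
    squares = sum(v * v for v in values)
    if squares == 0:
        raise ValueError("all values are zero")
    return sum(values) ** 2 / (len(values) * squares)


def hot_queues(utils, threshold=0.9):
    """Queue ids whose utilization is at or above the threshold."""
    return sorted(q for q, u in utils.items() if u >= threshold)


# Hypothetical snapshot: queue 7 is overloaded while queue 1 is nearly idle.
arrivals = {1: 100.0, 2: 400.0, 3: 500.0, 7: 950.0}  # packets/s
service = {1: 1000.0, 2: 1000.0, 3: 1000.0, 7: 1000.0}
u = utilizations(arrivals, service)
print(hot_queues(u), round(jain_fairness(u.values()), 3))  # [7] 0.719
```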

Fig 4. Ingress queue simulation outputs

A POMDP model was trained to generate a policy that can appropriately reconfigure the system. The reward function was designed to reward improvements in observed throughput, residence times and packet drop rates. The policy was applied to the router model and, as Figure 5 shows, significantly improved the fair use of all queues despite varying traffic patterns.
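The post states only that the reward favours improvements in throughput, residence time and packet drop rate. One simple way to express that, assuming relative changes between consecutive observations and equal weights, is shown below; the `QueueStats` type, the weights and the normalization are illustrative choices, not the authors' reward.

```python
from dataclasses import dataclass


@dataclass
class QueueStats:
    throughput: float      # delivered packets/s
    residence_time: float  # mean time a packet spends in the queue, seconds
    drop_rate: float       # fraction of packets dropped, 0..1


def reward(before, after, w_tp=1.0, w_rt=1.0, w_drop=1.0, eps=1e-9):
    """Score a configuration change by how much it improved the queue.

    Positive when throughput rises, residence time falls or drops fall.
    """
    tp_gain = (after.throughput - before.throughput) / max(before.throughput, eps)
    rt_gain = (before.residence_time - after.residence_time) / max(before.residence_time, eps)
    drop_gain = before.drop_rate - after.drop_rate
    return w_tp * tp_gain + w_rt * rt_gain + w_drop * drop_gain


before = QueueStats(throughput=1000.0, residence_time=0.010, drop_rate=0.05)
after = QueueStats(throughput=1100.0, residence_time=0.008, drop_rate=0.01)
print(round(reward(before, after), 3))  # 0.34
```

The weights are where slice priorities could enter: for example, a larger `w_drop` would favour loss-sensitive traffic.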

Fig 5. Improvement in observed queue length after policy deployment

Conclusions

Static configurations of the edge and aggregation routers used in 5G networks rely on human experts and cannot adapt dynamically or learn from errors in deployment. Our work presents an automated technique for router port configuration that adapts to changes in traffic patterns and user requirements.

Through accurate modelling of the ingress and egress queues, policies are generated using Partially Observable Markov Decision Processes. The research described in this post has shown this approach to be effective for traffic policing and shaping across a range of router configurations, including changes to priorities, weights, queueing disciplines, drop rates and bandwidth limits. Our results were tested on actual Ericsson deployment use cases with router configurations.
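Traffic policing with a bandwidth limit is commonly implemented with a token bucket: tokens accumulate at the committed rate up to a burst size, and a packet conforms only if enough tokens are available. The post does not describe the router's policer, so the class below is a generic single-rate sketch; `rate_bps` and `burst_bytes` are hypothetical parameters of the kind a configuration policy could tune. A shaper would delay non-conforming packets instead of dropping them.

```python
class TokenBucketPolicer:
    """Single-rate token bucket policer (generic sketch)."""

    def __init__(self, rate_bps, burst_bytes):
        self.rate = rate_bps / 8.0        # token refill rate in bytes/s
        self.burst = burst_bytes          # bucket depth in bytes
        self.tokens = float(burst_bytes)  # start with a full bucket
        self.last = 0.0                   # time of the previous update, seconds

    def conforms(self, packet_bytes, now):
        """Return True if the packet is within profile; False means drop or remark."""
        # Refill tokens for the time elapsed since the last packet, capped at the burst.
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= packet_bytes:
            self.tokens -= packet_bytes
            return True
        return False


# Hypothetical 8 kbit/s (1000 bytes/s) limit with a 1500-byte burst.
policer = TokenBucketPolicer(rate_bps=8000, burst_bytes=1500)
print([policer.conforms(size, t) for size, t in [(1500, 0.0), (1000, 0.5), (1000, 1.0)]])
# [True, False, True]
```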

We believe that frameworks like this for AI-based configuration of networking elements will become commonplace in the future, allowing 5G and 6G routers and switches to dynamically learn, act and scale.


Learn more

Read the full paper by Ajay Kattepur, Sushanth David & Swarup Mohalik: “Automated Configuration of Router Port Queues via Model Based Reinforcement Learning”, Data Driven Intelligent Networking Workshop, International Conference on Communications (ICC), 2021.

